Prelude

Three months ago I spent an entire Friday afternoon reviewing a single pull request. It was 1,400 lines across 23 files, a refactor of our authentication middleware combined with a database migration and new API endpoints. By the time I reached the fifteenth file, I was skimming. I knew I was skimming. I told myself I would come back to the files I had glossed over, but the weekend happened, Monday brought new priorities, and the PR got approved with a "looks good to me" that really meant "I am too tired to keep reading."

Two weeks later we found a SQL injection vulnerability in that PR. It was in file seventeen. The one I had skimmed.

That experience changed how I think about code review. Not because the tooling was wrong, but because the process depended entirely on sustained human attention across hundreds of lines of code. And human attention is a finite, depletable resource. We do not ask humans to manually check every pixel on a screen for rendering bugs. We write visual regression tests. We do not ask humans to manually verify every API response format. We write contract tests. But for some reason, we still ask humans to manually read every line of every diff and catch every security issue, every logic error, every performance problem, every style violation.

AI code review does not replace the human reviewer. It replaces the part of the process where the human reviewer is worst: sustained, consistent attention to mechanical detail across large diffs. This guide covers how to set up AI code review with Claude Code, from interactive local reviews to fully automated GitHub Actions pipelines, what it actually catches, what it misses, and how to combine it with human review for results that neither achieves alone.

The Problem

Code review is one of the highest-value activities in software engineering. It catches bugs before they reach production, enforces coding standards across the team, spreads knowledge about the codebase, and creates a written record of design decisions. Published research on software quality, along with industry guidance such as Google's engineering practices, consistently points to effective code review as a way to reduce defect rates.

The problem is not that code review is unimportant. The problem is that it is done by humans who are subject to cognitive limitations that undermine the very benefits it is supposed to deliver.

Reviewer fatigue. Research on code review effectiveness shows that review quality drops sharply after about 200 lines of code. After 400 lines, reviewers catch fewer than half the defects they would catch in a shorter review. Most production PRs exceed 200 lines. Many exceed 500. The reviewer who approves the PR at 4:30pm on a Friday is not the same reviewer who started the day fresh.

Inconsistency. Different reviewers focus on different things. One reviewer catches every naming convention violation but misses security issues. Another spots performance problems but ignores error handling. The coverage depends entirely on who happens to be assigned the review and what they happen to be thinking about that day. There is no guarantee that any given review covers all the categories that matter.

Bottlenecks. On most teams, a small number of senior developers carry the bulk of the review load. These are the same people who need the most uninterrupted time for complex technical work. Every review request is an interruption. Every review queue that grows longer than a day slows the entire team. Junior developers wait for feedback. Branches grow stale. Merge conflicts accumulate.

Blind spots in familiar code. Reviewers who know the codebase well develop assumptions about how things work. They read code through the lens of "how I would have written it" rather than "what does this actually do." Subtle bugs that violate those assumptions slip through because the reviewer's mental model fills in the gaps. A fresh set of eyes catches more, but a fresh set of eyes is not always available.

Context switching cost. A thorough code review requires loading the entire context of the change into working memory: what the PR is trying to accomplish, how it fits into the broader architecture, what the relevant edge cases are, what the testing strategy should be. This context load takes 15 to 30 minutes for a non-trivial PR. If the reviewer is interrupted mid-review, much of that context is lost and must be rebuilt.

These are not problems that can be solved by telling developers to "review more carefully." They are structural limitations of asking humans to perform a task that requires sustained, consistent, detail-oriented analysis across large volumes of text. This is exactly the kind of task where AI assistance delivers genuine value. Not by replacing the human, but by handling the mechanical layer so the human can focus on the parts that actually require human judgement.

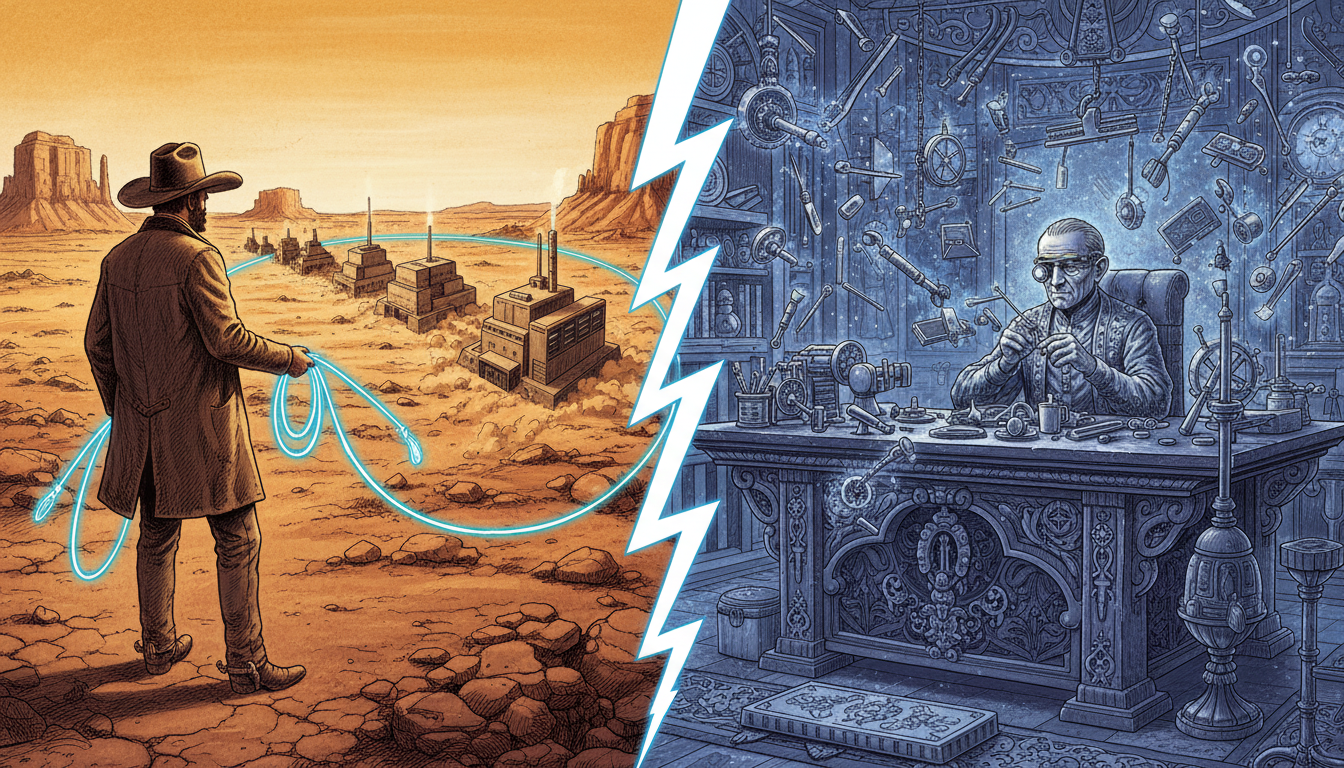

The Journey

Interactive Review: Your First AI Code Review

The simplest way to start with AI code review is to use Claude Code interactively on a local branch. No CI/CD setup required. No configuration files. Just Claude Code and a git diff.

Suppose a colleague has opened a pull request and you have been assigned as reviewer. Check out their branch locally.

git fetch origin

git checkout feature/add-payment-processing

Now start Claude Code and ask it to review the changes against main.

claude

Inside the Claude Code session:

Review the changes on this branch compared to main. Focus on:

1. Security vulnerabilities (SQL injection, XSS, command injection, auth bypasses)

2. Logic errors (off-by-one, null/undefined handling, error propagation)

3. Performance problems (N+1 queries, unnecessary allocations, missing indexes)

4. Consistency with existing codebase patterns

Show me the specific lines where you find issues, explain why each is a problem, and suggest a fix.

Claude reads the diff, examines the surrounding code for context, and produces a structured review. This is already more thorough than most manual reviews because Claude does not get tired at file seventeen, does not skim when the diff gets long, and checks every category you specify on every file.

The interactive approach works well for one-off reviews, but it requires you to be at your terminal. For a faster, non-interactive option, pipe the diff directly to Claude.

Pipe-Based Review: One Command, Full Review

The pipe-based approach is ideal for quick reviews where you do not need a conversation. It runs Claude Code in print mode, which means Claude processes the input, produces output, and exits. No interactive session.

git diff main...HEAD | claude --print "Review this diff for security vulnerabilities, logic errors, and performance issues. Format findings as a markdown list with file path, line number, severity (critical/warning/info), and description."

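This command sends the changes on your branch since it diverged from main to Claude (the three-dot range main...HEAD diffs against the merge base, not the current tip of main), asks for a structured review, and prints the results to your terminal. The entire process takes 30 to 60 seconds depending on diff size.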

You can make this even more useful by creating a shell alias.

# Add to your .bashrc or .zshrc

alias review='git diff main...HEAD | claude --print "Review this diff for security vulnerabilities, logic errors, performance issues, and consistency problems. Group findings by severity. For each finding, include the file path, line number, and a specific fix suggestion."'

Now running review on any feature branch gives you a complete AI code review in under a minute.

For larger diffs, you can narrow the scope.

# Review only changes in the src/auth directory

git diff main...HEAD -- src/auth/ | claude --print "Security-focused review of authentication changes. Check for auth bypasses, token handling issues, and session management problems."

# Review only the most recent commit

git diff HEAD~1 HEAD | claude --print "Review this commit for issues."
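When a diff is too large to narrow by hand, one option is to split it per file and review it in batches. The following is a minimal sketch, not part of Claude Code: the function names `split_diff_by_file` and `batch_chunks` and the 200-line default are illustrative assumptions (the threshold simply echoes the fatigue figure quoted earlier).

```python
import re


def split_diff_by_file(diff_text: str) -> dict[str, str]:
    """Split unified `git diff` output into one chunk per changed file."""
    chunks: dict[str, str] = {}
    current_path = None
    current_lines: list[str] = []
    for line in diff_text.splitlines(keepends=True):
        # Each file section in git's output starts with a "diff --git" header.
        match = re.match(r"diff --git a/(.+) b/(.+)$", line.rstrip("\n"))
        if match:
            if current_path is not None:
                chunks[current_path] = "".join(current_lines)
            current_path = match.group(2)
            current_lines = []
        if current_path is not None:
            current_lines.append(line)
    if current_path is not None:
        chunks[current_path] = "".join(current_lines)
    return chunks


def batch_chunks(chunks: dict[str, str], max_lines: int = 200) -> list[list[str]]:
    """Group file paths into batches whose combined diff stays within max_lines."""
    batches: list[list[str]] = []
    current: list[str] = []
    used = 0
    for path, text in chunks.items():
        size = text.count("\n")
        if current and used + size > max_lines:
            batches.append(current)
            current, used = [], 0
        current.append(path)
        used += size
    if current:
        batches.append(current)
    return batches
```

Each batch of paths can then be passed to `git diff main...HEAD -- <paths>` and piped to Claude as in the commands above. A single file larger than the limit still ends up in a batch of its own.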

The pipe-based approach is fast and scriptable, but it lacks persistent context. Claude sees only the diff, not your project's conventions or review standards. To give Claude that context, create a review skill.

Creating a Review Skill

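A Claude Code skill is a markdown file under .claude/skills/ that gives Claude reusable instructions for a specific task. A review skill encodes your team's review standards, focus areas, and output format so that every review follows the same structure.

Create the file .claude/skills/review.md with the content below. Recent Claude Code releases discover skills as a directory containing a SKILL.md file with a short name and description in YAML front matter (for example .claude/skills/review/SKILL.md). If your version expects that layout, put the same content there and adjust the paths used in the prompts in this guide.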

# Code Review Skill

## Review Process

1. Read the full diff between the current branch and main

2. For each changed file, also read the unchanged surrounding code to understand context

3. Check every change against the categories below

4. Produce a structured report grouped by severity

## Review Categories

### Critical (must fix before merge)

- SQL injection, XSS, command injection, path traversal

- Authentication or authorisation bypasses

- Credentials or secrets hardcoded in source

- Data loss risks (destructive operations without confirmation)

- Race conditions in concurrent code

### Warning (should fix, may approve with tracked follow-up)

- Unchecked error returns or swallowed exceptions

- Missing null/undefined checks on external input

- N+1 query patterns or unnecessary database round trips

- Resource leaks (unclosed connections, file handles, streams)

- Missing input validation on API endpoints

### Info (suggestions for improvement)

- Naming convention inconsistencies

- Dead code or unreachable branches

- Missing or inadequate comments on complex logic

- Opportunities for existing utility functions

- Test coverage gaps for new code paths

## Output Format

For each finding, include:

- **File**: path/to/file.ext:LINE_NUMBER

- **Severity**: Critical | Warning | Info

- **Issue**: One-sentence description

- **Why**: Why this matters (security impact, data corruption risk, performance cost)

- **Fix**: Specific code change or approach to resolve

## Project-Specific Rules

- All database queries must use parameterised statements

- API endpoints must validate Content-Type header

- Error responses must not leak internal implementation details

- All new public functions require doc comments

- Async functions must have timeout handling

With this skill in place, you can invoke it during any Claude Code session.

Use the review skill to review changes on this branch against main.

Or from the command line:

git diff main...HEAD | claude --print "Use the review skill from .claude/skills/review.md to review this diff."

The skill ensures that every review, whether run by you, a colleague, or an automated pipeline, checks the same categories in the same order with the same severity classifications. This consistency is one of the biggest advantages of AI code review over purely manual review. For more on structuring prompts and skills for different tasks, the system prompt library guide covers patterns that work well across review, debugging, and generation workflows.
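Because the skill fixes the output format, the report can also be post-processed by a script. The sketch below parses the File / Severity / Issue / Why / Fix fields defined in the skill and groups findings by severity. It is an illustration only: `parse_findings` and `group_by_severity` are hypothetical helpers, and model output is not guaranteed to follow the format exactly, so lines that do not match are skipped.

```python
import re
from collections import defaultdict

# Matches bullet lines such as "- **Severity**: Critical"
FIELD = re.compile(r"^\s*-\s*\*\*(File|Severity|Issue|Why|Fix)\*\*:\s*(.*)$")


def parse_findings(report: str) -> list[dict[str, str]]:
    """Turn a report in the skill's output format into a list of dicts."""
    findings: list[dict[str, str]] = []
    current: dict[str, str] = {}
    for line in report.splitlines():
        match = FIELD.match(line)
        if not match:
            continue  # free text between findings is ignored
        key, value = match.group(1).lower(), match.group(2).strip()
        if key == "file" and current:
            # A new File line starts the next finding.
            findings.append(current)
            current = {}
        current[key] = value
    if current:
        findings.append(current)
    return findings


def group_by_severity(findings: list[dict[str, str]]) -> dict[str, list[dict[str, str]]]:
    """Group parsed findings under their Severity value."""
    grouped: dict[str, list[dict[str, str]]] = defaultdict(list)
    for finding in findings:
        grouped[finding.get("severity", "Unknown")].append(finding)
    return dict(grouped)


report = """\
- **File**: src/auth/login.py:42
- **Severity**: Critical
- **Issue**: Query built with string concatenation
- **Why**: Allows SQL injection
- **Fix**: Use a parameterised statement

- **File**: src/api/orders.py:17
- **Severity**: Warning
- **Issue**: Missing null check on payload
- **Why**: Crashes on malformed input
- **Fix**: Validate before access
"""
findings = parse_findings(report)
grouped = group_by_severity(findings)
```

The file paths and findings in `report` are invented sample data.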

Feedback-Severity Rubric

| Severity | Action | Scope | Examples from Real PR Templates | Review Response |

|---|---|---|---|---|

| Blocker | Must fix before merge | Security, data loss, contract break | SQL injection, auth bypass, hardcoded secrets, destructive migrations | Request changes; block merge |

| Major | Fix in this PR or tracked issue | Correctness, reliability | Unchecked error, missing null check, N+1 query, resource leak | Request changes or approve with issue |

| Suggestion | Worth considering | Style, readability, minor refactors | Function length, variable naming, duplicated helper | Comment; non-blocking |

| Nit | Preference | Formatting, trailing whitespace | Import ordering, comment wording | Comment labelled nit:; approve regardless |

Data source: Google's code review severity guidelines, conventional comments, and Kubernetes review conventions, as of 2026-04. Permalink: systemprompt.io/guides/ai-code-review-claude#feedback-severity-rubric.
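The rubric can be read as a small decision rule: the most severe finding determines the review response. Here is a sketch of that rule. The mapping from the skill's Critical / Warning / Info labels onto the rubric's levels is my assumption, as is returning "Approve" when there are no findings; the response strings are copied from the table.

```python
# Higher rank means more severe.
RANK = {"Blocker": 3, "Major": 2, "Suggestion": 1, "Nit": 0}

# Assumed mapping from the review skill's labels to the rubric's levels.
SKILL_TO_RUBRIC = {"Critical": "Blocker", "Warning": "Major", "Info": "Suggestion"}

RESPONSE = {
    "Blocker": "Request changes; block merge",
    "Major": "Request changes or approve with issue",
    "Suggestion": "Comment; non-blocking",
    "Nit": "Comment labelled nit:; approve regardless",
}


def review_response(severities: list[str]) -> str:
    """Return the review response for the most severe finding in the list."""
    if not severities:
        return "Approve"  # assumption: nothing found means nothing to block on
    levels = [SKILL_TO_RUBRIC.get(s, s) for s in severities]
    unknown = [level for level in levels if level not in RANK]
    if unknown:
        raise ValueError(f"unknown severity labels: {unknown}")
    worst = max(levels, key=RANK.__getitem__)
    return RESPONSE[worst]
```

For example, `review_response(["Info", "Critical"])` yields the Blocker response, because one critical finding outweighs any number of suggestions.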

Automated Reviews with GitHub Actions

Interactive and pipe-based reviews are useful for individual developers. Automated reviews make AI code review a team-wide standard. Every PR gets reviewed. No exceptions, no delays, no reviewer availability bottlenecks.

The setup uses the official anthropics/claude-code-action@v1 GitHub Action, which runs the full Claude Code runtime inside a GitHub Actions runner. Claude has access to your repository files, git history, diff context, and any configuration files you include.

Create the workflow file .github/workflows/ai-code-review.yml:

name: AI Code Review

on:
  pull_request:
    types: [opened, synchronize]
    paths-ignore:
      # '**.md' matches markdown files at any depth; '*.md' would match only the repository root
      - '**.md'
      - 'docs/**'
      - '.github/**'
      - 'LICENSE'

permissions:
  contents: read
  pull-requests: write
  # Assumption: required when the action authenticates through its default
  # GitHub App via OIDC; check the action's README for your setup.
  id-token: write

concurrency:
  group: ai-review-${{ github.event.pull_request.number }}
  cancel-in-progress: true

jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - uses: anthropics/claude-code-action@v1
        with:
          anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
          prompt: |
            Review the changes in this pull request. Follow the review
            skill in .claude/skills/review.md if it exists, otherwise
            use these guidelines:

            Focus areas (in priority order):
            1. Security vulnerabilities
            2. Logic errors and edge cases
            3. Performance problems
            4. Error handling gaps
            5. Consistency with existing code patterns

            For each issue found:
            - Reference the specific file and line number
            - Explain the impact clearly
            - Suggest a concrete fix

            If the PR looks correct, say so briefly. Do not generate
            praise or filler. Be direct and specific.

This workflow triggers on every PR open and update, skips documentation-only changes, cancels redundant runs when developers push multiple commits quickly, and gives Claude read access to the repository and write access to post review comments.
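The "skips documentation-only changes" behaviour follows from how paths-ignore works: the workflow runs unless every changed file matches one of the ignore patterns. The sketch below approximates that rule. It handles only `*` and `**` and is not GitHub's actual matcher, which supports more syntax; `glob_to_regex` and `should_run` are hypothetical names.

```python
import re


def glob_to_regex(pattern: str) -> re.Pattern:
    """Translate a small subset of GitHub's filter syntax (`*` and `**` only)."""
    parts = []
    i = 0
    while i < len(pattern):
        if pattern.startswith("**", i):
            parts.append(".*")       # `**` may cross directory separators
            i += 2
        elif pattern[i] == "*":
            parts.append("[^/]*")    # `*` stays within one path segment
            i += 1
        else:
            parts.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(parts))


def should_run(changed_files: list[str], ignore_patterns: list[str]) -> bool:
    """Run unless every changed file matches at least one ignore pattern."""
    regexes = [glob_to_regex(p) for p in ignore_patterns]
    return any(
        not any(regex.fullmatch(path) for regex in regexes)
        for path in changed_files
    )


patterns = ["*.md", "docs/**", ".github/**", "LICENSE"]
docs_only = should_run(["README.md", "docs/setup/guide.md"], patterns)
mixed = should_run(["README.md", "src/app.py"], patterns)
nested_md = should_run(["src/notes.md"], patterns)
```

Under these semantics `*.md` covers only markdown files in the repository root, so a change to `src/notes.md` alone still triggers a review; a pattern such as `**.md` is needed to ignore markdown at any depth.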

Storing the API key. Go to your repository Settings, then Secrets and variables, then Actions. Create a new repository secret named ANTHROPIC_API_KEY with your Anthropic API key. For organisation-wide use, create an organisation-level secret instead. The GitHub Actions encrypted secrets documentation covers rotation, scoping, and access controls.

Configuring review focus areas. The prompt field controls what Claude reviews. Customise it to match your team's priorities. If you work on a financial application, emphasise data integrity and transaction safety. If you work on a public-facing web application, emphasise XSS and injection attacks. If you work on a performance-critical system, emphasise allocation patterns and algorithmic complexity.

Here is an example prompt tuned for a web application with strict security requirements:

prompt: |
  Security-focused review of this pull request.

  CRITICAL checks (block merge if found):
  - SQL injection: any string concatenation in queries
  - XSS: any unescaped user input rendered in HTML
  - Command injection: any user input passed to shell commands
  - Auth bypass: any endpoint missing authentication middleware
  - SSRF: any user-controlled URLs in server-side requests

  IMPORTANT checks (flag for author attention):
  - CSRF: state-changing endpoints without token validation
  - Rate limiting: new endpoints without rate limit middleware
  - Input validation: missing length/format checks on user input
  - Error exposure: stack traces or internal paths in error responses

  Format each finding as a GitHub review comment on the specific line.

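Posting inline comments. The Claude Code Action posts its findings as PR comments. For more granular feedback, Claude can post inline review comments on specific lines of code. This works best when the prompt asks Claude to reference specific file paths and line numbers. Whether comments land inline or in a single summary can depend on the action version and the tools it is allowed to use, so confirm the behaviour on a test PR.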

For more workflow recipes including issue-to-PR automation, test generation, and multi-step pipelines, see the Claude Code GitHub Actions guide.

Fine-Tuning the Automated Review

Once the basic workflow is running, there are several adjustments that improve review quality and control costs.

Separate workflows for different review types. Instead of one workflow that tries to catch everything, create focused workflows that trigger on different file paths.

# .github/workflows/security-review.yml
on:
  pull_request:
    paths:
      - 'src/auth/**'
      - 'src/middleware/**'
      - 'src/api/endpoints/**'

# .github/workflows/performance-review.yml
on:
  pull_request:
    paths:
      - 'src/database/**'
      - 'src/cache/**'
      - 'src/workers/**'

Each workflow has its own prompt tuned for the relevant review category. Security changes get a security-focused review. Database changes get a performance-focused review. This is both more effective and less expensive than running a catch-all review on every change.

Model selection for cost control. Not every review needs the most powerful model. For style checks and straightforward pattern matching, a faster model is sufficient and cheaper.

- uses: anthropics/claude-code-action@v1
  with:
    anthropic_api_key: ${{ secrets.ANTHROPIC_API_KEY }}
    claude_args: "--model claude-sonnet-4-6"
    prompt: "Review for style consistency, naming conventions, and obvious errors only."

Reserve the full model for security reviews and complex architectural changes where deeper reasoning produces meaningfully better results. For a detailed breakdown of model costs and when to use each tier, the cost optimisation guide covers the numbers.

Review only on first push. If your team pushes frequently during development, reviewing every synchronise event generates noise and cost. Change the trigger to review only when the PR is first opened.

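on:
  pull_request:
    types: [opened, reopened]

Developers can re-trigger a review after addressing feedback by closing and reopening the PR, which fires the reopened event listed above (opened alone does not fire on a reopen). A workflow_dispatch trigger is another option, but a manually dispatched run has no pull request context, so the workflow would need to take the PR number as an input.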

Tool and Hook Integration Points

| Integration | Stage | Purpose | Reference |

|---|---|---|---|

| pre-commit framework | Local, pre-commit | Run linters and fast AI checks before push | pre-commit.com |

| Git pre-push hook | Local, pre-push | Pipe diff to Claude for a quick sanity review | Custom shell hook |

| GitHub Actions on PR open | CI, post-push | Full AI review posted as PR comments | Claude Code Action |

| Branch protection required check | CI gate | Block merge until AI review status is reported | GitHub branch protection rules |

| Claude Code Stop hook | Editor, session-end | Auto-run a review skill before handing off work | Local .claude/settings.json hooks |

Data source: code.claude.com hooks documentation, pre-commit.com, and GitHub branch protection docs, as of 2026-04. Permalink: systemprompt.io/guides/ai-code-review-claude#tool-and-hook-integration-points.

What AI Review Actually Catches

Theory is useful, but concrete examples are more convincing. Here is what Claude Code actually finds in real-world code reviews, drawn from months of running automated reviews across several production codebases.

Review-Pattern Matrix

| Pattern | Primary Focus | Typical Trigger | AI-Suited Checks | Human-Required Checks |

|---|---|---|---|---|

| PR review | Mixed-concern diff on feature branch | pull_request: opened, synchronize | Syntax, security patterns, error handling, style | Feature fit, scope creep, business rules |

| Architecture review | Module boundaries, contracts | Design doc PRs, major refactors | Import graph changes, contract drift detection | Trade-offs, roadmap alignment, team skills |

| Security review | Auth, input validation, secrets | Changes under /auth, /api, /crypto | OWASP Top 10 patterns, cred scanning | Threat modelling, trust boundaries |

| Test review | Coverage and assertion quality | New test files or coverage drop | Missing assertions, flaky patterns | Scenario completeness, acceptance criteria |

Data source: Google Engineering Practices: Code Review and Claude Code GitHub Actions, as of 2026-04. Permalink: systemprompt.io/guides/ai-code-review-claude#review-pattern-matrix.

Security Vulnerabilities

SQL injection through string concatenation.

SQL injection remains one of the most common and damaging vulnerability classes. The OWASP SQL Injection guide provides the authoritative reference on detection and prevention patterns that Claude applies during review.

# Claude flags this as Critical
def get_user(username):
    query = f"SELECT * FROM users WHERE username = '{username}'"
    return db.execute(query)

# Claude's suggested fix
def get_user(username):
    query = "SELECT * FROM users WHERE username = %s"
    return db.execute(query, (username,))

Claude catches this pattern reliably, including more subtle variants where the concatenation happens across multiple lines or through string formatting methods that are less obviously dangerous than f-strings.
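To see why the parameterised form matters, here is a self-contained demonstration using Python's built-in sqlite3 module. It is a sketch under assumptions: the table, rows, and function names are invented for illustration, and sqlite3 uses `?` placeholders where the driver in the example above uses `%s`.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two sample users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (username TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def get_user_unsafe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Vulnerable: the input becomes part of the SQL text itself.
    query = f"SELECT username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def get_user_safe(conn: sqlite3.Connection, username: str) -> list[tuple]:
    # Parameterised: the driver passes the input as data, never as SQL.
    query = "SELECT username FROM users WHERE username = ?"
    return conn.execute(query, (username,)).fetchall()


conn = make_db()
payload = "nobody' OR '1'='1"
leaked = get_user_unsafe(conn, payload)  # the OR clause matches every row
safe = get_user_safe(conn, payload)      # no user has that literal name
```

With the hostile input, the unsafe version returns both rows while the safe version returns none, because the whole payload is compared as a single string value.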

Cross-site scripting through unescaped output.

// Claude flags this as Critical
app.get('/search', (req, res) => {
  const query = req.query.q;
  res.send(`<h1>Results for: ${query}</h1>`);
});

// Claude's suggested fix
const escapeHtml = require('escape-html');

app.get('/search', (req, res) => {
  const query = escapeHtml(req.query.q);
  res.send(`<h1>Results for: ${query}</h1>`);
});

Command injection through unsanitised input.

# Claude flags this as Critical
import os

def convert_file(filename):
    os.system(f"convert {filename} output.pdf")

# Claude's suggested fix
import subprocess

def convert_file(filename):
    subprocess.run(["convert", filename, "output.pdf"], check=True)

Claude identifies the difference between shell string execution and argument list execution, and consistently recommends the safe alternative.
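The difference is easy to check without any external tools. In this sketch a child Python process stands in for the `convert` binary and simply reports the argument it received; `echo_argument` is a hypothetical helper written for this illustration.

```python
import subprocess
import sys


def echo_argument(value: str) -> str:
    """Pass `value` as one argv entry to a child process and return what it saw."""
    result = subprocess.run(
        # Argument list, no shell: `value` is never parsed for `;`, `|`, or `$()`.
        [sys.executable, "-c", "import sys; print(sys.argv[1])", value],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.rstrip("\n")


hostile = "photo.png; echo pwned"
received = echo_argument(hostile)
```

The child receives the whole string, semicolon included, as a single argument, whereas a shell would have treated `echo pwned` as a second command. An argument list does not stop a filename that begins with `-` from being read as an option by the target program, so input validation is still worth keeping.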

Logic Errors

Off-by-one in pagination.

# Claude flags this as Warning
def get_page(items, page, page_size):
    start = page * page_size
    end = start + page_size - 1  # Off-by-one: excludes last item
    return items[start:end]

# Claude's suggested fix
def get_page(items, page, page_size):
    start = page * page_size
    end = start + page_size
    return items[start:end]  # Python slicing already excludes end index

Missing null checks on external data.

// Claude flags this as Warning
async function processWebhook(payload: WebhookPayload) {
  const userId = payload.data.user.id; // Crashes if data or user is null
  const account = await getAccount(userId);
  return updateAccount(account.settings.theme); // Crashes if settings is null
}

// Claude's suggested fix
async function processWebhook(payload: WebhookPayload) {
  const userId = payload.data?.user?.id;
  if (!userId) {
    throw new ValidationError('Webhook payload missing user ID');
  }
  const account = await getAccount(userId);
  const theme = account?.settings?.theme ?? 'default';
  return updateAccount(theme);
}

Error handling that swallows failures.

// Claude flags this as Warning
func saveConfig(path string, data []byte) {
	file, _ := os.Create(path) // Error ignored
	file.Write(data)           // Error ignored
	file.Close()               // Error ignored
}

// Claude's suggested fix
func saveConfig(path string, data []byte) error {
	file, err := os.Create(path)
	if err != nil {
		return fmt.Errorf("creating config file: %w", err)
	}
	if _, err := file.Write(data); err != nil {
		file.Close() // Best-effort cleanup; the write error is the one reported
		return fmt.Errorf("writing config data: %w", err)
	}
	// Check Close as well: a deferred Close would silently drop a failed flush
	if err := file.Close(); err != nil {
		return fmt.Errorf("closing config file: %w", err)
	}
	return nil
}

Performance Problems

N+1 query patterns.

# Claude flags this as Warning
def get_orders_with_products(user_id):
    orders = Order.objects.filter(user_id=user_id)  # 1 query
    for order in orders:
        order.products = Product.objects.filter(order_id=order.id)  # N queries
    return orders

# Claude's suggested fix
def get_orders_with_products(user_id):
    orders = Order.objects.filter(user_id=user_id).prefetch_related('products')
    return orders  # 2 queries total regardless of order count

Unnecessary allocations in hot paths.

// Claude flags this as Warning
fn process_records(records: &[Record]) -> Vec<String> {
    let mut results = Vec::new(); // Grows by repeated reallocation as items are pushed
    for record in records {
        let formatted = format!("{}: {}", record.key, record.value);
        results.push(formatted);
    }
    results
}

// Claude's suggested fix
fn process_records(records: &[Record]) -> Vec<String> {
    let mut results = Vec::with_capacity(records.len()); // Pre-allocate once
    for record in records {
        // Each String is still allocated; only the Vec's regrowth is avoided
        let formatted = format!("{}: {}", record.key, record.value);
        results.push(formatted);
    }
    results
}

Consistency Issues

API contract mismatches. Claude reads both the API handler and the API documentation or type definitions. When a handler returns fields that do not match the declared response type, or when a new endpoint does not follow the error response format used by every other endpoint, Claude flags the inconsistency. This is one of the most valuable categories because it requires cross-file analysis that human reviewers often skip.

Naming convention violations. If your codebase uses snake_case for database columns and camelCase for API responses, Claude catches the PR that introduces a kebab-case field. If your project has a convention of prefixing private methods with an underscore, Claude catches the internal helper that was added without the prefix. These are small issues individually, but they accumulate into a codebase that feels inconsistent and is harder to navigate.

What AI Review Misses

Honesty about limitations is important. AI code review is not a magic solution, and treating it as one leads to false confidence that is worse than no review at all.

Business logic requiring domain knowledge. If your application has a rule that discounts cannot exceed 30% for non-enterprise customers, Claude does not know that unless you tell it explicitly. Claude can verify that the discount calculation is mathematically correct, but it cannot verify that the business rule is correctly implemented unless the rule is documented in code comments, CLAUDE.md, or the review skill.

Architectural decisions. Claude can tell you that a function is implemented correctly. It cannot tell you whether that function should exist in the first place, whether it belongs in this module or another, whether the abstraction layer is appropriate, or whether the approach will scale to the requirements you are planning for next quarter. Architectural review requires understanding of the project roadmap, the team's capabilities, and trade-offs that live outside the codebase.

UX implications. A code change might be technically correct but produce a confusing user experience. An error message that says "ERR_INVALID_STATE" instead of "Please save your work before closing" is technically accurate but unhelpful. Claude reviews code, not user experience. It cannot tell you that the loading spinner appears in the wrong place or that the form validation fires too aggressively.

Subtle concurrency bugs. While Claude catches obvious race conditions (shared mutable state without synchronisation, classic TOCTOU patterns), it struggles with subtle concurrency issues that depend on specific timing, scheduler behaviour, or memory model guarantees. If your concurrent code requires reasoning about happens-before relationships or memory ordering, human review remains essential.

Integration behaviour. Claude reviews code in isolation. It does not run the code, does not make HTTP requests to dependent services, and does not verify that the mocked behaviour in tests matches the actual behaviour of external systems. Integration issues that only manifest when components interact in production are outside the scope of static code review, whether by AI or by humans.

Combining AI and Human Review

The optimal workflow is not AI review or human review. It is AI review then human review, with each handling different responsibilities.

Step 1: AI review runs automatically. When a developer opens a PR, the GitHub Actions workflow triggers and Claude posts its review within minutes. The developer reads the AI feedback and addresses any critical or warning findings before requesting human review.

Step 2: Developer addresses mechanical issues. The developer fixes the SQL injection pattern, adds the missing null check, resolves the naming inconsistency. These are concrete, unambiguous fixes that do not require discussion.

Step 3: Human reviewer focuses on higher-order concerns. By the time the human reviewer looks at the PR, the mechanical issues are already resolved. The human reviewer can focus entirely on architecture, business logic correctness, design decisions, test strategy, and whether the overall approach makes sense. This is a fundamentally different (and more valuable) review than the one they would do if they also had to catch every missing null check.

Step 4: Human reviewer verifies AI findings. Occasionally, Claude produces a false positive, flagging something as a security issue when it is actually safe due to context that Claude did not have. The human reviewer overrides these findings with an explanation. Over time, you can feed these overrides back into the review skill to reduce false positives.

This workflow has a practical benefit beyond code quality: it makes human review faster. When reviewers know that mechanical issues have been caught, they spend less time on line-by-line inspection and more time on the aspects of review that require human judgement. Review turnaround times decrease because the most tedious part of the work is already done.

For teams adopting this workflow, the review skill becomes the shared standard. New team members read the skill to understand what the team cares about. The skill evolves as the team learns what matters most for their codebase. Over time, it becomes a living document of the team's quality standards. The Claude Code daily workflows guide covers how to integrate this into your daily development rhythm alongside other Claude Code workflows.

Cost and ROI Analysis

AI code review has a cost, and understanding that cost is important for making an informed decision about adoption.

Per-review costs. The cost of an AI code review depends on the size of the diff and the model used. Here are typical numbers based on running reviews across several production repositories.

Per-PR Cost by Diff Size

| Diff Size | Lines Changed | Model | Approximate Cost |

|---|---|---|---|

| Small | 10-50 | Claude Sonnet | $0.02 to $0.05 |

| Medium | 50-200 | Claude Sonnet | $0.05 to $0.15 |

| Large | 200-500 | Claude Sonnet | $0.15 to $0.30 |

| Very Large | 500+ | Claude Sonnet | $0.30 to $0.50 |

Data source: Anthropic pricing and Claude Code Action docs, as of 2026-04. Permalink: systemprompt.io/guides/ai-code-review-claude#per-pr-cost-by-diff-size.

Using Claude Opus for complex reviews roughly doubles these figures. Using Haiku for style-only checks roughly halves them.

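Monthly cost for a typical team. A team of 8 developers merging an average of 40 PRs per week (about 160 per month), with an average diff size of 150 lines, falls in the Medium row of the table: $0.05 to $0.15 per review run. One run per PR comes to roughly $8 to $24 per month. Assuming about two runs per PR, which is typical when the workflow re-runs on each push, the team spends approximately $16 to $48 per month on AI code review. That is less than a single hour of senior developer time.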
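The arithmetic behind the monthly estimate can be made explicit. This is a back-of-the-envelope sketch: the per-run costs are the Medium row of the table, the four-week month matches the 160 reviews used later in this section, and the two-runs-per-PR figure is my inference about how the $16 to $48 range arises, not a measured value.

```python
def monthly_review_cost(
    prs_per_week: int,
    cost_low: float,
    cost_high: float,
    runs_per_pr: float = 1.0,
    weeks_per_month: int = 4,
) -> tuple[float, float]:
    """Return the (low, high) monthly cost in dollars for automated reviews."""
    runs = prs_per_week * weeks_per_month * runs_per_pr
    return round(runs * cost_low, 2), round(runs * cost_high, 2)


# 40 PRs per week at the Medium rate of $0.05 to $0.15 per run
one_run_per_pr = monthly_review_cost(40, 0.05, 0.15)
two_runs_per_pr = monthly_review_cost(40, 0.05, 0.15, runs_per_pr=2)
```

One run per PR gives $8 to $24 per month, and two runs per PR gives $16 to $48. Plugging in your own PR volume and push frequency is more useful than either figure.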

ROI calculation. The value side is harder to quantify precisely, but the components are clear.

Time saved on mechanical review: if AI review handles 60% of the mechanical findings that human reviewers would otherwise need to catch, and the average human review takes 30 minutes, each review saves roughly 10 to 15 minutes. For 160 reviews per month, that is about 27 to 40 hours of senior developer time redirected from mechanical review to higher-value work.
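The same estimate as a small calculation, in case you want to substitute your own numbers. The 10 to 15 minutes per review and the 160 reviews per month come from the paragraph above; the hourly rate is a purely hypothetical placeholder.

```python
def hours_saved(
    reviews_per_month: int,
    minutes_low: float,
    minutes_high: float,
) -> tuple[float, float]:
    """Return the (low, high) reviewer hours saved per month."""
    return (
        reviews_per_month * minutes_low / 60,
        reviews_per_month * minutes_high / 60,
    )


def value_of_hours(hours: float, hourly_rate: float) -> float:
    """Convert saved hours to money at a given (assumed) hourly rate."""
    return hours * hourly_rate


low_hours, high_hours = hours_saved(160, 10, 15)

HYPOTHETICAL_HOURLY_RATE = 100.0  # placeholder; use your team's real figure
low_value = value_of_hours(low_hours, HYPOTHETICAL_HOURLY_RATE)
```

Even at the low end, the hours saved come out far larger than the monthly review cost estimated earlier, which is why the cost side rarely decides the adoption question.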

Bugs caught before merge: every bug caught in code review rather than production saves debugging time, hotfix time, and incident response time. A single production security incident can cost days of engineering time and reputational damage. AI review catching even one critical security issue per quarter pays for the entire annual cost of the tool.

Faster review turnaround: when reviews are faster, developers spend less time waiting and less time context-switching back to PRs they opened days ago. This is difficult to quantify but consistently reported as one of the most valuable benefits by teams running automated reviews.

Cost controls. If cost is a concern, apply the strategies from the workflow configuration section: filter by file path, review only on PR open (not every push), use Sonnet instead of Opus for routine reviews, and set concurrency limits to avoid redundant runs. These controls can reduce monthly costs by 40 to 60% with minimal impact on review quality.

For a full breakdown of Claude Code pricing across all use cases including reviews, the cost optimisation guide covers budgeting strategies, model selection, and usage monitoring.

The Lesson

The most important lesson from six months of running AI code review is that the review is only as good as the instructions you give it. A vague prompt like "review this code" produces vague, generic feedback that developers learn to ignore. A specific prompt that describes your security model, your performance requirements, your coding conventions, and your output format produces findings that developers actually act on.

The second lesson is that AI review changes team culture in unexpected ways. When every PR gets a consistent review within minutes, developers start writing cleaner code before they open the PR. They run the pipe-based review locally before pushing. They address the easy issues preemptively because they know the bot will flag them anyway. The feedback loop tightens, and code quality improves incrementally.

The third lesson is about trust calibration. When AI review is new, developers scrutinise every finding. As they gain experience, they learn which categories of findings are almost always correct (SQL injection, resource leaks, null checks) and which sometimes produce false positives (performance suggestions, naming conventions). This calibration takes a few weeks. Teams that skip this calibration phase and either blindly trust or blindly dismiss AI findings miss the value entirely.

Conclusion

AI code review with Claude Code is not a replacement for human code review. It is a layer that handles the mechanical, pattern-matching aspects of review so that human reviewers can focus on the things that actually require human intelligence: architecture, business logic, design trade-offs, and mentorship.

The setup is straightforward. Start with interactive reviews on your local machine to understand what Claude catches and how it communicates findings. Move to pipe-based reviews for speed. Create a review skill that encodes your team's standards. Then deploy the GitHub Actions workflow to make AI review automatic and universal.

The cost is negligible for most teams. The ROI is immediate. The ongoing maintenance is minimal, mostly updating your review skill as your codebase and standards evolve.

If you are already using Claude Code for daily development, adding code review is a natural extension. If you are new to Claude Code entirely, code review is one of the fastest ways to see concrete value from the tool. Either way, start with a single repository, run it for two weeks, and measure what it catches. The results speak for themselves.

For next steps, the GitHub Actions recipes guide covers additional CI/CD workflows beyond review, including issue-to-PR automation and test generation. The daily workflows guide covers how to integrate Claude Code into your broader development routine. And the cost optimisation guide helps you budget and control spending as you scale AI review across more repositories.