
In April 2026, Anthropic opened Claude Cowork to third-party platforms. The announcement sat inside three support articles, short and technical. Their combined effect is large. For the first time, an enterprise AI client of Cowork's class accepts a documented, customer-operated control plane for inference, identity, audit, and plugin supply chain. The Cowork client a developer uses has not changed. What lives behind the /v1/messages endpoint can now be yours. Your gateway, your auth, your plugin manifest, your audit table. Claude Cowork plugins stop being a per-user install problem and start being a fleet-level supply-chain decision your security team actually controls.

This page is written for the people who have to decide whether to move on this. A CTO drawing up the next three years of AI architecture. A CISO who has blocked Cowork rollout because there was no revocation seam. A Chief Architect who has been told Cowork "isn't an option" for the regulated business unit. A head of procurement sitting with an RFP from the compliance officer that now asks about agentic AI specifically. We cover why a self-governed AI substrate matters at this moment, what Anthropic actually shipped, where the landscape will sit in 2030, how the five things the enterprise now owns fit together, how to roll out to a thousand users without a bad week, and what a Fortune 500 RFP actually wants to see.

Why Self-Governed AI Infrastructure Matters Now

Enterprise AI rolled out over the last five years with a hole in the middle. The hole is what this page is actually about.

Between 2021 and 2025, large organisations piloted AI tools on per-seat licences and, for the most part, failed to scale them. The pattern is consistent enough that it has a shape. A company buys a seat licence for a vendor product. Five hundred engineers use it for six months. Cost per seat is palatable. Productivity is anecdotally positive. Then procurement audits, security reviews, and compliance mapping hit simultaneously, and the pilot either stays frozen at five hundred seats or gets shut down. The reasons are structural, not tactical.

Revocation

A vendor-hosted client authenticates through the vendor's identity surface. Revoking a single user's AI access means removing them from whatever account abstraction the vendor sells. That works for one user. It does not work when the revocation trigger is a leaver who also needs to keep their workstation running through a handover, or an employee whose role changed and should retain email access but not AI access, or an incident response requirement that a specific developer's AI usage is paused for two hours while a forensic question is answered. Enterprise HR systems already express these distinctions. Vendor SSO integrations flatten them.

A self-hosted gateway breaks the flattening. Revocation becomes a credential operation on the gateway. It does not touch SSO. The user retains mail, chat, code repositories, and deploy rights. They lose the AI gateway JWT, nothing else. That one change makes a long list of HR and incident-response procedures usable for AI access where they were not before.

Data residency

Every prompt that leaves a developer's laptop is data. A large fraction of it is data that was in scope for the last SOC 2 report, or the last ISO 27001 audit, or the last HIPAA security assessment. Vendor data-processing addenda describe where the content sits at rest under their control. That description is either acceptable or not to the compliance team, and if it is not, there is no configuration that makes it acceptable. The prompt is leaving the perimeter. A self-hosted gateway changes that conversation: the prompt leaves the perimeter only when routing policy sends it to an upstream the compliance team has approved, and not at all when inference runs inside the perimeter.

For enterprises operating across multiple jurisdictions, the problem compounds. A German business unit has obligations under GDPR plus the German Federal Data Protection Act plus the sectoral rules for its industry. A US subsidiary has obligations under state privacy laws that vary from state to state. A UK subsidiary has its own post-Brexit variants. Pointing every user's AI calls at a single hosted endpoint forces the compliance team to argue all of these against one vendor contract. Pointing the gateway at a sovereign-cloud upstream per jurisdiction makes the argument local and auditable.

Cost visibility

Seat-based AI pricing presented to finance as a per-developer subscription implies a per-developer spend that can be budgeted. That implication fails in two directions. Developers who use AI heavily consume ten times the tokens of developers who use it lightly, but both pay the same seat fee. Departments that do cost allocation across engineering teams want to attribute AI spend the way they attribute cloud spend, which is usage-based. Vendor invoices do not decompose that way. The finance team gets one number. They cannot chart it. They cannot charge back on it. They cannot forecast it against next year's headcount plan. They stop approving expansion.

With a gateway-based architecture, cost visibility arrives in the audit table as an engineered field rather than through a separate invoice process. Finance can run any decomposition they want on the cost_micro column. The per-developer, per-department, per-provider, and per-model breakdowns are a single query each. The numbers tie back to the provider's invoice line for reconciliation. The same table answers "what is our AI cost this quarter" and "why did our AI cost rise in March". Most bundled vendor offerings have never been able to answer the second question with useful granularity.

Audit and traceability

An agent that invokes tools on a user's behalf is doing things the user did not type. An auditor asks what the agent did, and the honest answer before this opening was "we have some of it, in the vendor's dashboard, for as long as the retention window lasts". That answer does not survive a framework like the EU AI Act, which requires automatically generated logs for traceability, or HIPAA §164.312(b), which requires the covered entity to record and examine activity. Vendor dashboards ship per-seat audit in per-vendor schemas with per-vendor retention. They were not designed to underwrite a Fortune 500 compliance posture. They were designed to sell seats.

The NIST AI Risk Management Framework calls out the need for traceability of AI-assisted decisions across the model, the data, the user, and the deployment context. That traceability lives in a schema, not a dashboard. The trace_id column in audit_events is the backbone of that schema. Every tool call, every MCP execution, and every model response in the same conversation shares the trace ID. A single join reconstructs the decision chain. No vendor dashboard produces this view because no vendor owns the full chain. The enterprise does, and only because the gateway belongs to the enterprise.

Governance at the request path

Governance sits underneath all four problems above. An organisation running AI without a policy gate in the request path is running AI without governance. A gate after the fact is post-hoc analysis, not enforcement. The gate has to live where the request passes, and the request passes through whatever endpoint the client is pointed at. While every enterprise AI client was pointed at the vendor's endpoint, the gate either lived in the vendor's product (in which case the enterprise did not own it) or did not exist (in which case nothing was enforced).

The enterprise had two options before April 2026. Wait for the vendor to ship enforcement the enterprise could configure. Build governance on top of the vendor's surface and hope the surface held still. Neither option worked at enterprise scale. Vendors ship features on their timelines, not an enterprise's compliance calendar. Building governance on a moving vendor surface created a maintenance burden that never stopped growing because the surface never stopped moving. Staff engineers at companies that tried this route spent meaningful calendar time in 2024 and 2025 rewriting internal AI tooling to accommodate new Anthropic beta headers, new tool-use semantics, new plugin architectures, new extended-thinking block shapes. The enterprise AI team became the enterprise AI integration team, and the integrations were never finished.

What Anthropic opened in April 2026 is the first documented seam that breaks this pattern. The client is the vendor's. The inference can be the vendor's or someone else's. Everything between them is the enterprise's. The endpoint, the auth, the audit, the policy, the supply chain all live on infrastructure the enterprise operates, under the enterprise's tooling discipline, on the enterprise's timeline. The hole in the middle closes. The pilots that were stuck can scale.

What changes for a Fortune 500 security posture

A CISO evaluating AI tooling in 2026 is holding a harder brief than a CISO in 2023. Three pressures compound. The first is regulation. The EU AI Act brought its prohibited-practice rules into effect in February 2025 and its general-purpose AI rules in August 2025, and its high-risk rules are scheduled to apply from August 2026. US state-level AI regulation is moving through Colorado, Illinois, and New York, with sectoral rules layering on top. The NAIC model bulletin on insurers' use of AI, the OCC's model risk management guidance, and the UK FCA's work on AI in financial services all shape how supervisors examine AI use. Sector regulators have started asking about agentic AI specifically, because the agent is doing things between the user's prompt and the user's result.

None of those frameworks were written against Claude Cowork. They were written against AI systems generally. A compliance officer mapping AI frameworks onto internal controls needs a substrate where the controls can attach. Self-hosted inference with JWT identity propagation and a typed audit schema provides that substrate. A vendor-hosted AI client with a dashboard does not.

The second pressure is insurance. Cyber insurance underwriters have started asking specific questions about AI use during renewal and quote cycles. "What AI tools are in use, by whom, with what oversight" is a question with two answers. Either the answer is an audit query the risk team can run in front of the underwriter, or the answer is a set of vendor dashboards with gaps the underwriter will price into the premium. Premiums are not theoretical. A typical Fortune 500 cyber programme is ten to thirty million dollars in annual premium, and AI visibility is actively being priced.

The third is procurement. A CTO buying AI at scale in 2026 is buying into the next five to seven years of an architecture. Any architecture whose basic shape in 2024 was "single vendor, hosted client, per-seat invoice" has aged poorly, because the cost curve across providers has widened faster than any one vendor's roadmap predicted. Once regional pricing, committed-use discounts, and batch or provisioned-throughput terms are applied, a Sonnet-class call can cost meaningfully different amounts at Bedrock, at Vertex, and at Anthropic direct for the same prompt on the same day. The cheapest lane is not always the one the vendor's client defaults to. Locking the routing decision into the vendor's client was a reasonable bet when the providers were closely matched on price. It is no longer a reasonable bet.

Self-governed AI infrastructure solves all three pressures with the same architecture. A JWT-backed audit log with per-user, per-tool, per-policy-version rows is exactly what regulators, insurers, and risk teams want to see. Routing inference through a gateway that chooses upstream per call is exactly what finance wants to see. And a signed-manifest plugin supply chain with per-user role scoping is exactly what security wants to see when the question is "what does our AI actually do".

What Anthropic Actually Shipped

The April 2026 opening is three extension points, five managed-preference keys, and a credential-helper contract. Each of the three documents Anthropic published describes one slice. Read together they describe a complete architecture.

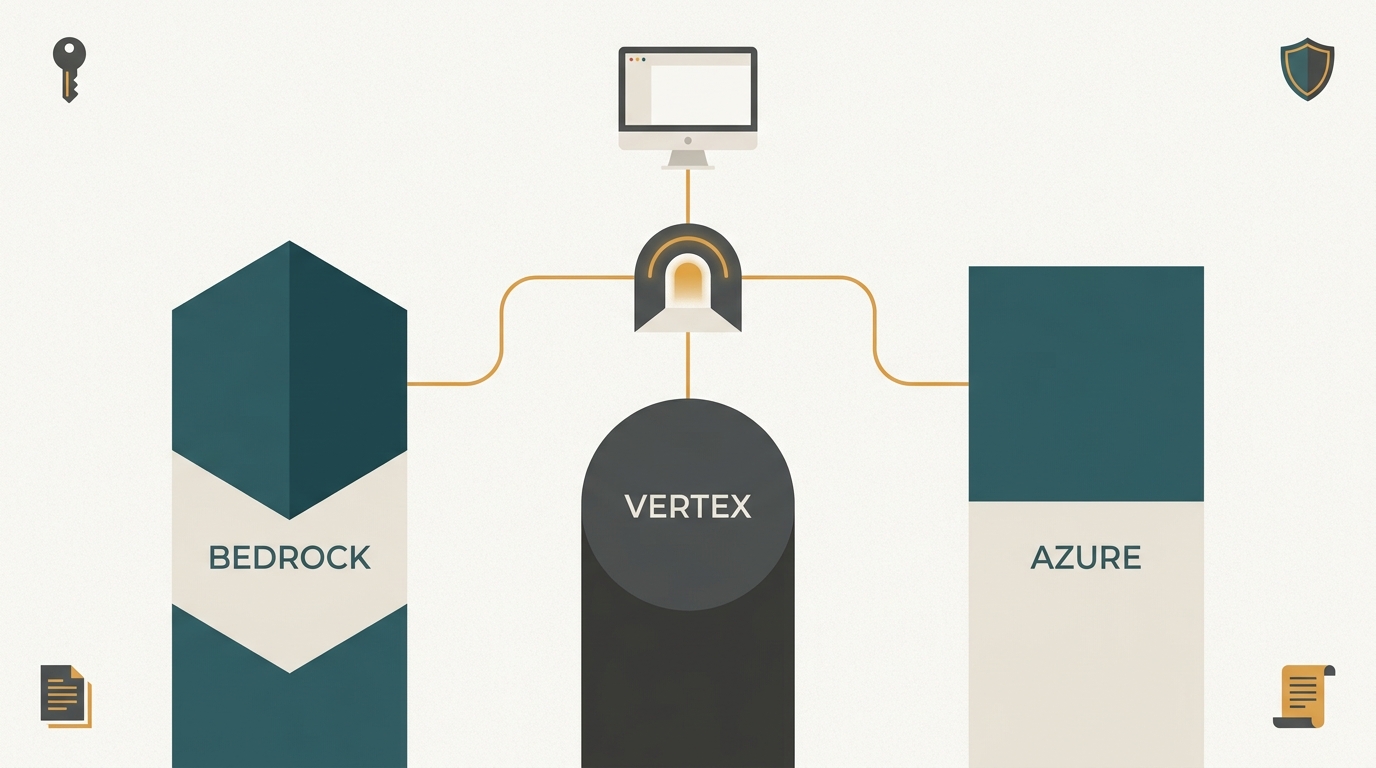

The /v1/messages gateway

Set inferenceProvider to gateway in managed preferences, set inferenceGatewayBaseUrl to a URL the fleet can reach, and Cowork sends every chat turn, every tool call, and every extended-thinking block to that URL. The wire format is the Anthropic Messages API. Anything that speaks the Messages shape on the server side works. Anthropic direct works. Amazon Bedrock works because Bedrock's Claude models accept a Messages-shaped request body; the gateway handles Bedrock's authentication and streaming framing. A gateway that accepts Messages requests on the way in, translates them to OpenAI Chat Completions on the way out, and translates responses back can point Cowork at Azure OpenAI, Google Vertex, or a self-hosted vLLM cluster serving Llama or Qwen. The client does not know or care which upstream served a given call. It renders the streamed response the same way it renders an api.anthropic.com response today.
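The conversion a gateway performs for an OpenAI-compatible upstream can be sketched for the simplest case. The code below is a minimal, hypothetical translation of a text-only Messages request body into a Chat Completions body. Tool use, images, and thinking blocks are deliberately left out, and the upstream model name is whatever the routing layer chose. It is not the code of any shipping gateway.

```python
def _text_of(content) -> str:
    """Messages content is a string or a list of typed blocks; keep only text blocks."""
    if isinstance(content, str):
        return content
    return "".join(b.get("text", "") for b in content if b.get("type") == "text")


def messages_to_chat_completions(req: dict, upstream_model: str) -> dict:
    """Translate a minimal Anthropic Messages request into an OpenAI Chat Completions request.

    Sketch only: covers the system prompt, user/assistant text turns, max_tokens,
    temperature and stream. Tool calls, images and thinking blocks are out of scope.
    """
    chat_messages = []
    if req.get("system"):
        # Messages carries the system prompt as a top-level field; Chat Completions
        # expects it as the first message with role "system".
        chat_messages.append({"role": "system", "content": _text_of(req["system"])})
    for msg in req["messages"]:
        chat_messages.append({"role": msg["role"], "content": _text_of(msg["content"])})
    out = {
        "model": upstream_model,
        "messages": chat_messages,
        "max_tokens": req["max_tokens"],
        "stream": req.get("stream", False),
    }
    if "temperature" in req:
        out["temperature"] = req["temperature"]
    return out
```

The response path needs the mirror-image translation, including turning streamed Chat Completions chunks back into Messages stream events. That is where most of the real engineering effort goes.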

Inbound authentication on that gateway is enterprise-owned. Cowork attaches a bearer token the credential helper minted, plus the seven identity headers Anthropic defines for a third-party deployment. The gateway verifies the token, applies whatever governance the enterprise runs (scope checks, secret scanning, blocklists, rate limits), resolves a route, forwards to the upstream, and streams the response. The upstream never sees the identity headers because the gateway strips them at the outbound boundary. That separation is what lets the enterprise share a single Bedrock or Vertex account across thousands of users while preserving per-user auditability inside the perimeter.
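The split at the outbound boundary can be sketched as a simple header filter. The seven header names below are placeholders invented for illustration; a real gateway would use the names in Anthropic's third-party deployment documentation. The filter also drops the enterprise JWT, because the upstream receives the gateway's own credential instead.

```python
# Hypothetical names: substitute the seven identity headers Anthropic actually documents.
IDENTITY_HEADERS = frozenset({
    "x-cowork-user-id", "x-cowork-session-id", "x-cowork-trace-id", "x-cowork-client-id",
    "x-cowork-tenant-id", "x-cowork-policy-version", "x-cowork-call-source",
})
# Never forwarded: the enterprise JWT (the gateway attaches the upstream's own credential)
# and headers the HTTP client recomputes for the outbound request.
NOT_FORWARDED = frozenset({"authorization", "host", "content-length", "connection"})


def split_inbound_headers(inbound: dict) -> tuple[dict, dict]:
    """Return (identity headers kept for the audit row, headers safe to send upstream)."""
    identity, forward = {}, {}
    for name, value in inbound.items():
        key = name.lower()
        if key in IDENTITY_HEADERS:
            identity[key] = value
        elif key not in NOT_FORWARDED:
            forward[name] = value
    return identity, forward
```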

The managed MCP allowlist

Cowork reads a JSON file from a fixed path inside the org-plugins mount to decide which MCP servers a user may invoke. Servers that appear in the file are visible in the UI and callable at runtime. Servers that do not appear are hidden and refused. The file is signed by the same Ed25519 key that signs the manifest and is replaced atomically on every sync, so an employee cannot add an MCP server to the allowlist without central approval. For a regulated environment that has been blocking agentic tool use because the agent can reach arbitrary MCP servers, the managed allowlist is the primitive that unblocks rollout. You can ship agents that call exactly the MCP servers an auditor has approved, and nothing else.
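The two allowlist behaviours, hidden in the UI and refused at runtime, can be modelled in a few lines. This is a hypothetical sketch of the semantics, not Cowork's implementation. It assumes the file holds a managed_mcp_servers list of objects with a name field, which reuses the manifest's array name; the real file layout may differ.

```python
import json


class McpRefused(Exception):
    """Raised when a call targets a server that is not on the managed allowlist."""


def load_allowlist(raw: str) -> frozenset:
    """Parse the allowlist; assumes {"managed_mcp_servers": [{"name": ...}, ...]}."""
    doc = json.loads(raw)
    return frozenset(entry["name"] for entry in doc.get("managed_mcp_servers", []))


def visible_servers(configured: list, allowlist: frozenset) -> list:
    """Servers absent from the allowlist never appear in the UI."""
    return [name for name in configured if name in allowlist]


def check_invocation(server: str, allowlist: frozenset) -> None:
    """Runtime gate: refuse any call to a server that is not allowlisted."""
    if server not in allowlist:
        raise McpRefused(f"MCP server {server!r} is not on the managed allowlist")
```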

The org-plugins mount

A directory at a system-scope path that Cowork scans on launch and on refresh. Any plugin inside the directory appears in the slash menu, the sidebar, and the agent runtime. The path differs by platform. On macOS the mount sits at /Library/Application Support/Claude/org-plugins/. On Windows it sits at C:\ProgramData\Claude\org-plugins\. The credential-helper binary owns the directory. End users have no write access. Plugin files land through the signed-manifest sync, not through individual installs. When the manifest publishes a new version, the next sync on each device updates the files atomically. When it publishes a revocation, the next sync removes the revoked artefact. The user never ran an installer and does not need to run an uninstaller.

The five managed-preference keys

Cowork reads the same five keys on every platform. The transport differs. On macOS they live under the com.anthropic.claudefordesktop managed-preference domain, pushed by a .mobileconfig profile. On Windows they live under HKEY_CURRENT_USER\SOFTWARE\Policies\Claude, pushed by Intune Custom OMA-URI or Group Policy ADMX. On Linux they live in CLAUDE_* environment variables written by Ansible, Puppet, or Nix. The key names are fixed. inferenceProvider switches routing from api.anthropic.com to the gateway. inferenceGatewayBaseUrl names the gateway host, which must be HTTPS unless it resolves to 127.0.0.1. inferenceCredentialHelper is the absolute path of the binary Cowork invokes on every call to mint a JWT. inferenceCredentialHelperTtlSec is how long a minted JWT stays cached, advisory on the client, capped by whatever the gateway returns in its response. inferenceGatewayAuthScheme is the HTTP auth scheme used on outbound calls, bearer today.
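The Linux transport can be sketched as a small loader that a configuration-management test suite might run against a rendered environment. The source only says the keys arrive as CLAUDE_* variables, so the exact variable names below are hypothetical. The validation rules come from the paragraph above: HTTPS unless the host is 127.0.0.1, an absolute helper path, and the bearer scheme. For simplicity the sketch compares the hostname literally instead of resolving it.

```python
import os
from urllib.parse import urlsplit

# Hypothetical mapping from the five documented key names to Linux environment variables.
ENV_NAMES = {
    "inferenceProvider": "CLAUDE_INFERENCE_PROVIDER",
    "inferenceGatewayBaseUrl": "CLAUDE_INFERENCE_GATEWAY_BASE_URL",
    "inferenceCredentialHelper": "CLAUDE_INFERENCE_CREDENTIAL_HELPER",
    "inferenceCredentialHelperTtlSec": "CLAUDE_INFERENCE_CREDENTIAL_HELPER_TTL_SEC",
    "inferenceGatewayAuthScheme": "CLAUDE_INFERENCE_GATEWAY_AUTH_SCHEME",
}


def load_managed_prefs(env=os.environ) -> dict:
    """Read the five keys and apply the documented constraints when in gateway mode."""
    prefs = {key: env.get(var) for key, var in ENV_NAMES.items()}
    if prefs["inferenceProvider"] != "gateway":
        return prefs  # not in gateway mode; nothing further to check
    url = urlsplit(prefs["inferenceGatewayBaseUrl"] or "")
    # HTTPS is required unless the gateway is on loopback (literal comparison in this sketch).
    if url.scheme != "https" and url.hostname != "127.0.0.1":
        raise ValueError("inferenceGatewayBaseUrl must be HTTPS unless it is 127.0.0.1")
    if not os.path.isabs(prefs["inferenceCredentialHelper"] or ""):
        raise ValueError("inferenceCredentialHelper must be an absolute path")
    prefs["inferenceCredentialHelperTtlSec"] = int(prefs["inferenceCredentialHelperTtlSec"] or 0)
    if (prefs["inferenceGatewayAuthScheme"] or "bearer").lower() != "bearer":
        raise ValueError("only the bearer auth scheme is documented today")
    return prefs
```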

Those five keys decide where inference goes and how the client authenticates. They live outside the Cowork UI, in the OS-level policy store, which is the detail that matters. An employee cannot turn the gateway off from inside Cowork. On macOS and Windows, an attacker with user-scope privileges on an endpoint cannot change them either. On Linux, where the keys arrive as environment variables, that guarantee depends on how tightly configuration management controls the environment Cowork launches with. The governance decision sits where your endpoint team already operates, not inside an application the user controls.

The credential-helper contract

inferenceCredentialHelper names a subprocess Cowork spawns on every /v1/messages call. The contract is uncompromising. The binary reads arguments on stdin, does whatever auth dance it needs to do, and prints exactly one JSON line to stdout. One line. Not two, not zero, not a log banner before the JSON, not a trailing newline followed by diagnostic text. Any deviation breaks the parser inside Cowork and the UI surfaces "credential helper failed". Diagnostic output goes to stderr.

The JSON carries three fields. token is the bearer token the gateway will verify. ttl is the seconds remaining before the token expires. headers is a map of seven identity headers Cowork attaches to the outbound call verbatim. Those seven cover user identity, session identity, per-call trace identity, client identity, tenant identity, policy version, and call source. They are what ties a prompt in a conversation window to a row in the audit log, to a tool call, to an MCP exec, and eventually to a microdollar cost attributable to one developer in one department on one laptop.
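Because the contract is strict, it is worth testing a helper build against it before it reaches the fleet. The check below is a hypothetical smoke test that a platform team might run in CI; it is not Cowork's own parser. It rejects anything other than exactly one JSON line carrying a non-empty token, a positive integer ttl, and a seven-entry headers map.

```python
import json

EXPECTED_HEADER_COUNT = 7  # the seven identity headers described above


def parse_helper_output(stdout: bytes) -> dict:
    """Enforce the helper contract: one JSON line with token, ttl and headers."""
    lines = stdout.decode("utf-8").splitlines()
    if len(lines) != 1:
        raise ValueError(f"credential helper must print exactly one line, got {len(lines)}")
    payload = json.loads(lines[0])
    if not isinstance(payload.get("token"), str) or not payload["token"]:
        raise ValueError("missing token")
    if not isinstance(payload.get("ttl"), int) or payload["ttl"] <= 0:
        raise ValueError("ttl must be a positive integer number of seconds")
    headers = payload.get("headers")
    if not isinstance(headers, dict) or len(headers) != EXPECTED_HEADER_COUNT:
        raise ValueError("headers must map exactly seven identity headers")
    return payload
```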

Caching lives inside the helper, not inside Cowork. A helper that finds a valid JWT inside its TTL window returns the cached JSON without making a network call. When the TTL expires, the helper walks whatever authentication chain it supports (mTLS first for device-bound enterprise deployments, session cookie second for IdP-backed rollouts, personal access token third for pilots or small teams) and mints a fresh token against the gateway. Cache invalidation reduces to deleting the cache file. The helper re-authenticates on the next call.
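The cache-then-chain behaviour can be sketched as follows. Each tier is a hypothetical callable that returns the helper output (token, ttl, headers) or None. The cache file layout is also an assumption, and a real helper would create that file with 0600 permissions. When the helper serves from cache, it recomputes ttl so that the value still means "seconds remaining".

```python
import json
import os
import sys
import time


def read_cache(path: str, now: float):
    """Return the cached output with a recomputed ttl, or None if missing or expired."""
    try:
        with open(path) as f:
            cached = json.load(f)
    except (OSError, ValueError):
        return None  # no cache file (e.g. deleted to force re-auth) or unreadable
    remaining = int(cached.get("expires_at", 0) - now)
    if remaining <= 0:
        return None
    return {**cached["output"], "ttl": remaining}


def mint(path: str, tiers, now: float) -> dict:
    """Try each (name, attempt) tier in order, strongest first; cache the first success."""
    for name, attempt in tiers:
        output = attempt()  # hypothetical: returns {"token", "ttl", "headers"} or None
        if output:
            os.makedirs(os.path.dirname(path), exist_ok=True)
            with open(path, "w") as f:
                json.dump({"expires_at": now + output["ttl"], "output": output}, f)
            return output
        print(f"auth tier {name} unavailable, trying next", file=sys.stderr)
    raise RuntimeError("no authentication tier succeeded")


def helper_main(path: str, tiers) -> None:
    now = time.time()
    output = read_cache(path, now) or mint(path, tiers, now)
    # stdout carries exactly one JSON line; diagnostics above went to stderr.
    sys.stdout.write(json.dumps(output) + "\n")
```

Invalidation is simply `os.remove(path)`: the next call finds no cache and walks the tiers again.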

The signed manifest

Plugins, skills, agents, and the managed MCP allowlist all ship through one JSON document served at /v1/cowork/manifest and signed with an Ed25519 private key held by the enterprise. RFC 8032 specifies the algorithm. The public key is pinned on every client at install time. Signature verification runs before the binary touches the filesystem. A manifest that fails verification aborts the sync before a single byte lands in the org-plugins mount. A manifest whose plugin files do not match their declared SHA-256 hashes aborts the sync at the staging step, again without touching the live mount.
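The verification order can be sketched in two steps. First the signature is checked over the exact bytes served, and the JSON is parsed only after that succeeds. Then every plugin file is checked against its pinned hash. The signature step uses the third-party `cryptography` package and assumes the signature arrives as a detached raw 64-byte value next to the manifest. How Anthropic's client actually receives the signature is not specified in the source.

```python
import hashlib
import json


class ManifestError(Exception):
    """Any verification failure: the sync aborts before touching the org-plugins mount."""


def verify_manifest(manifest_bytes: bytes, signature: bytes, pinned_pubkey: bytes) -> dict:
    """Verify the Ed25519 signature over the raw bytes, and only then parse the JSON.

    Requires the `cryptography` package; pinned_pubkey is the raw 32-byte public key.
    """
    from cryptography.exceptions import InvalidSignature
    from cryptography.hazmat.primitives.asymmetric.ed25519 import Ed25519PublicKey

    try:
        Ed25519PublicKey.from_public_bytes(pinned_pubkey).verify(signature, manifest_bytes)
    except InvalidSignature as exc:
        raise ManifestError("manifest signature invalid; aborting sync") from exc
    return json.loads(manifest_bytes)


def check_file_hashes(declared: dict, fetched: dict) -> None:
    """Compare each fetched plugin file with the SHA-256 hex digest the manifest pins."""
    for path, expected_hex in declared.items():
        data = fetched.get(path)
        if data is None:
            raise ManifestError(f"missing file {path}")
        if hashlib.sha256(data).hexdigest() != expected_hex:
            raise ManifestError(f"hash mismatch for {path}")
```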

The manifest is per-user. The gateway resolves it server-side from the JWT claim set, so a finance engineer and a platform engineer at the same company can receive materially different plugin catalogues from the same manifest publish. The document itself enumerates what each user sees. A plugins array with file-level SHA-256 pinning. A skills array with instruction bodies. An agents array binding skills to models and MCP servers. A managed_mcp_servers array that becomes the allowlist. A revocations array that acts as the kill switch for artefacts that turn out to carry a bug or a leaked credential.
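Per-user resolution on the server can be sketched as a filter over the full catalogue. The `audience` field on each entry and the `roles` JWT claim are hypothetical stand-ins for whatever entitlement model an enterprise actually uses. Revocations pass through unfiltered so that every client applies them.

```python
def resolve_for_user(manifest: dict, claims: dict) -> dict:
    """Filter the full catalogue down to what one user's JWT claims entitle them to.

    Assumes each entry carries an "audience" list of role names ("*" means everyone)
    and that the JWT carries a "roles" claim; both field names are hypothetical.
    """
    roles = set(claims.get("roles", []))

    def visible(entry: dict) -> bool:
        audience = set(entry.get("audience", []))
        return "*" in audience or bool(audience & roles)

    # Revocations are never filtered: every client must apply every kill switch.
    per_user = {"revocations": manifest.get("revocations", [])}
    for section in ("plugins", "skills", "agents", "managed_mcp_servers"):
        per_user[section] = [e for e in manifest.get(section, []) if visible(e)]
    return per_user
```

Because clients verify the document they receive, the gateway in this model would sign each per-user document it serves, not only the master catalogue.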

That is the surface. Three extension points, five keys, one credential helper, one signed manifest. Between them, they describe how an enterprise takes full ownership of what used to be a vendor service.

The Next Five Years

Any architecture decision an enterprise makes in 2026 has to survive 2030. The interesting exercise is to write down what 2030 probably looks like and then ask which architectures age well against that forecast. I cannot prove the forecast, but I can describe where the observable signals point.

The model layer keeps broadening

Opus-class, Sonnet-class, and Haiku-class capability is available in 2026 from multiple providers. The cost curve has widened. The quality gap for routine inference has narrowed. Nothing in the observable signals suggests that pattern will reverse. Specialised frontier capability (whatever capability a single lab briefly holds alone) has tended to resolve into a cross-provider tier within eighteen to twenty-four months of first launch. In 2028 there will be a model tier that only one lab serves, and in 2030 that tier will be three providers wide. The exact model names do not matter. The shape of the distribution does.

An enterprise building in 2026 around one hosted endpoint is implicitly betting on three things: that the lab it picked will stay at the frontier for the contract duration, that the cost curve will not move faster than the lab's own price schedule, and that no other lab will ship an integration surface that makes switching attractive. Those three assumptions were safer in 2023 than they are today. By 2030 they will be unsafe enough that security architects who signed off on them in 2026 will be explaining them to their successors.

An enterprise building in 2026 around a gateway that picks the upstream per call is betting something different. That the request shape will remain close to Anthropic's Messages wire format, that upstream providers will continue to speak that shape or be accessible through a conversion, and that routing decisions will compound over time as data about what each call actually costs builds up in the enterprise's own database. Those three are much safer bets. The Messages shape is broadly compatible across providers today. Conversion layers for OpenAI-compatible upstreams are shipping. The compounding value of per-call routing data is visible in any cloud workload that has been through two hardware refresh cycles.

Agent workloads outgrow human-latency workloads

A human talking to Cowork produces a few calls per minute. An agent in a loop produces a few calls per second. Over five years, the ratio of agent calls to human calls in a typical engineering organisation moves from roughly one-to-one to roughly ten-to-one. Specific numbers are speculative, but the direction is not. Scheduled agents, long-running background tasks, CI integrations, code-review assistants, and customer-support copilots all push call volume up without adding new seats.

Seat-based AI pricing loses coherence at this point. The seat is not the unit the cost scales on. The unit is the agent, and the agent runs while the seat is offline. Enterprises will push for usage-based billing, and vendors will resist, and the resolution will be that large enterprises negotiate capacity contracts that look a lot like Bedrock commitments today. A gateway architecture absorbs this transition without an architecture change. Seat-based architectures do not.

Agent workloads also change the compliance conversation. A human user typing a prompt is a user action. An agent invoking a tool is a system action that happens to be user-attributable. The audit schema has to capture both, because the regulator's question "who did this" is asked on any system action, not only ones where a human typed. audit_events rows with per-call trace IDs and explicit call-source columns answer this. Vendor dashboards typically do not, because vendors do not have direct visibility into agent runtime state on a customer's endpoint.

Identity moves from SSO to per-device, per-request

Enterprise identity in 2026 is centred on SSO. An employee logs into their laptop, SSO gives them a session, the session carries across applications. That model was designed for a world where applications ran in the browser and identity was a user claim. Agentic AI challenges this. An agent running on the developer's laptop at 2 a.m. invoking a tool call is either the user, or the agent, or both, and the distinction matters. An auditor who finds a destructive action in a log wants to know whether the user was at the keyboard.

Per-device identity, carried by an mTLS client certificate provisioned through MDM and backed by TPM on Windows or Secure Enclave on macOS, is how this question gets answered. The certificate pins the action to a device. The JWT scoped by the certificate pins the action to a session. The audit row pins the action to a trace ID. Chain of custody from prompt through tool through MCP server ends up in one SQL query. This is not the default in 2026. It is the default in 2030. Enterprises that start with PAT and session tiers in 2026 and move to mTLS as the regulated business units expand will have a three-year head start on the compliance teams that wait.

Regulation moves from principles to specifics

The first wave of AI regulation (EU AI Act, state-level US laws, the NIST AI RMF) is principle-heavy. Risk management. Transparency. Human oversight. The second wave, arriving through 2027 and 2028, is specifics. Sectoral rules that say exactly what needs to be logged, exactly how long, exactly what evidence needs to be produced in a supervisory examination. The FCA, the OCC, the PRA, Germany's BaFin, and the ECB are all pre-positioning for this. Insurance regulators are further ahead in some jurisdictions.

The specifics will be schema-shaped. "The system shall maintain a record of each AI-assisted decision including the model, the provider, the policy in force at the time, the user identifier, and the tool calls invoked on the user's behalf." That is a sentence you can write today from any of a dozen draft sectoral rules. An architecture where those fields are already columns on every audit row is ready for the rule. An architecture where those fields have to be reconstructed from vendor dashboards during an audit is not.

Compute localisation becomes routine

In 2026, most enterprise AI inference happens in a small number of hyperscaler regions. By 2030, sovereign-cloud and sector-specific compute requirements will fragment this. The French DGFiP, the German Federal Office for Information Security, and several US states have already signalled that certain workloads must run on in-country or in-sector compute. Sovereign cloud offerings from AWS, Microsoft, Google, and domestic providers will receive targeted AI contracts. The enterprise that wants to serve multiple sovereign environments from one AI architecture needs a routing primitive that picks the upstream per call based on the user's jurisdiction claim.

A gateway with per-JWT-claim routing already does this. A vendor-hosted client does not, because the vendor cannot know your sovereignty rules before requests hit its endpoint. Enterprises with a strong EU presence, a strong regulated-sector presence, or strong US state-government contracts will operate multi-sovereign AI by 2028. For them, the architecture that makes multi-sovereign AI a config change rather than a client migration will have a compounding time-to-deploy advantage.

The result

The enterprise AI architecture that ages well from 2026 to 2030 has four properties. The client is provider-agnostic, because the model-tier leader changes. The identity layer is per-device and per-user, because regulators are moving that way. The audit layer is schema-owned and queryable, because supervision is becoming specific. The routing layer picks the upstream per call, because cost, sovereignty, and availability all vary across providers. Cowork pointed at a gateway you own satisfies all four. Cowork pointed at api.anthropic.com satisfies one.

This is not a prediction that Anthropic loses. It is a prediction that Anthropic keeps the client, which is the most valuable thing on a developer's laptop, and that the infrastructure underneath becomes plural. The opening they shipped in April 2026 is consistent with that endgame. It is not consistent with a single-provider endgame, which is why it matters.

What Self-Governed Cowork Actually Gives You

Five capabilities move from vendor to enterprise when Cowork points at your gateway. Each is a platform problem the enterprise already knows how to solve for a dozen other systems. None of them is a feature.

Inference

Every /v1/messages call lands on your gateway. From there, it routes to whichever upstream the policy picks: Anthropic direct, Amazon Bedrock, Google Vertex, Azure AI Foundry, OpenAI, Google Gemini, Groq, a self-hosted vLLM or Ollama cluster inside the perimeter, or a mix. Routes match on the model name, the agent identifier, the department claim on the JWT, the user identifier, or the request cost estimate, and each route can carry a failover order. A team in the regulated business unit can pin to an on-prem Llama cluster while the engineering group routes to Bedrock us-east-1. A finance team in Frankfurt can route to Bedrock eu-central-1 for data residency while a sibling team in London routes to Bedrock eu-west-2 for latency. The same Cowork client serves all of them. Moving the whole fleet onto a cheaper provider is a YAML edit and a SIGHUP. Moving a single department is a one-line route condition.
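First-match routing with a failover order is simple enough to sketch. Below, the rules are plain data, mirroring what a few lines of YAML would express. The request dict merges request fields such as the model with JWT claims such as the department. The upstream labels and the `office` claim are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Route:
    upstream: str                                   # illustrative label, e.g. "bedrock-eu-central-1"
    match: dict = field(default_factory=dict)       # request field or JWT claim -> required value
    fallbacks: list = field(default_factory=list)   # tried in order if the upstream is unhealthy


def pick_upstream(routes: list, request: dict, healthy: set) -> str:
    """First matching route wins; within it, try the primary, then each fallback."""
    for route in routes:
        if all(request.get(k) == v for k, v in route.match.items()):
            for candidate in [route.upstream, *route.fallbacks]:
                if candidate in healthy:
                    return candidate
            raise LookupError(f"no healthy upstream for route {route.upstream}")
    raise LookupError("no route matched")
```

A route with an empty `match` acts as the catch-all, so it belongs at the end of the list.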

The routing config is small and declarative. A few dozen lines of YAML describe the full rule set, referencing an existing secrets file for upstream API keys. No key material ends up in the config itself. Rotation of an upstream key is a secrets-file edit. The gateway re-reads on signal and swaps keys without dropping connections.
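Reload-on-signal can be sketched with Python's standard `signal` module. The secrets file is assumed here to be a JSON object that maps upstream names to API keys; the source does not specify its format. The handler swaps a single dict reference and never restarts the process, so existing connections are not touched. If the new file cannot be read, the previous keys stay in service.

```python
import json
import signal


class UpstreamSecrets:
    """Upstream API keys read from a secrets file and re-read on SIGHUP (hypothetical format)."""

    def __init__(self, path: str):
        self.path = path
        self._keys = self._read()

    def _read(self) -> dict:
        with open(self.path) as f:
            return json.load(f)

    def reload(self, signum=None, frame=None) -> None:
        try:
            fresh = self._read()
        except (OSError, ValueError):
            return  # unreadable or half-written file: keep serving the previous keys
        # Single reference swap: a request that already fetched a key keeps it,
        # later requests see the rotated value, and no listener is restarted.
        self._keys = fresh

    def get(self, upstream: str) -> str:
        return self._keys[upstream]

    def install(self) -> None:
        # POSIX only; Python requires handlers to be installed from the main thread.
        signal.signal(signal.SIGHUP, self.reload)
```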

What this actually buys you is control over the two variables that matter for AI cost and compliance. Where the inference runs, which drives cost and residency, and which model served the call, which drives quality and latency. Both are gateway decisions. Neither is a vendor decision, and neither requires a contract renegotiation to change.

Identity

Cowork has no identity of its own in gateway mode. Every /v1/messages call carries a JWT the gateway mints, scoped to (UserId, SessionId, ClientId, TenantId) and signed with the enterprise signing key. The payload also carries the policy version in force at mint time, which is how you later prove to an auditor which governance rules applied to a given request. The gateway verifies the token on every call. A revoked token fails verification and the request returns 401 before it can reach any upstream.
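Gateway-side verification can be sketched with the standard library alone if we assume HS256, a shared-secret HMAC. The source does not name the algorithm, and a production gateway would more likely use an asymmetric one and a maintained JWT library. The claim names `sid`, `tid`, and `policy_version` are also hypothetical. The sketch pins the algorithm, checks the signature in constant time, then checks expiry and a revoked-session set. Any failure raises, and the gateway would map that to a 401.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(part: str) -> bytes:
    return base64.urlsafe_b64decode(part + "=" * (-len(part) % 4))


def mint_jwt(claims: dict, key: bytes) -> str:
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    body = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(key, f"{header}.{body}".encode(), hashlib.sha256).digest()
    return f"{header}.{body}.{_b64url_encode(sig)}"


def verify_jwt(token: str, key: bytes, revoked_sessions: set, now=None) -> dict:
    """Return the claims, or raise PermissionError (which the gateway would map to 401)."""
    try:
        header_b64, body_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(body_b64))
        given_sig = _b64url_decode(sig_b64)
    except ValueError as exc:
        raise PermissionError("malformed token") from exc
    # Pin the algorithm server-side instead of trusting whatever the token header says.
    if header.get("alg") != "HS256":
        raise PermissionError("unexpected algorithm")
    expected = hmac.new(key, f"{header_b64}.{body_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, given_sig):
        raise PermissionError("bad signature")
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise PermissionError("token expired")
    if claims.get("sid") in revoked_sessions:
        raise PermissionError("session revoked")
    return claims
```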

Three authentication tiers stack as a capability ladder. PAT auth works for pilot cohorts. The admin mints a token at the gateway, the user runs the helper binary's login command once with the token, and the token lives in the OS keystore (Keychain on macOS, Credential Manager on Windows, Secret Service on Linux). PAT is simple and appropriate for a team of fifty volunteers who want a two-week trial.

Session auth works for general rollout. The helper opens the enterprise IdP's OAuth URL in the user's browser, the user authenticates normally, the IdP redirects the browser with the authorization code to a loopback listener the helper runs, and the session cookie lands in the OS keystore. The JWT minted from the session respects the TTL set in managed preferences. Session auth gives you SSO-aligned AI access without the brittleness of a long-lived static token.
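The loopback step can be sketched with `http.server`. The helper binds an ephemeral port on 127.0.0.1, puts the resulting `redirect_uri` into the IdP URL, and serves exactly one request. The `/callback` path and the `state` check are assumptions: `state` is standard OAuth CSRF protection, but the source does not describe it. Exchanging the code for a session is left out.

```python
import http.server
import urllib.parse


def start_loopback_listener(expected_state: str):
    """Bind 127.0.0.1 on an ephemeral port; return (redirect_uri, wait_for_code)."""
    result: dict = {}

    class Handler(http.server.BaseHTTPRequestHandler):
        def do_GET(self):
            params = urllib.parse.parse_qs(urllib.parse.urlparse(self.path).query)
            code = params.get("code", [None])[0]
            ok = code is not None and params.get("state", [None])[0] == expected_state
            if ok:
                result["code"] = code
            self.send_response(200 if ok else 400)
            self.send_header("Content-Type", "text/plain")
            self.end_headers()
            self.wfile.write(b"You can close this tab." if ok else b"Sign-in failed.")

        def log_message(self, *args):
            pass  # silence the default per-request logging to stderr

    server = http.server.HTTPServer(("127.0.0.1", 0), Handler)  # port 0: OS picks a free port
    redirect_uri = f"http://127.0.0.1:{server.server_address[1]}/callback"

    def wait_for_code(timeout: float = 120.0):
        server.timeout = timeout
        server.handle_request()  # serve exactly one request, or give up after the timeout
        server.server_close()
        return result.get("code")

    return redirect_uri, wait_for_code
```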

mTLS auth is the regulated-business-unit tier. The helper reads a client certificate from an OS keystore reference (Keychain label on macOS, certificate-store label on Windows, PKCS#11 URI on Linux), presents it to the gateway's mTLS endpoint, and receives a JWT carrying the certificate's SHA-256 fingerprint as a claim. The certificate is provisioned at device-enrolment time by the MDM, backed by TPM on Windows or Secure Enclave on macOS, and rotated on whatever cycle the corporate PKI runs. Revocation is a CRL entry. The next /v1/auth/cowork/mtls call returns 403 and the client falls back to a sign-in prompt.

Tier choice is not uniform across the fleet. Pilot teams typically run PAT. General rollout runs session. Regulated units run mTLS. All three can coexist because the gateway's capability probe advertises which tiers a given cohort accepts, and the helper walks the ladder, selecting the strongest available.
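Ladder selection reduces to intersecting what the gateway's capability probe advertises for this cohort with what the device can actually use, then taking the strongest tier. The probe's response format is not documented in the source, so the sketch assumes it has already been parsed into a set of tier names.

```python
# Strongest first, matching the ladder described above.
TIER_STRENGTH = ["mtls", "session", "pat"]


def choose_tier(advertised: set, locally_available: set) -> str:
    """Pick the strongest tier the gateway accepts for this cohort and the device supports."""
    for tier in TIER_STRENGTH:
        if tier in advertised and tier in locally_available:
            return tier
    raise LookupError("no mutually supported authentication tier")
```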

Audit

Every call writes one row to audit_events before the response returns to the caller. The schema has explicit columns for the seven identity headers, plus model name, provider name, token counts in and out, microdollar cost, latency, outcome (allowed, denied, or error), and a JSON payload holding redacted prompt and response content plus any tool-call metadata. Indexes on (tenant_id, occurred_at DESC), (trace_id), and (user_id, occurred_at DESC) cover the queries a compliance team or a SOC analyst actually runs.

The trace_id column is the one that makes the whole thing work. Every tool call the model triggers inherits the parent call's trace ID. Every MCP execution that results from a tool call inherits it again. One SQL join on trace_id returns the full lineage of any user action: the prompt, the model that served it, every tool the model chose to invoke, every MCP server it reached, token counts, microdollar cost, the outcome of each step, and the policy version in force at mint time. "What did jane@example.com do with Cowork between 14:00 and 16:00 last Tuesday" becomes a one-line query.
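Here is a sketch of the lineage idea against an in-memory SQLite database standing in for Postgres. The table is a cut-down, hypothetical subset of the columns described above, and the rows are invented sample data. The only point is that one indexed filter on trace_id returns the whole chain in order.

```python
import sqlite3

# Hypothetical subset of the audit_events columns described above.
SCHEMA = """
CREATE TABLE audit_events (
    id          INTEGER PRIMARY KEY,
    tenant_id   TEXT NOT NULL,
    user_id     TEXT NOT NULL,
    trace_id    TEXT NOT NULL,
    call_source TEXT NOT NULL,   -- e.g. 'cowork', 'subagent', 'job'
    model       TEXT,
    provider    TEXT,
    outcome     TEXT NOT NULL,   -- 'allowed', 'denied' or 'error'
    cost_micro  INTEGER NOT NULL,
    occurred_at TEXT NOT NULL    -- ISO-8601 timestamp
);
CREATE INDEX audit_trace ON audit_events (trace_id);
"""

def lineage(conn, trace_id):
    """Every row sharing one trace_id, oldest first: the full call chain."""
    return conn.execute(
        "SELECT occurred_at, call_source, model, provider, outcome, cost_micro "
        "FROM audit_events WHERE trace_id = ? ORDER BY occurred_at, id",
        (trace_id,),
    ).fetchall()

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany(
    "INSERT INTO audit_events (tenant_id, user_id, trace_id, call_source, model,"
    " provider, outcome, cost_micro, occurred_at) VALUES (?,?,?,?,?,?,?,?,?)",
    [  # invented sample rows
        ("org_acme", "jane@example.com", "t-1", "cowork", "sonnet", "anthropic",
         "allowed", 4200, "2026-04-14T14:05:00"),
        ("org_acme", "jane@example.com", "t-1", "subagent", "sonnet", "anthropic",
         "allowed", 1800, "2026-04-14T14:05:02"),
        ("org_acme", "jane@example.com", "t-2", "cowork", "sonnet", "bedrock",
         "denied", 0, "2026-04-14T15:10:00"),
    ],
)
for row in lineage(conn, "t-1"):
    print(row)
```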

SIEM export runs in parallel. Every audit row ships as a JSON event on a separate topic ingested by Splunk, ELK, Datadog, or Sumo Logic. The JSON shape mirrors the SQL row. Teams that already run a SIEM usually prefer the JSON path for real-time monitoring. Teams that want ad-hoc querying prefer the SQL path. Both are live simultaneously.

Cost attribution follows from the schema without additional machinery. SUM(cost_micro) GROUP BY user_id is per-developer spend. GROUP BY (tenant_id, provider) is cross-provider comparison. GROUP BY (tenant_id, payload ->> 'department') is per-department, assuming the gateway stamps the department claim into the payload. Finance gets a spreadsheet with real numbers. Outliers get conversations with their tech leads.
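The following sketch writes the same grouping arithmetic out in Python so the microdollar conversion is explicit. It runs over dict-shaped rows with made-up values. In production the aggregation belongs in SQL, and reading `department` from the payload assumes the gateway stamped it there.

```python
from collections import defaultdict

def spend_usd(rows, *keys):
    """Sum cost_micro grouped by the given keys, then convert microdollars to USD.

    'department' is read from the row's payload; every other key is a column.
    """
    totals = defaultdict(int)  # integer microdollars, so no float drift while summing
    for row in rows:
        group = tuple(
            row["payload"].get(k) if k == "department" else row[k] for k in keys
        )
        totals[group] += row["cost_micro"]
    return {group: micro / 1_000_000 for group, micro in totals.items()}

# Invented sample rows shaped like audit_events.
rows = [
    {"tenant_id": "org_acme", "user_id": "jane", "provider": "anthropic",
     "cost_micro": 1_250_000, "payload": {"department": "finance"}},
    {"tenant_id": "org_acme", "user_id": "jane", "provider": "bedrock",
     "cost_micro": 750_000, "payload": {"department": "finance"}},
    {"tenant_id": "org_acme", "user_id": "raj", "provider": "bedrock",
     "cost_micro": 500_000, "payload": {"department": "platform"}},
]
print(spend_usd(rows, "tenant_id", "department"))
```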

Extension supply chain

The supply chain for Claude Cowork plugins, skills, and managed MCP servers is the signed manifest: one Ed25519-signed document, served from the gateway and resolved per user from the JWT. The plugin files themselves are SHA-256 pinned inside the manifest. A file swapped on the gateway without a matching manifest update therefore fails hash verification on every client simultaneously. The binary stages files under org-plugins/.staging/ before an atomic rename, so a partial download or a mismatched hash never produces a half-installed plugin visible to Cowork.
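The client-side half of this supply chain can be sketched with the standard library alone. The sketch verifies every file against its pinned SHA-256, writes into a staging directory, then moves the finished directory into place with one rename. It assumes the manifest's Ed25519 signature has already been verified. Python's standard library has no Ed25519; a library such as `cryptography` provides it. `install_plugin` and its arguments are hypothetical, and the sketch accepts only flat file names to keep path traversal out of the example.

```python
import hashlib
import os
import shutil
from pathlib import Path

def install_plugin(plugin_id, files, pinned, plugins_dir):
    """Stage plugin files, verify each against its pinned SHA-256, then rename.

    files:  {file_name: bytes} as downloaded from the gateway.
    pinned: {file_name: hex_sha256} from a manifest whose signature the caller
            has ALREADY verified (signature checking is not shown here).
    Raises on any failure and leaves nothing new visible under plugins_dir.
    """
    plugins_dir = Path(plugins_dir)
    staging = plugins_dir / ".staging" / plugin_id
    final = plugins_dir / plugin_id
    if staging.exists():
        shutil.rmtree(staging)  # discard leftovers from an earlier failed sync
    staging.mkdir(parents=True)
    try:
        if set(files) != set(pinned):
            raise ValueError("file set does not match the manifest")
        for name, data in files.items():
            if Path(name).name != name or name in ("", ".", ".."):
                raise ValueError(f"unexpected path in manifest: {name!r}")
            if hashlib.sha256(data).hexdigest() != pinned[name]:
                raise ValueError(f"SHA-256 mismatch for {name!r}")
            (staging / name).write_bytes(data)
        # Only a fully verified directory ever reaches the visible location.
        if final.exists():
            shutil.rmtree(final)
        os.replace(staging, final)  # atomic when both paths share a filesystem
    except Exception:
        shutil.rmtree(staging, ignore_errors=True)
        raise
    return final
```

`os.replace` is atomic only when source and destination sit on the same filesystem, which is why staging lives under the plugins directory. Replacing an existing version removes the old directory first. This leaves a brief window with no plugin rather than a half-installed one. A production client might swap versioned directories instead.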

The manifest carries revocations as first-class entries. Publishing a new manifest with revocations: [{"kind": "plugin", "id": "offending-plugin"}] causes every fleet client to remove the offending artefact atomically on its next sync. For instant revocation, push the new manifest through MDM in parallel with the gateway publish. The SOC sees the revocation landing in audit_events within the same minute.
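Once the manifest is trusted, revocation handling reduces to set arithmetic. This is a minimal sketch. It assumes the client tracks installed artefacts as (kind, id) pairs. The function name is hypothetical, and actual removal would reuse the same stage-and-rename discipline as installation.

```python
def apply_revocations(installed, manifest):
    """Return (kept, removed) after applying a manifest's revocation list.

    installed: set of (kind, id) pairs currently present on the client.
    manifest:  parsed, signature-verified manifest dict with an optional
               "revocations" list of {"kind": ..., "id": ...} entries.
    """
    revoked = {(r["kind"], r["id"]) for r in manifest.get("revocations", [])}
    removed = installed & revoked
    return installed - removed, removed
```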

Per-user scoping is the detail that unlocks catalogue curation at enterprise scale. The manifest resolver at the gateway reads the JWT claim set and produces a manifest specific to that user. Finance engineers see finance plugins. Platform engineers see platform plugins. Security engineers see the subset of MCP servers security has approved. A single manifest publish can move three different populations onto three different catalogues in one operation. Audit evidence is the manifest version ID recorded in each user's next sync-status file.
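At heart, a per-user resolver is a filter over the catalogue keyed on verified claims. The sketch assumes a hypothetical `audiences` field on each catalogue entry and filters on the `department` claim alone. A real resolver would also sign the result before serving it.

```python
def resolve_manifest(catalogue, claims, version):
    """Build the manifest one user receives from the shared catalogue.

    catalogue: list of entries, each carrying a hypothetical "audiences" set
               of department names alongside its kind/id data.
    claims:    the verified JWT claim set; only "department" is used here.
    """
    dept = claims.get("department")
    entries = [
        {k: v for k, v in entry.items() if k != "audiences"}  # strip routing metadata
        for entry in catalogue
        if dept in entry["audiences"]
    ]
    return {"version": version, "entries": entries}
```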

The OWASP Agentic A5 risk (compromised extensions or plugins) stops being a risk you mitigate with controls and starts being an attack surface you do not expose. A plugin cannot appear on a user's machine without surviving signature verification, hash verification, and atomic rename. An attacker who gets code onto the gateway without access to the signing key still cannot deliver it to clients.

Fleet

Cowork runs on laptops the endpoint team already manages. The five managed-preference keys push through Intune, Jamf, and Group Policy, the same consoles that carry every other endpoint policy. An MDM push retargets the whole fleet at a new gateway, a new credential helper, or a new auth tier. There is no separate AI deployment tool to operate, no separate agent to run, and no separate service to install on the endpoint beyond the credential-helper binary.

Three teams split the responsibility. The AI platform team owns the routing config, which is where model choices, upstream selection, and per-department routing rules live. The security team owns the signing key and the manifest catalogue, which is where plugin curation, MCP allowlist membership, and revocations live. The endpoint team owns the MDM profile, which is where the five keys go. The interfaces between the three teams are narrow and documented. An AI platform team that wants to retarget the fleet to a new gateway hands the endpoint team a new URL. An endpoint team that wants to roll out an mTLS tier hands the security team a list of devices for certificate provisioning. A security team that wants to ship a new plugin hands the AI platform team the signed manifest URL.

No new org function is required. Any enterprise large enough to run Cowork at fleet scale already has three teams fitting these shapes for other systems. The Cowork rollout slots into the existing interfaces.

Deploying Safely to One Thousand or More Users

The answer to "how do I roll Cowork out to a thousand developers without a bad week" is the answer to "how does this enterprise normally roll out anything to a thousand developers". The architecture does not invent new rollout primitives. It inherits the ones you already use. What it does is make the boundary between what the vendor controls and what you control clean enough that your existing primitives apply.

This section walks the full rollout in the order you will actually run it.

Shape the three teams before the first commit

Before the gateway stands up, assign ownership. The AI platform team owns the gateway service and the routing config. The security team owns the Ed25519 signing key, the HSM or KMS that holds it, the manifest catalogue, and the MCP allowlist. The endpoint team owns the MDM profile and the five managed-preference keys. Name a single escalation contact in each. Put their contact details in the runbook before the pilot starts, not during the first incident.

Agree on the audit retention policy before the first call flows. The audit_events table grows linearly with call volume. At a realistic rate of fifty calls per developer per day, the hot payload grows by a few gigabytes per thousand developers per month. Decide now whether the compliance retention window is six months, eighteen months, or longer, and whether rows older than a hot window move to a cheaper archive tier. Both choices work. Indecision does not, because you will end up running SIEM export in production without a tested archive path.
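The storage estimate is simple enough to sanity-check. This is a back-of-envelope sketch. The roughly two-kilobyte average row size, covering redacted payload and index overhead, is an assumption. Replace it with a measurement from your own staging table.

```python
def monthly_audit_growth_gb(developers, calls_per_dev_per_day, avg_row_bytes, days=30):
    """Rough hot-storage growth of audit_events per month, in decimal gigabytes."""
    rows_per_month = developers * calls_per_dev_per_day * days
    return rows_per_month * avg_row_bytes / 1e9

# Assumption: about 2,000 bytes per row, including redacted payload and index overhead.
print(monthly_audit_growth_gb(1_000, 50, 2_000))  # 1.5 million rows -> 3.0
```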

Pick the SIEM destination before the gateway publishes its first event. Every serious enterprise has a target. Confirm the event shape, the topic, and the retention with the SOC before traffic starts. Changing SIEM integration later is easy. Changing the event shape once six months of data have been retained is not.

Stand up the gateway in staging

The gateway is a service. Run it the way you run other tier-1 internal services. Two availability zones. A load balancer in front. Health checks against the /v1/auth/cowork/capabilities endpoint, which is unauthenticated and returns a small JSON document fast. Certificate rotation through ACM or cert-manager. Service accounts for secrets access. Logging into the standard platform. Metrics into the standard platform.

A Postgres instance holds audit_events and the other governance tables. A read replica feeds the SIEM export. An archive job moves rows older than the hot window to cheaper storage. Standard database operations.

A secrets file holds the upstream API keys for every provider the gateway will route to. Each key has a name. The gateway references names in the routing config, not key material. Rotation is a secrets-file edit.

Run a soak test. For an hour, point a synthetic client at the staging gateway with a realistic call shape: roughly two-hundred-token inputs, roughly eight-hundred-token outputs, SSE streaming, and a tool call in every third request. Confirm four things: end-to-end latency is within your tolerance, the audit table is writing, the SIEM is receiving, and the load balancer health checks stay green under load. If any of these fails in staging, the pilot does not start.

Cut the pilot

The pilot is eight to twelve volunteer engineers. Pick them from a team that already reports to the AI platform team or the security team, so internal escalation is fast. Deploy sp-cowork-auth to their laptops (or whichever credential helper you run) on a local install, not via MDM yet. Mint a PAT for each pilot user. Route all pilot traffic to Anthropic direct as the upstream. No plugins in the manifest yet. No agents. No MCP allowlist yet. The pilot exists to prove the pipe works end-to-end.

Pilot exit criteria are specific. Every pilot user has at least five chat turns in audit_events. Median latency overhead of the gateway versus direct Anthropic is under two hundred milliseconds. No outcome = 'error' rows from the pilot remain unexplained. At least one revocation drill has been run and confirmed: revoke one pilot user's PAT at the gateway, confirm their next call returns 401, and confirm an outcome = 'denied' row lands. If any of those fails, the pilot extends. If all pass, you advance.
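The pilot exit criteria are mechanical enough to encode as a checklist function run against audit extracts. This sketch takes hypothetical inputs:

- per-user turn counts, including zero for users with no rows
- per-call gateway overhead in milliseconds
- a count of error rows nobody has explained
- whether a revocation drill was confirmed

```python
from statistics import median

def pilot_exit_ready(turns_by_user, overhead_ms, unexplained_errors, drill_confirmed):
    """Evaluate the four pilot exit criteria; return (ready, failed_criteria)."""
    failed = []
    if not turns_by_user or min(turns_by_user.values()) < 5:
        failed.append("every pilot user has >= 5 chat turns")
    if not overhead_ms or median(overhead_ms) >= 200:
        failed.append("median gateway overhead < 200 ms")
    if unexplained_errors:
        failed.append("no unexplained outcome = 'error' rows")
    if not drill_confirmed:
        failed.append("revocation drill confirmed")
    return not failed, failed
```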

First ring

The first ring is one full department. Fifty to one hundred fifty engineers. MDM profile pushed through Intune and Jamf. The credential helper installed on every device through the normal Win32 LOB app pattern on Windows and a Jamf policy on macOS. Session-cookie auth tier enabled. Signed manifest live with one internal plugin the department actually wants. An MCP allowlist containing exactly the servers the security team has approved, which is zero or one to begin with.

Rollout is gated on audit signal, not calendar. The first-ring exit gate is a list. Per-user latency P95 under one second for the gateway leg. Error rate under 0.5% across the ring. Every user in the ring has at least one successful sync of the signed manifest, visible as a fresh last-sync.json on every device through an MDM query. SIEM is ingesting at production volume without dropped events. Compliance has reviewed a sample lineage query from the ring and signed it off as SOC 2 CC7.2 evidence.
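The measurable first-ring gates can be checked the same way. This sketch uses the nearest-rank definition of P95. That is one of several percentile conventions, and your metrics platform may interpolate instead. It reads "per-user" literally and computes a P95 for each user. SIEM completeness and compliance sign-off remain human checks, and all names here are hypothetical.

```python
def p95(values):
    """Nearest-rank 95th percentile: the smallest value covering 95% of samples."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n), 1-based
    return ordered[rank - 1]

def first_ring_gate(latencies_by_user, outcomes, synced_users, ring_users):
    """Evaluate the automatable first-ring exit gates.

    latencies_by_user: {user_id: [gateway-leg latency in ms, ...]}
    outcomes:          outcome strings for every call in the ring
    """
    error_rate = outcomes.count("error") / len(outcomes)
    return {
        "p95_under_1s": all(p95(v) < 1000 for v in latencies_by_user.values()),
        "error_rate_under_0_5pct": error_rate < 0.005,
        "all_users_synced": set(ring_users) <= set(synced_users),
    }
```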

Watching audit_events during the first ring is the thing that makes this rollout safe. Three queries run on a weekly cadence.

```sql
-- Outcome distribution for the past week.
-- A spike in denied or error rows triggers a rollout hold.
SELECT outcome, count(*) AS row_count
FROM audit_events
WHERE tenant_id = 'org_acme'
  AND occurred_at >= now() - interval '7 days'
GROUP BY outcome;

-- Top users by call count in the past day.
-- Outliers are either heavy users or runaway agents.
SELECT user_id, count(*) AS calls_24h
FROM audit_events
WHERE tenant_id = 'org_acme'
  AND occurred_at >= now() - interval '1 day'
GROUP BY user_id
ORDER BY calls_24h DESC
LIMIT 20;

-- Cost by upstream provider for the past week.
-- Shows where the money is going and which routes hold.
-- The numeric cast keeps round(x, 2) valid whatever integer type cost_micro uses.
SELECT provider, round(sum(cost_micro)::numeric / 1e6, 2) AS cost_usd
FROM audit_events
WHERE tenant_id = 'org_acme'
  AND occurred_at >= now() - interval '7 days'
GROUP BY provider
ORDER BY cost_usd DESC;
```

Any result that deviates from baseline expectations triggers investigation before the next ring starts. A sudden spike in denied rows usually indicates revocation in progress or a policy rule rolling out. A sudden spike in error rows usually indicates a gateway misconfiguration or an upstream degradation. A single-user call-count outlier usually indicates a runaway agent loop, which the gateway rate-limiter will catch in parallel.
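As a starting point, the "deviates from baseline" judgement can be a simple share comparison over the output of the first query. This is only a sketch. The doubling factor and the floor are placeholder thresholds, not recommendations, and real baselines usually account for day-of-week patterns.

```python
def outcome_shares(counts):
    """Convert {outcome: row_count} into {outcome: share of all rows}."""
    total = sum(counts.values())
    return {outcome: n / total for outcome, n in counts.items()}

def flag_deviations(baseline_counts, current_counts, factor=2.0, floor=0.001):
    """Return the outcomes ('denied', 'error') whose share exceeds factor x baseline.

    floor keeps a near-zero baseline share from flagging on a handful of rows.
    Both thresholds are placeholders, not recommendations.
    """
    base = outcome_shares(baseline_counts)
    current = outcome_shares(current_counts)
    return sorted(
        outcome for outcome in ("denied", "error")
        if current.get(outcome, 0.0) > max(base.get(outcome, 0.0), floor) * factor
    )
```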

Subsequent rings

Rings two and three are additional departments. Add an mTLS tier for any department that needs it (regulated business units, executive teams, finance). Provision the device certificates through the MDM's existing PKI connector. Add the tier to the gateway's capability probe. The helper picks mTLS if a certificate is present and falls back to session if it is not.

Enable a second upstream on the gateway. Route one model family to Bedrock, Vertex, or Azure OpenAI. Watch provider distribution in audit_events during the first day of the cutover. Latency, error rate, and cost all need to land in range.
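The routing config is YAML whose schema this page does not specify. The sketch below shows only the lookup it implies: map a requested model name to an upstream by family prefix, falling back to a default. The family prefixes, upstream names, and `resolve_upstream` are hypothetical.

```python
def resolve_upstream(model, routes, default):
    """Pick the upstream for a model name by longest matching family prefix.

    routes: {"family-prefix": "upstream-name"}; unmatched models use default.
    """
    matches = [prefix for prefix in routes if model.startswith(prefix)]
    if not matches:
        return default
    return routes[max(matches, key=len)]

# Hypothetical cutover: one family to Bedrock, another to Vertex, the rest direct.
ROUTES = {"claude-sonnet": "bedrock", "claude-haiku": "vertex"}
```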

Introduce the first real agent into the manifest, binding a skill, a model, and a specific MCP allowlist. The agent's calls show up in audit_events with call_source = 'subagent'. The SOC reviews the first week of subagent traffic as a lineage sample.

Final ring and decommissioning

The last ring covers the remainder of the fleet. Often this is an opt-in phase where individual users who were missed pick up the MDM profile through their manager. At this point, every MDM-enrolled laptop in the organisation is pointing at the gateway.

Revoke direct API access on the Anthropic side. Individual OAuth tokens that were used during the unmanaged period are invalidated. Some new Cowork sign-ins may still slip past the gateway, through a personal account, an untagged device, or a third-party laptop on the guest network. These are visible and triageable through egress monitoring.

Produce the compliance evidence pack. The table in the Compliance and Governance Mapping section below lists which framework controls it satisfies. Walk it through the internal audit team. Walk it through the external auditor on the next interim review.

Revocation drills

A rollout without revocation drills is not a rollout. The drill is simple. Pick a user at random. Revoke their PAT, session, or device certificate at the gateway. Watch their next audit_events row. Confirm outcome = 'denied' and that the payload carries the revoked credential's ID. Restore their access. Document the median time from revocation command to first denied row.

Do this drill at pilot, at first ring, at second ring, at final ring. Record the median each time. The number is what you hand to the compliance team when they ask about emergency revocation capability. Most programmes see a median under sixty seconds. The TTL window governs the worst case.
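Once each drill's two timestamps are recorded, computing the drill statistic takes a few lines. This sketch assumes two things: whoever runs the drill logs the revocation command time, and the first denied row's occurred_at is pulled from audit_events. The function name is hypothetical.

```python
from datetime import datetime
from statistics import median

def drill_median_seconds(drills):
    """Median seconds from revocation command to first 'denied' audit row.

    drills: list of (revoked_at, first_denied_at) ISO-8601 timestamp pairs.
    """
    gaps = [
        (datetime.fromisoformat(denied) - datetime.fromisoformat(revoked)).total_seconds()
        for revoked, denied in drills
    ]
    return median(gaps)
```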

Incident response

One new incident class emerges with AI rollout: a user reports a prompt or completion that should not have been generated. The response path runs through three queries and one patch. The first query looks up the trace_id the user reports (Cowork exposes it in the UI). The second is the lineage join on that trace ID, which shows what the model did, which tools it called, and what the policy was. The third reads the policy_ver column, which shows which governance bundle was in force. The patch is a new governance rule, a new manifest with a revocation, or an upstream route change. The AI platform team can run any of these in minutes.

A second new class is the runaway agent. The symptom is a spike in the audit_events row count for a single user in a short window. The response is a rate-limit rule at the gateway scoped to that user or that agent, pushed in a config reload. No endpoint changes are needed. The agent's next batch of calls hits the new rate limit and backs off.
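The source does not say which algorithm the gateway's rate limiter uses. A token bucket keyed by user, or by user and agent, is one common choice. It is sketched here with an injectable clock so it can be tested. The rates and capacities are placeholders.

```python
import time

class TokenBucket:
    """Per-key token bucket: refills `rate` tokens per second, bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.state = {}  # key -> (tokens, time of last update)

    def allow(self, key):
        """Spend one token for `key` if available; return whether the call may proceed."""
        now = self.clock()
        tokens, last = self.state.get(key, (self.capacity, now))
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self.state[key] = (tokens - 1, now)
            return True
        self.state[key] = (tokens, now)
        return False
```

Keying on a string such as `"jane@example.com/agent-x"` scopes the limit to one agent without throttling the same user's interactive prompts.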

The 1,000-seat stable state

At steady state after rollout completes, the rhythm is quiet. The endpoint team pushes MDM updates during monthly maintenance windows. The AI platform team publishes routing and manifest changes through the normal deployment pipeline. The security team rotates signing keys on the schedule the PKI programme already uses. The SOC runs audit_events queries on the same cadence they run queries against every other enterprise log.

Day-two AI operations reduce to catalogue curation (which plugins, skills, and MCP servers the manifest ships) and cost tuning (which upstream handles which model family). Both are small workloads. Most weeks they produce zero config changes. The value of the architecture is that the weeks where they produce many changes (a new regulation, a vendor deprecation, a cost renegotiation) do not require a client-side rollout. They require a config change.

Integration With Your Existing Enterprise Stack

An AI rollout that ignores the rest of the enterprise stack produces two things. A silo that compliance will flag. A support load that nobody scheduled for. The architecture above assumes integration into what the enterprise already runs. The specifics are worth walking through because most of the value only shows up when the integration is tight.

SIEM and SOC tooling

The audit_events table in Postgres is one half of the observability story. The SIEM is the other half. Every row written to audit_events is also emitted as a structured JSON event on a topic the SIEM ingests. Splunk via HEC, ELK via Logstash, Datadog via its API, and Sumo Logic via hosted collectors all work because the event shape is plain JSON with consistent field names.

What to do with those events matters more than the ingest mechanism. A SOC analyst hunting for AI-specific threats wants dashboards that map onto what can actually go wrong. High-confidence patterns to alert on include a sudden spike in outcome = 'denied' rows for a single user (indicates revocation in progress or compromised credentials), a surge in cost_micro from one user in a short window (runaway agent), a new provider showing up that is not in the allowed list (routing config drift), and any policy_ver value that does not match the current published bundle (client ran against a stale policy).
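Two of these patterns can be checked on each event as it arrives. This sketch uses the field names given above (provider, policy_ver). The allowed-provider set and current policy version are inputs the SOC would keep in sync with the published config. The spike patterns need windowed aggregation and fit better as SIEM correlation rules.

```python
def alert_reasons(event, allowed_providers, current_policy_ver):
    """Per-event checks for routing-config drift and stale policy versions."""
    reasons = []
    if event.get("provider") not in allowed_providers:
        reasons.append("provider not in allowed list")
    if event.get("policy_ver") != current_policy_ver:
        reasons.append("stale policy version")
    return reasons
```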

Dashboards to build once the data is flowing include per-department AI spend trending week-over-week, per-agent error-rate correlated with model version, and a lineage explorer that takes a trace_id and renders the full call tree. The first two drive finance and platform conversations. The third is what the SOC uses during incident response, and it saves hours during the first real AI-related incident.

ITSM and change management

Most enterprises run ServiceNow, Jira Service Management, or an equivalent ITSM platform for change tickets. AI rollout plugs in through three ticket types. A routing-config change ticket documents which upstream the gateway forwards to for a given model family, and who approved the change. A manifest-publish ticket documents which plugins, skills, and MCP servers went live, who approved them, and which signing key signed the manifest. An MDM push ticket documents which profiles went to which device groups and on which schedule. All three ticket types map directly onto your existing CAB approval flow. Nothing new is introduced.

For production operations, adding AI-specific incident templates reduces time-to-resolution. Three templates cover ninety percent of incidents. A prompt-or-completion-out-of-policy template pulls trace_id from the user and runs a lineage query against audit_events. A runaway-agent template identifies the user and agent, applies a rate limit, and logs the intervention. A gateway-degradation template pulls latency metrics, failover state, and active route, and routes to the on-call engineer for the gateway service.

Identity and access management

SSO integration with the gateway follows the same pattern as any other enterprise HTTP service. OIDC or SAML 2.0 federation to the enterprise IdP. Claims mapping translates IdP attributes into JWT claims the gateway embeds. Department, role, cost centre, and regulatory-population flags come through as claims and drive routing and manifest resolution.
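Claims mapping is a table-driven translation. The IdP attribute names and claim names below are hypothetical placeholders for whatever your IdP emits. `sub` is the standard JWT subject claim from RFC 7519.

```python
# Hypothetical mapping from IdP attribute names to gateway JWT claim names.
CLAIM_MAP = {
    "department": "department",
    "employeeRole": "role",
    "costCenter": "cost_centre",
    "regulatedPopulation": "regulated",
}

def map_claims(idp_attributes, subject):
    """Translate IdP attributes into the claim set the gateway would embed.

    Attributes not listed in CLAIM_MAP are dropped rather than passed through.
    """
    claims = {"sub": subject}
    for attr, claim in CLAIM_MAP.items():
        if attr in idp_attributes:
            claims[claim] = idp_attributes[attr]
    return claims
```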

Device identity through mTLS integrates with the existing PKI. For Intune-managed Windows fleets, the Intune Certificate Connector issues device certs and binds them to TPM. For Jamf-managed macOS fleets, SCEP profiles issue certs backed by Secure Enclave. For Linux developer workstations, Smallstep or Vault PKI provides device certs backed by PKCS#11 tokens. The gateway does not prescribe a PKI. It reads the certificate the client presents, verifies the chain, and mints a JWT that carries the cert fingerprint as a claim.
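The fingerprint claim is a SHA-256 digest over the certificate's DER encoding. This sketch uses only the standard library and assumes the TLS layer has already validated the chain. The gateway's actual claim name and encoding are not specified here. Hex is common in admin tooling. RFC 8705 certificate-bound tokens use the base64url form under `cnf` as `x5t#S256`.

```python
import base64
import hashlib
import ssl

def cert_fingerprint_hex(pem_cert):
    """SHA-256 over the certificate's DER encoding, as lowercase hex."""
    return hashlib.sha256(ssl.PEM_cert_to_DER_cert(pem_cert)).hexdigest()

def cert_thumbprint_b64url(pem_cert):
    """Same digest, base64url-encoded without padding (the x5t#S256 form)."""
    digest = hashlib.sha256(ssl.PEM_cert_to_DER_cert(pem_cert)).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```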

Privileged access management integrates through specific role claims. Break-glass accounts get a claim that the gateway recognises and that causes every call on the account to write a higher-retention audit row flagged for SOC review. This mirrors the existing PAM pattern for production-database access or kernel-level operations.
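The break-glass rule is a small decision made when the audit row is written. This sketch assumes a hypothetical `roles` claim containing `break_glass`. The retention periods are placeholders for whatever the PAM programme already mandates.

```python
def audit_disposition(claims, default_days=180, break_glass_days=2555):
    """Decide retention and SOC review flag for an audit row from the caller's claims."""
    if "break_glass" in claims.get("roles", ()):
        return {"retention_days": break_glass_days, "soc_review": True}
    return {"retention_days": default_days, "soc_review": False}
```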

Cost and FinOps tooling

FinOps teams that run Cloudability, Apptio, or an equivalent cost platform want AI cost on the same dashboards as cloud cost. The cost_micro column exports as structured cost data with user, department, and provider tags. A nightly ETL into the FinOps platform makes AI cost visible in every internal cost report. Chargeback and showback policies apply without modification. A department that already gets a monthly cloud bill gets an AI line on the same bill.

For reserved-capacity optimisation, the FinOps team wants call-volume forecasts at the provider level. The occurred_at and provider columns in audit_events give them weekly and monthly call distributions per upstream. That feeds into Bedrock reserved-throughput decisions, Vertex committed-use decisions, and Azure MACC negotiations. Without the audit data, these decisions are guesses. With it, they are informed negotiations.

Human-in-the-loop and agent oversight

The OWASP Agentic AI Top-10 includes human-oversight-bypass as a concrete risk. The architecture addresses it through explicit call-source flagging and policy-bundle versioning. Every call carries x-call-source, which is set to cowork for user-initiated prompts, subagent for agent tool calls, and job for scheduled automation. The policy bundle encodes which sources are permitted for which actions. A destructive tool call with call_source = 'subagent' can be blocked by the governance pipeline while the same call with call_source = 'cowork' is allowed, because a human typed it.
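The call-source rule reduces to a deny-by-default lookup. This sketch uses a hypothetical two-category policy. A real policy bundle would key on named tools and MCP servers and carry the version recorded as policy_ver.

```python
# Hypothetical policy: which call sources may invoke which tool categories.
POLICY = {
    "destructive": {"cowork"},                  # only human-typed prompts
    "read_only": {"cowork", "subagent", "job"},
}

def is_permitted(tool_category, call_source, policy=POLICY):
    """Deny by default: unknown categories or sources are not permitted."""
    return call_source in policy.get(tool_category, set())
```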

Approval workflows use a second pattern: a dedicated approval MCP server. A skill that needs human approval calls that server, which posts to a Slack or Teams channel, waits for a reply, and returns the decision to the agent. The governance pipeline logs every such call. Auditors can prove that a human was consulted before a specific agent action with a single query. That query filters the approval server's MCP-exec rows by the action's trace_id.

For regulated populations (finance, trading, compliance, clinical), the policy bundle can require explicit approval for any subagent action that touches named MCP servers or named tools. The policy runs before dispatch, so the agent never reaches the tool without the approval. The audit evidence is deterministic.
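The pre-dispatch approval gate can be sketched as a three-way decision. The approval record shape, keyed by trace_id, server, and tool, is an assumption. In practice it would come from the approval MCP server's own audit rows.

```python
def pre_dispatch_check(call, approvals, regulated_servers):
    """Return 'allow', 'deny' or 'needs_approval' before a tool call is dispatched.

    call:      dict with trace_id, call_source, mcp_server and tool.
    approvals: {(trace_id, mcp_server, tool): "approved" | "rejected"}.
    Only subagent calls to regulated servers require a recorded approval.
    """
    if call["call_source"] != "subagent" or call["mcp_server"] not in regulated_servers:
        return "allow"
    decision = approvals.get((call["trace_id"], call["mcp_server"], call["tool"]))
    if decision == "approved":
        return "allow"
    if decision == "rejected":
        return "deny"
    return "needs_approval"
```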

Compliance and Governance Mapping

Compliance teams ask the same questions in different frameworks. The architecture answers them once. The table below maps twenty controls that enterprise AI programmes commonly report against onto specific gateway features and concrete audit artefacts. Every row is derived from primary-source framework text. Use the table as the skeleton of the evidence pack.

| Framework and Control | Control Intent | Gateway Feature | Audit Evidence |
|---|---|---|---|
| SOC 2 CC6.1 (logical access) | Restrict access to information assets | JWT minted per call, PAT/session/mTLS tiers, revocation within one TTL window | audit_events.user_id plus revocation log, denied-call row after revocation |
| SOC 2 CC7.2 (system monitoring) | Monitor system components for anomalies | Every call writes an audit row before response, SIEM export on same trace | audit_events row count vs expected, SIEM alert rule |
| SOC 2 CC8.1 (change management) | Changes authorised and documented | Signed-manifest publish pipeline, versioned routing YAML, MDM change log | Manifest version diff plus signing KMS log, routing YAML git blame |
| ISO/IEC 27001 A.5.15 (access control) | Rules and authority established | Per-user JWT claim set resolved at gateway, role-scoped manifest | Claim resolver log plus manifest diff per role |
| ISO/IEC 27001 A.8.3 (information access restriction) | Restrict access per business need | Per-user manifest, managed MCP allowlist, role-scoped plugin catalogue | Manifest version diff, managed_mcp_servers snapshot at evidence date |
| ISO/IEC 27001 A.8.25 (secure development lifecycle) | Controls on artefacts the system executes | Ed25519 manifest signing per RFC 8032, SHA-256 per-file pinning, atomic stage-then-rename | Signature verification log from binary, staging hash log |
| HIPAA §164.312(a)(1) (access control) | Unique user identification, emergency access | x-user-id on every call, mTLS device cert for PHI-handling roles, sub-sixty-second PAT revocation | audit_events.user_id, CRL entry timestamp vs denied-row timestamp |
| HIPAA §164.312(b) (audit controls) | Record and examine activity | audit_events with prompt/response payloads, JSON SIEM export | SQL extract filtered to ePHI tenant, SIEM retention window proof |
| HIPAA §164.312(c)(1) (integrity) | Protect ePHI from improper alteration | Column-level encryption with enterprise KMS, database backup integrity | KMS audit log for decrypt operations, backup integrity report |
| HIPAA §164.312(e)(1) (transmission security) | Guard against unauthorised access in transit | TLS 1.3 on the gateway, mTLS for device-bound auth | TLS config snapshot, mTLS cert fingerprint claim in JWT |
| EU AI Act Art. 9 (risk management) | Risk management system for high-risk AI | Policy bundle plus x-policy-version on every call, routing bound to risk tier | audit_events.policy_ver distribution, policy-bundle diff log |
| EU AI Act Art. 12 (record-keeping) | Automatically generated logs for traceability | Full audit row before response, trace-ID join across tool calls | Single-query lineage export, six-month retention SQL check |
| EU AI Act Art. 15 (accuracy, robustness, cybersecurity) | Resilience and protection against manipulation | Governance pipeline scope and secret scanning, Ed25519 supply chain | Denied-call distribution with rule ID, signature verification log |
| NIST AI RMF GOVERN-1 | AI risk management integrated into enterprise | Three-team ownership with documented interfaces | RACI chart, change-management ticket log |
| NIST AI RMF MAP-5 | Risks to individuals and society identified | Per-user audit lineage, per-tenant data boundary | Lineage export, tenant-scoped audit query |
| NIST AI RMF MEASURE-2 | Test sets and metrics for AI risks | Policy bundle evaluation logs, per-rule denied counts | Policy evaluation log aggregated by rule ID |
| OWASP Agentic A1 (direct prompt injection) | Mitigate malicious instructions in prompts | Governance pipeline scope, secrets, blocklist before dispatch | Denied-call row with pipeline rule ID in payload |
| OWASP Agentic A5 (supply chain) | Compromised extensions or plugins | Ed25519-signed manifest, atomic rename, revocation list | Signature verification log, revocation manifest diff |
| OWASP Agentic A7 (unauthorised actions) | Agent taking actions beyond user intent | Managed MCP allowlist enforced at gateway and client | Denied MCP-exec row, allowlist snapshot |
| OWASP Agentic A9 (human oversight mechanisms bypass) | Agents circumventing human review | Scoped JWT plus policy bundle, explicit agent session source | call_source = 'subagent' rows, policy-version distribution |

Every row is a question an auditor will ask and an answer the architecture already produces. The compliance evidence pack is a set of SQL queries, file diffs, and config snapshots. None of it requires a feature request to the AI vendor. None of it requires an integration the enterprise does not already run.

The specific evidence an auditor wants changes by engagement. The shape does not. A SOC 2 Type II review asks for system-generated evidence across a period. SQL queries filtered by date range produce it. An ISO 27001 Stage 2 audit asks for sampled evidence against specific controls. Lineage queries sampled at random dates produce it. An EU AI Act conformity assessment asks for technical documentation and logs. The documentation is the gateway config and manifest. The logs are audit_events. A HIPAA security risk analysis asks for evidence that access controls and audit controls are in place and effective. Revocation drills and lineage queries produce it.

An RFP from a Fortune 500 Buyer

An RFP that hits your desk in 2026 from a Fortune 500 buyer looking at Claude Cowork plugins on self-hosted infrastructure will run fifty to eighty questions. The heavy ones are the fourteen in the FAQ at the bottom of this page. The rest are variants and follow-ups. Here is how the substantive themes actually break down, in the order they arrive.

Security posture

The first block of questions is security posture. How is the control plane authenticated. Where the cryptographic material sits. What the revocation path is. What happens on compromise. What the incident-response time is. Whether the implementation has been penetration-tested. Whether there is an SBOM. Whether the supply chain is signed.

The answers to all of these are in the architecture above. Authentication is JWT-backed with three tiers. Signing material sits in HSM or KMS. Revocation takes effect within one TTL window, configurable down to five minutes. Compromise of a gateway that does not hold the signing key cannot push tampered plugins, because the clients will reject an unsigned or incorrectly signed manifest. Incident response is a SQL query plus a policy or manifest push. Penetration testing is a vendor contract line the enterprise owns. SBOM and supply-chain signing follow from the gateway implementation the enterprise ships. None of these answers depend on the AI vendor.

Data residency and processing

The second block is residency. Where prompts live at rest. Where they live in flight. What claims are sent upstream. What contractual commitments govern each. What evidence is produced to prove residency to a regulator.

Prompts at rest live in the enterprise audit_events table, inside the enterprise VPC, encrypted with the enterprise KMS key. In flight, prompts travel over TLS 1.3 from the client to the gateway and over whatever transport the gateway uses to reach the upstream. What goes upstream is the model prompt and the requested model name; identity headers are stripped before forwarding. Contractual commitments for the upstream are the enterprise's existing agreements with Bedrock, Vertex, Azure Foundry, or whichever cloud is routed to. If those agreements are inadequate, the route changes to an in-perimeter upstream. Evidence is a SQL query over the provider column for the audit window.
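Header stripping is a case-insensitive filter applied before forwarding. This sketch names only x-user-id, x-call-source, and x-policy-version, the identity headers that appear on this page. The full set of seven is not listed here, so the set is a placeholder to complete. Dropping the client's `authorization` header is also an assumption: the gateway would attach its own upstream key from the secrets file instead.

```python
# Placeholder set: complete it with the gateway's actual identity headers.
IDENTITY_HEADERS = {"x-user-id", "x-call-source", "x-policy-version", "authorization"}

def upstream_headers(inbound):
    """Copy inbound headers minus identity headers (HTTP names are case-insensitive)."""
    return {k: v for k, v in inbound.items() if k.lower() not in IDENTITY_HEADERS}
```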

Commercial terms

The third block is commercial. What is the total cost of ownership. What is the per-seat cost. What is the per-call cost. What the rate-card flexibility is. What the contract exit terms are. Whether there is price protection against upstream changes.

Total cost is one Cowork seat per user plus wholesale inference at the enterprise's chosen upstream. Per-seat cost is what Anthropic charges for the Cowork client. Per-call cost is the upstream's token price times the tokens used, visible in audit_events.cost_micro to the microdollar. Rate-card flexibility comes from the existing upstream contracts the enterprise has negotiated. Contract exit is a route-config edit away; the audit data and policy bundles stay with the enterprise. Price protection against upstream changes comes from reserved-capacity contracts and multi-provider routing, which the gateway supports natively.

Working the commercial case in detail is worth doing before the RFP deadline. Take a five-thousand-developer organisation at a realistic call profile: fifty calls per developer per day, two-hundred-token average input, eight-hundred-token average output. Over a thirty-day month that produces roughly seven and a half million calls and roughly seven and a half billion tokens. At 2026 list rates for Sonnet-class capability, that is a seven-figure annual bill regardless of provider. For the same model family at volume, cheapest-available wholesale capacity typically undercuts direct-Anthropic list price by between 15% and 35%. Examples include Bedrock reservations for Sonnet at volume and Azure enterprise Sonnet equivalents. A routing architecture that captures most of that spread saves a comparable share of the annual inference bill against a baseline of per-seat hosted AI, and the absolute saving grows with call volume and context size. Finance will run this calculation themselves. The architecture just has to let them.
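Running the numbers for this profile explicitly is worthwhile. This sketch assumes a thirty-day month and illustrative Sonnet-class list prices of $3 per million input tokens and $15 per million output tokens. Substitute your contracted or published rates. The last line applies the 15% and 35% spread quoted above to the annualised figure.

```python
def monthly_inference_cost(devs, calls_per_day, in_tokens, out_tokens,
                           usd_per_m_in, usd_per_m_out, days=30):
    """Return (calls, tokens, usd) per month for a flat per-call profile."""
    calls = devs * calls_per_day * days
    per_call_micro_usd = in_tokens * usd_per_m_in + out_tokens * usd_per_m_out
    usd = calls * per_call_micro_usd / 1_000_000
    return calls, calls * (in_tokens + out_tokens), usd

# Assumed rates: $3 per million input tokens, $15 per million output tokens.
calls, tokens, usd = monthly_inference_cost(5_000, 50, 200, 800, 3.0, 15.0)
print(calls, tokens, round(usd))                        # 7500000 7500000000 94500
print(round(usd * 12 * 0.15), round(usd * 12 * 0.35))  # 170100 396900
```

Output tokens dominate the bill under these assumptions, which is why routing decisions are usually made per model family rather than per request.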

Compliance and audit

The fourth block maps to the compliance table above. SOC 2 evidence. ISO 27001 evidence. HIPAA evidence if the workload touches PHI. EU AI Act evidence if any covered business unit operates in the EU. Sector-specific evidence for financial services, healthcare, or utilities.

Each has a row in the table. Each row has an audit artefact. The RFP answer for each control is "here is the SQL query that produces the evidence, here is a redacted sample output from our staging environment, here is the retention period". Three artefacts per control. The compliance team runs the same three for every framework they support. Preparing the evidence pack is a one-time exercise; producing it for a given audit is a parameter change.
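A minimal sketch of the three artefacts for one control, using an in-memory SQLite table as a stand-in for audit_events. The three-column schema, the evidence query, the HMAC-based pseudonymisation, and the retention figure are all assumptions for illustration, not the gateway's actual schema or an auditor-approved procedure.

```python
import hashlib
import hmac
import sqlite3

# Hypothetical evidence query: which upstream providers served traffic
# inside the audit window. Timestamps are ISO-8601 strings, so string
# comparison orders them correctly.
EVIDENCE_SQL = """
    SELECT provider, COUNT(*) AS calls
    FROM audit_events
    WHERE ts >= :start AND ts < :end
    GROUP BY provider
    ORDER BY provider
"""

# The raw rows behind the summary, pulled for the redacted sample.
SAMPLE_SQL = """
    SELECT ts, user_id, provider
    FROM audit_events
    WHERE ts >= :start AND ts < :end
    ORDER BY ts
    LIMIT :limit
"""


def pseudonym(user_id: str, key: bytes) -> str:
    """Keyed hash: stable per user, not reversible by hashing a staff list."""
    return hmac.new(key, user_id.encode(), hashlib.sha256).hexdigest()[:12]


def evidence_pack(conn: sqlite3.Connection, start: str, end: str,
                  retention_days: int, key: bytes, limit: int = 5) -> dict:
    """Query, redacted sample, and retention period for one control."""
    window = {"start": start, "end": end}
    rows = conn.execute(SAMPLE_SQL, {**window, "limit": limit}).fetchall()
    return {
        "query": EVIDENCE_SQL.strip(),
        "summary": conn.execute(EVIDENCE_SQL, window).fetchall(),
        "redacted_sample": [(ts, pseudonym(uid, key), provider)
                            for ts, uid, provider in rows],
        "retention_days": retention_days,
    }


def demo_connection() -> sqlite3.Connection:
    """In-memory stand-in for audit_events with a few staging rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE audit_events (ts TEXT, user_id TEXT, provider TEXT)")
    conn.executemany("INSERT INTO audit_events VALUES (?, ?, ?)", [
        ("2026-04-01T09:00:00Z", "alice", "bedrock"),
        ("2026-04-01T09:05:00Z", "bob", "anthropic"),
        ("2026-04-02T10:00:00Z", "alice", "bedrock"),
        ("2026-05-01T00:00:00Z", "carol", "vertex"),  # outside the window
    ])
    return conn


pack = evidence_pack(demo_connection(), "2026-04-01", "2026-05-01",
                     retention_days=400,  # placeholder; use your policy's figure
                     key=b"placeholder-key")
print(pack["summary"])  # [('anthropic', 1), ('bedrock', 2)]
```

Changing the audit window is the parameter change: the query text, the redaction rule, and the retention statement stay the same from one audit to the next.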

Operational readiness

The fifth block is ops. Uptime commitments. Disaster recovery. Backup and restore. Performance SLOs. Monitoring and alerting. Incident-response SLAs. Training and change management.

The gateway is an internal HTTP service. Its ops profile is whatever your existing tier-1 services deliver. Uptime commitments are whatever the enterprise's standard is for internal services, typically 99.95% or 99.99%. DR is multi-AZ active/active with a documented failover runbook. Backup and restore is standard Postgres with point-in-time recovery on the audit_events table. Performance SLOs are median latency under two hundred milliseconds on the gateway leg and P99 under a second. Monitoring is the enterprise standard. Training is a runbook and a one-hour onboarding session for operators. Change management is the enterprise pipeline the AI platform team already uses.
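The ops answers are checkable numbers. A minimal sketch of the latency SLO and the monthly downtime budget implied by an availability target, assuming latency samples in milliseconds for the gateway leg and a 30-day month. Nearest-rank is one of several percentile definitions, and your monitoring stack may use another; the function names are illustrative.

```python
import math


def nearest_rank(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it."""
    if not samples_ms:
        raise ValueError("no latency samples")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_latency_slo(samples_ms: list[float],
                      p50_limit_ms: float = 200.0,
                      p99_limit_ms: float = 1000.0) -> bool:
    """Median under 200 ms and P99 under one second on the gateway leg."""
    return (nearest_rank(samples_ms, 50) < p50_limit_ms
            and nearest_rank(samples_ms, 99) < p99_limit_ms)


def error_budget_minutes(availability: float, days: int = 30) -> float:
    """Minutes of downtime an availability target allows over `days`."""
    return days * 24 * 60 * (1 - availability)


samples = [float(ms) for ms in range(1, 101)]  # 1 ms .. 100 ms
print(meets_latency_slo(samples))               # True
print(round(error_budget_minutes(0.9995), 1))   # 21.6
print(round(error_budget_minutes(0.9999), 2))   # 4.32
```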

Roadmap and support

The sixth block is roadmap. What the vendor commits to. How new Anthropic features land. How new regulations are accommodated. How the enterprise's own engineers can contribute. What the long-term support posture is.

Source-available licensing on the gateway implementation means the enterprise's own engineers can contribute, fork, or extend. New Anthropic features land through the vendor's release pipeline, which moves monthly. New regulations are accommodated through policy-bundle updates and routing changes, which the enterprise controls. Long-term support is a contract line. The ultimate continuity guarantee is the source-available code, which the enterprise retains and can continue to run internally if the vendor relationship ends. This answers the lock-in question procurement always asks and rarely hears answered well by hosted-AI vendors.

Cost Model and Procurement

Most Fortune 500 AI programmes bought AI at seat-level pricing through 2023, 2024, and 2025. The invoices looked like enterprise SaaS invoices. They have not aged well. At fleet scale, the inference cost underneath the seat price compounds with adoption in a way a flat seat price does not capture, and procurement ends up renegotiating the contract every eighteen months because the assumptions inside it keep shifting.

Self-hosted Cowork changes the procurement motion. The architecture has two cost streams and they are negotiated separately. The client stream is the Cowork seat from Anthropic. The inference stream is whatever provider and contract the gateway routes to. Procurement negotiates each on its own cycle, with its own leverage.

Inference pricing now follows established cloud procurement patterns. Bedrock offers provisioned-throughput contracts for commit-and-save arrangements. Vertex has committed-use discounts. Azure has enterprise agreements that roll AI into the larger MACC commitment. On-prem GPU capacity is straightforward capex and opex. The AI team picks the upstream mix, the finance team negotiates the contracts, and the gateway routes accordingly. This is the same pattern enterprise cloud spend follows. It is familiar to procurement. It has a playbook.

Seat pricing is negotiated on the Cowork client itself, which is the smaller line item at fleet scale. Anthropic's enterprise terms on the client cover SSO integration, support tiers, and usage reporting at the per-user level. These are negotiated once a year through the normal vendor-management cycle.

Running both streams separately produces visible cost advantages at scale because each stream's price dynamic is different. Seats are a function of headcount and grow linearly. Inference is a function of usage and grows with adoption, but moves in both directions with model price changes and routing decisions. Separating them lets finance model each independently. Bundled per-seat AI pricing cannot be modelled this way because the underlying unit economics are hidden.

A realistic 5,000-developer organisation in early 2026 looking at its AI spend sees three options. Bundled per-seat hosted AI, at whatever the vendor is charging that quarter. Split architecture with wholesale inference, at whichever provider mix the enterprise negotiated. Full on-prem inference for regulated or sensitive workloads with wholesale for general use. The second and third options are only available under a self-hosted gateway architecture. The first was the only option through most of 2024 and 2025 and is not cost-competitive at fleet scale in 2026.

Illustrative twelve-month cost comparison for a 5,000-developer organisation at fifty calls per developer per weekday. Assumes two hundred input tokens and eight hundred output tokens per call, Sonnet-class model family, and public-rate inputs as of April 2026. Actual enterprise commitments typically produce fifteen to thirty-five percent improvement against list rates.

| Architecture | Seat cost (per user / year) | Inference cost basis | Per-call cost (USD) | Annual inference | Total annual | Notes |
|---|---|---|---|---|---|---|
| Bundled hosted AI (vendor seat bundles inference) | $600 | Included in seat | n/a | Included | $3,000,000 | No cost-per-call visibility, no multi-provider routing, no per-department chargeback |
| Gateway + Anthropic direct (wholesale) | $240 | Anthropic Messages API list | $0.0054 | $780,000 | $1,980,000 | Routing flexibility available but only one upstream used |
| Gateway + Bedrock reserved | $240 | Bedrock provisioned throughput at enterprise commit | $0.0041 | $590,000 | $1,790,000 | Reserved capacity caps unit cost; peak handled through on-demand failover |
| Gateway + multi-provider routing | $240 | Bedrock for Sonnet-heavy, Anthropic direct for Opus-heavy | $0.0037 weighted | $533,000 | $1,733,000 | Picks cheapest upstream per call; preserves Opus access for high-reasoning paths |
| Gateway + on-prem Llama 70B for internal + Bedrock for external | $240 | On-prem capex amortised + Bedrock for off-cluster | $0.0024 weighted | $346,000 | $1,546,000 | Only viable once capex is committed; regulated workloads stay in perimeter |

The table is not a commitment of savings. It is a shape. The salient comparison is the first row against the last. Every step down between them represents an architectural choice the enterprise can only make once the gateway sits in the request path. The first architecture has exactly one lever, which is the seat count. The last architecture has every lever that matters for AI cost, and all of them are under the enterprise's control.
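The Total annual column is the sum of two independent streams. A short script using only the table's own figures makes that split explicit and gives finance one place to change the seat price or the inference estimate; the row labels are abbreviated.

```python
SEATS = 5_000

# (architecture, seat USD per user per year, annual inference USD), copied
# from the comparison table. Bundled hosted AI folds inference into the seat.
ROWS = [
    ("Bundled hosted AI", 600, 0),
    ("Gateway + Anthropic direct", 240, 780_000),
    ("Gateway + Bedrock reserved", 240, 590_000),
    ("Gateway + multi-provider routing", 240, 533_000),
    ("Gateway + on-prem Llama 70B + Bedrock", 240, 346_000),
]


def total_annual(seat_usd: int, inference_usd: int, seats: int = SEATS) -> int:
    """Seat stream scales with headcount; inference stream scales with usage."""
    return seats * seat_usd + inference_usd


baseline = total_annual(ROWS[0][1], ROWS[0][2])
for name, seat_usd, inference_usd in ROWS:
    total = total_annual(seat_usd, inference_usd)
    print(f"{name:<40} ${total:>10,}   saving vs bundled ${baseline - total:>10,}")
```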

Procurement also gets vendor-independence optionality at zero marginal cost. The gateway's route config can swap Bedrock for Vertex in a deployment. It can add a new provider without a client change. It can pull regulated workloads onto on-prem capacity during a specific audit period. Each of those options has value, and in most enterprises the value of optionality against a multi-year AI contract exceeds the engineering cost of the gateway itself.
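A minimal sketch of what a route config and its selection rule could look like, assuming each upstream entry lists the models it serves with an estimated per-call price and a flag for in-perimeter capacity. The structure, names, and prices are hypothetical, not the gateway's documented config format. The rule picks the cheapest permitted upstream and fails closed when a regulated request has no in-perimeter option.

```python
from dataclasses import dataclass, replace
from typing import Mapping


@dataclass(frozen=True)
class Upstream:
    name: str
    usd_per_call: Mapping[str, float]  # model -> estimated cost per call
    in_perimeter: bool                 # True for on-prem capacity
    enabled: bool = True


# Hypothetical route config. Names, models, and prices are placeholders.
ROUTES = (
    Upstream("bedrock", {"sonnet": 0.004}, in_perimeter=False),
    Upstream("anthropic", {"sonnet": 0.005, "opus": 0.02}, in_perimeter=False),
    Upstream("onprem", {"llama-70b": 0.002}, in_perimeter=True),
)


def choose_upstream(model: str, regulated: bool, routes=ROUTES) -> Upstream:
    """Cheapest enabled upstream serving `model`; regulated traffic is
    restricted to in-perimeter capacity and fails closed otherwise."""
    candidates = [
        r for r in routes
        if r.enabled and model in r.usd_per_call
        and (r.in_perimeter or not regulated)
    ]
    if not candidates:
        raise LookupError(f"no permitted upstream for {model!r}")
    return min(candidates, key=lambda r: r.usd_per_call[model])


# Swapping or disabling a provider is a config change, not a client change.
no_bedrock = tuple(replace(r, enabled=False) if r.name == "bedrock" else r
                   for r in ROUTES)
print(choose_upstream("sonnet", regulated=False).name)           # bedrock
print(choose_upstream("sonnet", False, routes=no_bedrock).name)  # anthropic
print(choose_upstream("llama-70b", regulated=True).name)         # onprem
```

Failing closed is the deliberate choice here: a regulated request for a model that only exists outside the perimeter raises an error rather than quietly leaving it.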

Ninety Days

An executive sponsor committing to a rollout date needs a ninety-day plan with specific milestones and clear exit gates. The plan below is calibrated for a five-thousand-developer organisation with existing MDM, existing IdP, existing SIEM, and at least one regulated business unit. Scaling smaller or larger from this baseline is straightforward.

Weeks 1 to 2. Staging gateway live, routing to Anthropic direct only. Pilot cohort of eight to twelve volunteer engineers on PAT auth. Credential helper deployed locally. audit_events receiving writes. SIEM ingestion validated end-to-end. First revocation drill run and documented. Exit gate: zero unexplained errors in pilot traffic, latency within the gateway SLO (median under two hundred milliseconds, P99 under a second).

Weeks 3 to 6. Production gateway live behind load balancer. MDM pilot on pilot-cohort machines through Intune for Mac and Intune for Windows. Session-cookie auth tier enabled. Signed manifest live with one internal plugin the cohort actually wants. MCP allowlist initialised with the security-approved server set. Exit gate: manifest sync working on all pilot devices, MCP allowlist enforced, sample lineage query reviewed by compliance.
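What "MCP allowlist enforced" means at the gateway can be sketched as an exact match on scheme, host, and port, assuming MCP servers are identified by URL. The allowlist entries are hypothetical, and a production check would also need to cover locally launched (stdio) servers, which this URL-based sketch does not.

```python
from urllib.parse import urlsplit

# Hypothetical security-approved MCP servers as (scheme, host, port).
ALLOWLIST = frozenset({
    ("https", "mcp.internal.example.com", 443),
    ("https", "tickets.example.com", 8443),
})

DEFAULT_PORTS = {"https": 443, "http": 80}


def is_allowed(server_url: str, allowlist=ALLOWLIST) -> bool:
    """Exact match on scheme, host, and effective port; anything else is denied."""
    parts = urlsplit(server_url)
    try:
        port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    except ValueError:  # non-numeric or out-of-range port
        return False
    # urlsplit lowercases the scheme and .hostname lowercases the host.
    return (parts.scheme, parts.hostname, port) in allowlist


print(is_allowed("https://mcp.internal.example.com/sse"))               # True
print(is_allowed("https://mcp.internal.example.com.attacker.net/sse"))  # False
print(is_allowed("http://mcp.internal.example.com/sse"))                # False
```

Exact tuple matching avoids the suffix and prefix tricks that substring checks on URLs invite.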

Weeks 7 to 10. First ring rollout. One full department, fifty to one hundred and fifty engineers, MDM profile pushed. mTLS tier enabled for the regulated business unit. Second upstream provider enabled on the gateway (Bedrock, Vertex, or Azure Foundry). First real agent in the manifest bound to a skill and MCP server set. Exit gate: error rate below 0.5% across the ring, SOC signs off on a week of subagent traffic, cost report from audit_events delivered to finance.
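The first-ring exit gate is mechanical enough to automate. A minimal sketch, assuming the request and error counts come from audit_events for the ring's week of traffic and the two sign-offs are recorded as booleans; the type and field names are hypothetical.

```python
from dataclasses import dataclass

MAX_ERROR_RATE = 0.005  # first-ring exit gate: error rate below 0.5%


@dataclass(frozen=True)
class RingWeek:
    """One week of ring traffic plus the two sign-offs the gate needs."""
    requests: int
    errors: int
    soc_signed_off: bool
    finance_report_delivered: bool


def exit_gate_failures(week: RingWeek) -> list[str]:
    """Reasons the ring cannot exit; an empty list means the gate passes."""
    failures = []
    if week.requests == 0:
        failures.append("no traffic recorded for the ring")
    else:
        rate = week.errors / week.requests
        if rate >= MAX_ERROR_RATE:
            failures.append(f"error rate {rate:.2%} is not below 0.5%")
    if not week.soc_signed_off:
        failures.append("SOC sign-off on subagent traffic missing")
    if not week.finance_report_delivered:
        failures.append("cost report from audit_events not delivered to finance")
    return failures


print(exit_gate_failures(RingWeek(20_000, 60, True, True)))  # []
print(exit_gate_failures(RingWeek(20_000, 100, True, False)))
```

Returning the reasons rather than a bare boolean gives the sponsor a list to act on when a gate fails.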

Weeks 11 to 12. Remaining rings cut over. Direct API access revoked on the Anthropic side. Compliance evidence pack produced and walked through with internal audit. External auditor briefed on the rollout for the next interim review. Runbook handed off to the ops rotation. Exit gate: every MDM-enrolled laptop pointing at the gateway, zero client-side support tickets from cutover, finance dashboard showing per-developer and per-provider spend.
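The finance dashboard in the cutover exit gate is an aggregation over audit_events. A minimal in-memory sketch, assuming each row carries a developer identifier, a provider name, and an integer cost_micro; the field names are assumptions, and in production this would be a GROUP BY in the warehouse feeding the dashboard rather than a Python loop.

```python
from collections import defaultdict
from typing import Iterable, Mapping


def spend_rollup(events: Iterable[Mapping]) -> tuple[dict, dict]:
    """Sum cost_micro per developer and per provider.

    Totals stay in integer microdollars; convert to dollars only for display.
    """
    by_developer = defaultdict(int)
    by_provider = defaultdict(int)
    for event in events:
        by_developer[event["user_id"]] += event["cost_micro"]
        by_provider[event["provider"]] += event["cost_micro"]
    return dict(by_developer), dict(by_provider)


events = [
    {"user_id": "alice", "provider": "bedrock", "cost_micro": 4_200},
    {"user_id": "alice", "provider": "anthropic", "cost_micro": 5_400},
    {"user_id": "bob", "provider": "bedrock", "cost_micro": 4_100},
]
per_dev, per_provider = spend_rollup(events)
print(per_dev)       # {'alice': 9600, 'bob': 4100}
print(per_provider)  # {'bedrock': 8300, 'anthropic': 5400}
```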

Post-rollout. Catalogue curation cadence established. Routing cost tuning cadence established. Quarterly revocation drill scheduled. Quarterly compliance evidence refresh scheduled. Annual key rotation scheduled on the PKI programme's existing cycle.

The ninety days assume a steady-state enterprise with working MDM, IdP, and SIEM. Organisations missing one of those run those rollouts first; this plan does not invent them. Organisations with more complex topology (multi-region, multi-tenant, multi-sovereignty) add ring phases but the shape does not change. The decision points on the plan are the pilot exit gate and the first-ring exit gate. Everything else is a multiple of those.

Where to Go Next

Three technical references sit underneath this page. Read them in order of what your team needs now.

Claude Cowork Deployment reference is the platform-agnostic technical document. Architecture, auth tiers, signed manifest, audit schema, routing table. Read this when you need to explain the architecture to another technical stakeholder without repeating the business case.

Install on macOS and Install on Windows are the step-by-step rollout guides. They cover .mobileconfig payloads, Jamf policies, Intune profiles, registry hives, Group Policy ADMX, Task Scheduler templates, launchd templates, and troubleshooting. Both cross-link to the Anthropic support articles that define the upstream contract.

Gateway Service is the endpoint and helper-binary reference. /v1/messages, /v1/auth/cowork/*, provider routing, MCP allowlist routes, the credential-helper stdout contract, the CLI surface. Read this when you are standing up the gateway itself.

The RFP question bank is in the FAQ at the bottom of this page. Each answer points at a concrete artefact your evaluation team can exercise in the first week of a proof of concept.