Quick answer

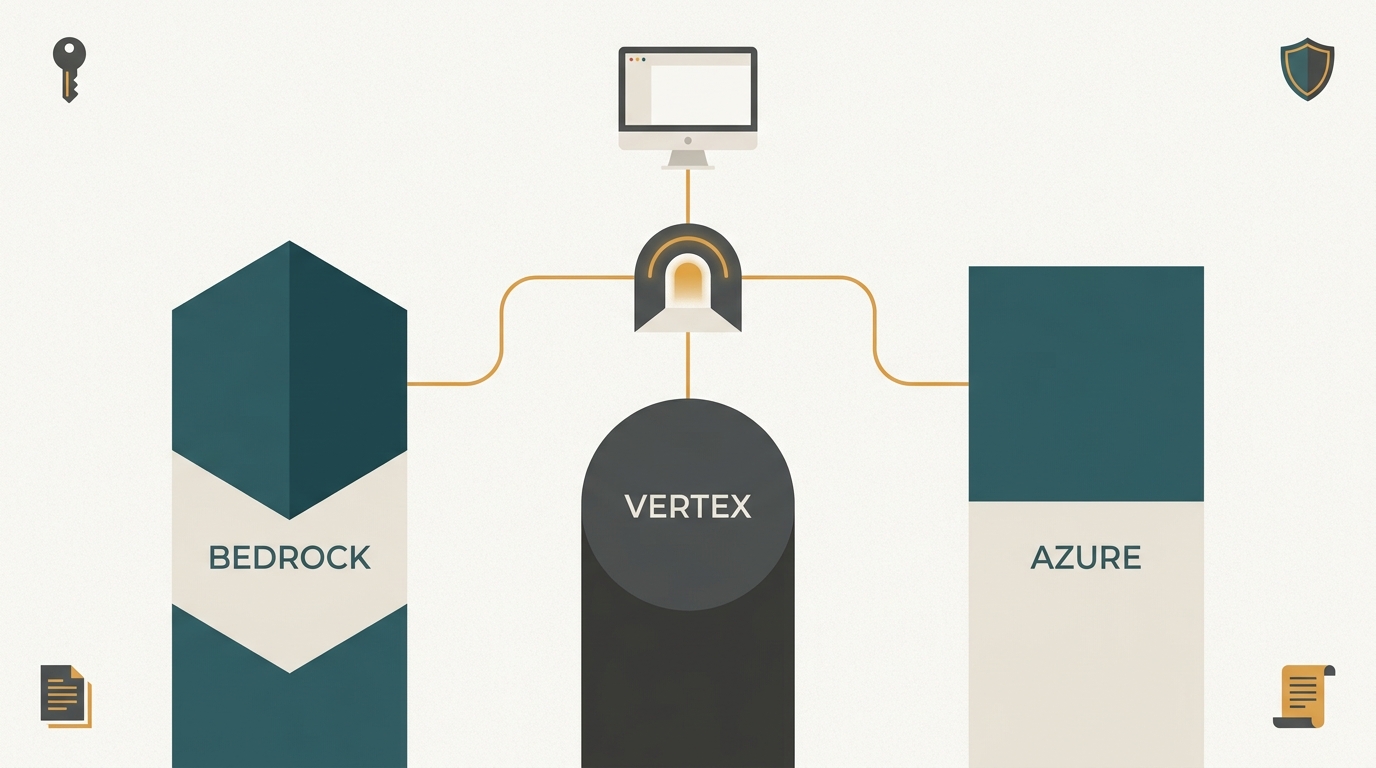

Claude Cowork is a desktop runtime, not a chat product. In April 2026 Anthropic shipped a configuration that lets the enterprise choose which /v1/messages-compatible endpoint serves inference (Amazon Bedrock, Google Vertex AI, Azure AI Foundry, Anthropic direct, or a self-hosted gateway) instead of being fixed to Anthropic's hosted API, while preserving the plugin, MCP allowlist, and MDM policy controls.

Four control surfaces define every Cowork deployment:

- The LLM gateway the desktop agent calls for inference.

- The MCP allowlist in the MDM policy that gates which tool servers may load.

- The org-plugins folder mounted by MDM that pins approved extensions onto every laptop.

- The MDM policy file itself, which carries the other three settings plus identity, telemetry, and per-tenant overrides.

The gateway is the first decision and the load-bearing one. Every other surface is downstream of it. The rest of this guide is the orientation a CTO, cloud architect, or security lead needs to make that call and to plan a 90-day rollout that survives procurement.

If you have already chosen the path and need a deployment reference with full MDM payloads, JWT headers, signed-manifest workflows, and audit-table schemas, the companion piece /guides/claude-cowork-plugins-enterprise is the playbook. This page is the orientation.

What changed in April 2026

On 2026-04-22 Anthropic published three support articles documenting Claude Cowork against third-party inference platforms: 14680729 on the gateway and inference contract, 14680741 on plugins and MCP servers, and 14680753 on the MDM policy reference. Read together, they describe a single shift: the enterprise stops being a tenant of Anthropic's API and starts being the operator of its own AI platform, with Anthropic as one of several model providers behind it.

This sounds like a small configuration change. It is not. It moves the inference boundary, the audit boundary, the cost-attribution boundary, and the data-loss-prevention boundary from Anthropic's perimeter into the enterprise's perimeter. Every control that an enterprise governance team already owns for its other production systems (identity, audit, secret scanning, rate limits, regional residency, cross-provider failover) now applies to AI inference too, provided the deployment is wired up to use them.

The April 2026 change explains why Cowork suddenly matters to buyers who had previously written it off as "another SaaS chat product." It is no longer a SaaS chat product. It is a desktop runtime that connects to the enterprise's own AI infrastructure.

What Claude Cowork actually is

Claude Cowork is a desktop runtime. It runs as a long-lived agent process on the employee's laptop. The runtime ships with:

- Persistent task context that survives across files, applications, and sessions on the local machine.

- Tool use through MCP servers, the same Model Context Protocol used by Claude Code, but wired into the desktop OS instead of the terminal.

- Plugins, which are signed, versioned bundles that ship custom skills, prompts, and integrations to every employee through the org-plugins channel.

- An admin surface controlled by an MDM policy file that pins the inference gateway, the MCP allowlist, the org-plugins folder, and a long list of safety knobs (clipboard access, network reach, file paths, telemetry destinations).

This is the load-bearing distinction: Claude Code is for engineers, Claude Cowork is for everyone else. Analysts, lawyers, ops, finance, customer service. Running on their own laptops. With the security team in charge of what the runtime can do and which extensions it can load.

The desktop nature matters. A SaaS chat product never touches the enterprise endpoint. A desktop runtime does. It reads files, calls local applications, talks to internal services on the corporate network, and lives inside the existing MDM and EDR control plane. Treating Cowork like a SaaS app is the most common deployment mistake.

The four control surfaces

Every Cowork deployment decision lives in one of four places. Get these four right and the rest is operational detail. Get them wrong and the deployment is a fleet of unmanaged AI agents with file access.

| Surface | What it controls | What it prevents | Owned by |
|---|---|---|---|
| LLM gateway | Which /v1/messages endpoint Cowork calls for inference | Inference traffic going to a vendor the enterprise has not approved | Platform engineering, finance |
| MCP allowlist | Which MCP server identifiers Cowork is permitted to load | Employees connecting Cowork to unsanctioned tool servers and exfiltrating data through tool calls | Security architecture |
| org-plugins folder | Which plugins are pinned on every laptop, by signing key and minimum version | Removal of approved plugins by users; installation of unsigned or stale plugins | Security operations |
| MDM policy file | The other three plus identity, telemetry, network egress, clipboard reach, file scope, per-tenant overrides | Deployment drift between laptops; configuration that lives in someone's head | Endpoint engineering |

The four surfaces are not equally weighted. The gateway is the first decision and shapes everything else. The MCP allowlist is the most consequential single line of policy in the MDM file. The org-plugins folder is the supply chain. The MDM policy file is the version-controlled artefact that ties them together.

Surface 1: the LLM gateway

The MDM policy specifies the URL Cowork sends inference requests to, plus the auth method. Until April 2026 this had to be api.anthropic.com. Now it can be any of:

- Amazon Bedrock. Claude on Bedrock's Anthropic-compatible Messages endpoint, documented in the Bedrock model parameters reference. IAM auth, CloudTrail audit, AWS billing.

- Google Vertex AI. Claude on Vertex via the Anthropic partner integration. Service-account auth, GCP audit logs, regional endpoints for EU data residency.

- Azure AI Foundry. Claude on Foundry's models-as-a-service tier, documented in the Foundry models overview. Entra ID auth, Purview audit integration, unified billing for Microsoft 365 customers.

- Anthropic direct. The original path. Still the simplest if there are no compliance constraints.

- A self-hosted gateway. A service the enterprise operates that fronts one or more of the above. This is the surface where governance, audit normalisation, and cost control become genuinely enterprise-grade.

Pick the gateway path before anything else. Every other decision flows from it.

Surface 2: the MCP allowlist

The MDM policy lists which MCP server identifiers Cowork is allowed to load. Anything not in the list is refused, even if the user manually configures it in their personal settings. Glob patterns are supported (com.example.* matches any MCP server with that prefix).
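The matching rule described above can be sketched in a few lines. This is a minimal illustration, assuming the allowlist uses shell-style glob semantics comparable to Python's `fnmatch`; the exact matching rules Cowork applies are not specified here, and the identifiers in `ALLOWLIST` are hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical allowlist; the identifiers are illustrative only.
ALLOWLIST = ["com.example.*", "io.internal.tickets"]


def is_allowed(server_id: str, allowlist: list[str]) -> bool:
    """Return True if server_id matches at least one allowlist pattern.

    Anything that matches no pattern is refused (default deny).
    """
    return any(fnmatchcase(server_id, pattern) for pattern in allowlist)
```

Under these assumptions, `is_allowed("com.example.crm", ALLOWLIST)` is true and `is_allowed("com.evil.crm", ALLOWLIST)` is false. Case-sensitive matching is used deliberately so that the result does not vary by operating system.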

The allowlist is the difference between two states of the world. In one, employees can connect Claude to anything (internal services, third-party SaaS APIs, personal tools) and the security team has no visibility. In the other, employees can connect Claude to the exact set of tool servers the security team has reviewed. For most organisations the starter policy is a tiny allowlist of internal MCP servers plus two or three trusted vendors, expanded by request through the existing application-approval process.

If only one line of the MDM policy gets reviewed by the security team, it should be this one.

Surface 3: the org-plugins folder

Cowork reads plugins from org-plugins/ (an MDM-mounted, read-only folder) in addition to the user's personal plugins. Plugins in org-plugins/ are pinned and cannot be removed. This is where the security team ships custom skills, prompt libraries, internal-tool integrations, and approved third-party extensions.

Plugins are signed. The MDM policy specifies allowed signing keys and minimum versions. Revocation is centralised: revoke a signing key in the MDM policy and every laptop drops the plugin within a single MDM sync cycle, typically 30 to 60 minutes depending on the platform. Treat the signing-key list as a secrets-management asset; treat the minimum-version field as a kill switch.
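To make the two levers concrete, the load decision might look roughly like the sketch below. It assumes dotted numeric version strings and treats a plugin with no pinned minimum as having no version floor; the `Plugin` type, the key identifiers, and the `may_load` helper are all hypothetical and do not describe Cowork's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plugin:
    name: str
    version: str          # dotted numeric, e.g. "1.4.2" (assumed format)
    signing_key_id: str   # hypothetical identifier of the signing key


def parse_version(version: str) -> tuple[int, ...]:
    """Turn "1.4.2" into (1, 4, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def may_load(plugin: Plugin, allowed_keys: set[str],
             min_versions: dict[str, str]) -> bool:
    """Apply both policy levers: signing-key allowlist, then version floor."""
    if plugin.signing_key_id not in allowed_keys:
        return False  # revoking a key drops every plugin it signed
    minimum = min_versions.get(plugin.name, "0")  # assumption: no pin, no floor
    return parse_version(plugin.version) >= parse_version(minimum)
```

Removing a key from `allowed_keys` models revocation; raising an entry in `min_versions` above the deployed version models the kill switch.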

Surface 4: the MDM policy file itself

The policy file is a structured document delivered through the existing MDM channel (Microsoft Intune, Jamf Pro, VMware Workspace ONE, Kandji, Mosyle). The Anthropic MDM reference at 14680753 lists every key the file accepts. The keys cover:

- Gateway URL and auth method.

- The MCP allowlist.

- The org-plugins signing keys, minimum versions, and folder mount path.

- Network egress restrictions: which domains the runtime may reach.

- Clipboard and file-system scope: which directories the runtime may read or write.

- Telemetry destinations: where event logs flow.

- Per-tenant overrides for organisations running multiple business units on shared hardware.

Treat the MDM policy as code. Version-control it. Review it like a firewall rule set. Keep a change log. The MDM policy file is the single artefact a procurement reviewer or compliance auditor will ask to see; it is the document that proves the deployment is configured rather than improvised.
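One way to act on "policy as code" is a small lint step that runs in CI before the policy is pushed to the MDM. The sketch below is illustrative only: the key names (`gateway_url`, `mcp_allowlist`, `plugin_signing_keys`, `telemetry_destination`) are placeholders invented for this example, not the keys in the Anthropic MDM reference, and the rules are examples of the kind of check a team might choose.

```python
from urllib.parse import urlparse

# Hypothetical key names; substitute the ones from the real MDM reference.
REQUIRED_KEYS = {
    "gateway_url",
    "mcp_allowlist",
    "plugin_signing_keys",
    "telemetry_destination",
}


def lint_policy(policy: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means pass."""
    problems = [f"missing key: {key}"
                for key in sorted(REQUIRED_KEYS - set(policy))]
    url = urlparse(policy.get("gateway_url", ""))
    if url.scheme != "https" or not url.netloc:
        problems.append("gateway_url must be an absolute https URL")
    if "*" in policy.get("mcp_allowlist", []):
        problems.append("mcp_allowlist must not contain a bare '*'")
    if not policy.get("plugin_signing_keys"):
        problems.append("plugin_signing_keys must list at least one key")
    return problems
```

Failing the build on a non-empty result gives the change log a reviewable gate, in the same way a firewall rule set is checked before it is applied.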

Mapping Cowork to your cloud

The five inference paths are not interchangeable. Each one optimises for a different starting condition.

| Path | Auth | Audit destination | Best fit | Caveat |
|---|---|---|---|---|
| Amazon Bedrock | IAM roles, STS short-lived credentials | AWS CloudTrail | AWS-first orgs with existing IAM, billing, and CloudTrail tooling | Single-cloud lock-in; no native cross-provider failover |
| Google Vertex AI | GCP service accounts, Workload Identity | Cloud Logging, Cloud Audit Logs | GCP-first orgs; EU data-residency mandates (europe-west1, europe-west4) | Single-cloud lock-in; partner-model latency tail can be wider than direct |
| Azure AI Foundry | Microsoft Entra ID tokens | Microsoft Purview, Sentinel | Microsoft 365 shops; orgs that already pay Azure | Single-cloud lock-in; model availability lags Anthropic and Bedrock |
| Anthropic direct | Anthropic API key | Anthropic console | No-compliance pilots; the lowest-effort starting point | No native enterprise IAM; audit lives off-prem |
| Self-hosted gateway | Federated (any of the above plus internal IdP) | The buyer's SIEM, the buyer's cost system | Multi-cloud, multi-model-family, governance-heavy enterprises | Operational cost: someone has to run the gateway |

The first three rows are vendor-managed. They optimise for orgs that have already standardised on one cloud and want one more capability inside that cloud's perimeter. The fourth is the lowest-effort pilot path. The fifth is where the enterprise decides what "good governance" looks like in its own terms.

The decision criterion is not technical capability. All five paths can serve Cowork inference. The criterion is what the enterprise wants to own: billing and audit single-pane on the cloud the org already runs, or governance single-pane across providers. That choice is downstream of how the org is organised, not of how Cowork is built.

When Bedrock is the right answer

Bedrock is the default for AWS-first organisations because the deployment work is mostly already done. Identity is IAM, which is already integrated with the corporate IdP. Audit flows into CloudTrail, which is already feeding the SIEM. Billing rolls up under the existing AWS account, which procurement has already approved. Network reach is controlled by VPC routing, which the platform team already owns.

The trade-off is provider lock-in. A Bedrock-fronted Cowork deployment cannot transparently fall back to Vertex if Bedrock has an outage in the relevant region. Cross-region failover within Bedrock works; cross-provider failover does not without an additional layer in front.

When Vertex is the right answer

Vertex is the answer when EU data residency is non-negotiable. Vertex regional endpoints in europe-west1 (Belgium) and europe-west4 (Netherlands) keep inference traffic and logs inside EU jurisdiction. The integration with Workload Identity means service accounts can be bound to specific GKE workloads, which is the cleanest IAM pattern for an enterprise running Cowork-adjacent services in GCP. The same lock-in caveat as Bedrock applies.

When Azure Foundry is the right answer

Azure Foundry is the natural answer for organisations whose AI strategy is already a Microsoft strategy. Entra ID for identity. Purview for audit and DLP. Sentinel for SIEM. Conditional Access for risk-aware login. M365 commercial agreements for billing. If the rest of the stack is Microsoft, fighting the Microsoft path costs more than it saves. The model availability caveat (Anthropic ships new models on Anthropic direct first, Bedrock second, Foundry third) matters for orgs that need the newest model the day it ships, and not at all for orgs that adopt models on a quarterly cycle.

When Anthropic direct is the right answer

For a 50-seat pilot with no compliance gating, Anthropic direct is the fastest path. API key, gateway URL, plugins, MCP allowlist. Three days from purchase order to working deployment. The pilot validates the product; the production rollout chooses one of the other four.

When a self-hosted gateway is the right answer

A self-hosted gateway is the right answer when one of these is true:

- The enterprise wants one audit pane across multiple providers, not three separate audit panes.

- The enterprise needs cross-provider failover or wants to route specific workloads to specific providers (regulated workloads on-prem, general workloads on Bedrock).

- The enterprise has governance requirements that no single provider satisfies, for example a custom secret-scanning pattern set, or a tenant-isolation model that does not map cleanly onto IAM, or a cost-attribution scheme that ties usage back to project codes that exist only in the corporate ERP.

- The enterprise's compliance team has decided that AI inference must pass through a controlled gateway before leaving the perimeter, the same way egress traffic does for everything else.

The trade-off is operational cost. A gateway is a service. Someone has to run it, monitor it, scale it, patch it, and answer the pager when it breaks. That cost has to be weighed against the value of the audit, governance, and cross-provider properties the gateway makes possible.

Once those properties matter, no point integration replaces them.
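The routing and failover properties in the list above can be sketched as a route table plus an ordered fallback. This is a simplified illustration rather than a production gateway: the provider callables, the `workload` field, and the route names are hypothetical, and a real gateway would also handle authentication, timeouts, retries, and audit logging.

```python
from typing import Callable

# A provider is modelled as a callable that takes a request and returns a response.
Provider = Callable[[dict], dict]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider on a route is unreachable (fail closed)."""


def route(request: dict, routes: dict[str, list[str]],
          providers: dict[str, Provider]) -> dict:
    """Send the request to the first reachable provider on its workload's route."""
    workload = request.get("workload", "general")
    for name in routes[workload]:
        try:
            return providers[name](request)
        except ConnectionError:
            continue  # try the next provider listed for this workload
    raise AllProvidersFailed(workload)
```

A route table such as `{"regulated": ["on_prem"], "general": ["bedrock", "vertex"]}` keeps regulated workloads on a single path while giving general workloads a cross-provider fallback. Raising when the list is exhausted, rather than silently picking an unlisted provider, is what keeps the behaviour fail-closed.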

A 90-day rollout plan

The shape that survives contact with regulated buyers separates into three thirty-day phases. Each phase has a goal, a success criterion, and a drill.

| Phase | Goal | Success criterion | Drill |
|---|---|---|---|
| Days 1-30, Pilot | Pick the inference path, draft the MDM policy, deploy to 50 friendly users | A single Cowork-issued /v1/messages request appears in the chosen audit destination with a recoverable trace_id and a known user identity | Deploy the policy, then change the gateway URL in the MDM. Confirm the change reaches every laptop within one MDM sync cycle |
| Days 31-60, Expansion | Expand to 500 seats, wire audit logs into the SIEM, add cost dashboards, prove failover | The security analyst (not the platform team) can answer "who used Claude this morning" from the SIEM in under five minutes | Tabletop revocation: at 10:00, revoke a plugin signing key in the MDM. Confirm every laptop drops the plugin by 11:00. Document the timing |
| Days 61-90, Fleet | Roll out to the rest of the fleet, finalise data-residency posture in writing, produce procurement-grade documentation | The compliance team can hand a one-page Cowork deployment summary to an external auditor without preparation | Tabletop offline: simulate the gateway being unreachable. Confirm Cowork fails closed (no inference), surfaces a clear user-facing error, and the fleet recovers within minutes when the gateway returns |

The drill column is the part that matters. A rollout without revocation and offline drills is a rollout that has not been tested. Both drills should be run before procurement is asked to sign off, because procurement will ask, and "we plan to" is not an answer.
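Documenting the timing of the revocation drill is easier with a small script that turns raw timestamps into a pass/fail list. The sketch below assumes the drill operator can export, per laptop, the time the plugin was observed to drop (or nothing if it never did); the hostnames and the export format are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional


def laptops_past_deadline(
    revoked_at: datetime,
    dropped_at: dict[str, Optional[datetime]],
    window: timedelta = timedelta(minutes=60),
) -> list[str]:
    """Return hosts that dropped the plugin late, or never dropped it."""
    deadline = revoked_at + window
    return sorted(
        host
        for host, seen in dropped_at.items()
        if seen is None or seen > deadline
    )
```

An empty result means every laptop dropped the plugin inside the window. Any hostnames returned are the evidence to investigate before asking procurement to sign off.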

Phase 1 in more detail

The first thirty days are about commitment. The path decision (Bedrock, Vertex, Azure, Anthropic, self-hosted) is finalised. The MDM policy is drafted with a minimal MCP allowlist (one or two internal servers, no third-party servers yet) and an org-plugins set with one or two approved skills. The pilot ring is fifty users picked because they will tell you when something is broken: early-adopter engineers, internal champions, the security team itself. Telemetry flows somewhere; even if the SIEM integration is not wired up yet, logs land in a known location with retention policy attached.

By day 30 there is a working deployment, and the question "where do I look to see what Cowork did this morning?" has a one-sentence answer.
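The phase 1 success criterion can be checked mechanically once logs land somewhere. The sketch below assumes the audit destination can export JSON Lines with `trace_id`, `user`, and `path` fields; those field names are placeholders for whatever schema the chosen destination actually uses.

```python
import json
from typing import Iterable, Optional


def find_request(audit_lines: Iterable[str], trace_id: str) -> Optional[dict]:
    """Return the first /v1/messages audit record with the given trace_id."""
    for line in audit_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines in the export
        record = json.loads(line)
        if record.get("trace_id") == trace_id and record.get("path") == "/v1/messages":
            return record
    return None


def meets_pilot_criterion(record: Optional[dict]) -> bool:
    """The request must be recoverable AND tied to a known user identity."""
    return record is not None and bool(record.get("user"))
```

A record that is found but carries no user identity fails the check, which mirrors the criterion: a trace without an identity is not yet an audit trail.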

Phase 2 in more detail

The second thirty days are about turning the deployment into operations. The SIEM gets a Cowork data source. The cost dashboard reads inference billing and joins it to user identity. The MCP allowlist expands by request rather than by guess; every addition has a ticket, an owner, and a sunset date. A second model provider is wired up behind the gateway (if a self-hosted gateway is in play) and failover is tested with a switched route.

The revocation drill happens late in phase 2 because by then the org-plugins set is non-trivial and revoking the wrong one is a learning experience worth having before the fleet rollout.
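The cost dashboard described in this phase rests on one join: billing rows keyed by a cloud principal, matched to a user in the corporate directory. A minimal sketch follows, assuming costs are exported in integer cents and that the principal-to-user mapping is available as a dictionary; the field names and the `unattributed` bucket are illustrative choices, not part of any provider's billing schema.

```python
from collections import defaultdict


def cost_per_user(billing_rows: list[dict],
                  identity: dict[str, str]) -> dict[str, int]:
    """Sum cost in cents per user; unknown principals go to 'unattributed'."""
    totals: dict[str, int] = defaultdict(int)
    for row in billing_rows:
        user = identity.get(row["principal"], "unattributed")
        totals[user] += row["cost_cents"]
    return dict(totals)
```

Keeping an explicit `unattributed` bucket makes gaps in the identity mapping visible on the dashboard instead of silently dropping spend.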

Phase 3 in more detail

The third thirty days are about defensibility. The deployment exists; the question is whether someone can explain it to an external auditor in ten minutes. The data-residency posture is written down: which provider, which region, what the contract says, what the technical configuration enforces, what audit evidence proves the configuration is enforced. The procurement-grade documentation answers the standard set: what is logged, who can read it, how revocation works, what happens when the gateway is offline, who pays for usage, how a leaving employee is removed. Each answer cites a specific control surface and a specific piece of evidence in the SIEM or the MDM repo.

By day 90 the deployment is not just running. It is ready to be reviewed.

What "ready to be reviewed" looks like in practice

The shape of a defensible Cowork deployment is small enough to fit in a one-page summary that procurement, audit, and security can all sign off on the same week. The summary names the inference path with its provider and region, the auth method and the IdP that backs it, the audit destination and the retention policy on it, the MCP allowlist and the change-control process for adding to it, the org-plugins set and the signing keys behind each plugin, and the data-residency posture in plain language.

A reviewer wants four things from that page. They want to know what is enforced (the technical configuration), what is logged (the audit destination and shape), how the configuration is changed (the change-control loop), and what happens when something goes wrong (the failure modes and the on-call response). A deployment that cannot produce those four answers in writing is a deployment that has not been finished, regardless of how many seats are running.

Cowork against the alternatives

The Cowork decision rarely happens in isolation. Most organisations are simultaneously evaluating ChatGPT Enterprise, Microsoft Copilot, and one or two niche desktop agents. The category differences matter more than the feature lists.

ChatGPT Enterprise is a hosted SaaS. The inference, the conversation history, the integrations, and the audit live on OpenAI's perimeter. The pricing model is per-seat. The customisation surface is prompts, retrieval connectors, and a closed extension catalogue. The buyer is paying for a managed product. Procurement reviews are simpler, deployment time is shorter, and the depth of customer control is shallower.

Microsoft Copilot is tightly integrated with Microsoft 365. Identity is Entra. Audit is Purview and Sentinel. Data flows inside the Microsoft data boundary by default. The customisation surface is Copilot Studio. For a Microsoft-first organisation the integration story is excellent; for an organisation that wants vendor-neutrality across cloud providers, Copilot is the wrong shape.

Niche desktop agents (the long tail of "Claude/GPT-on-the-desktop" wrappers) typically run on consumer-grade APIs without an MDM control surface. They are useful for individuals and small teams. They do not have the org-plugins, MCP allowlist, and MDM policy story that an enterprise rollout requires.

Claude Cowork sits in a different category from all three. It is a desktop runtime with explicit enterprise control surfaces, where the enterprise owns the inference boundary. The closest comparison is "running your own AI agent platform with Anthropic as the model provider" rather than "consuming a SaaS chat product". Buyers who are comfortable consuming SaaS will find Cowork's deployment surface unfamiliar. Buyers who already operate complex desktop fleets through MDM and complex API estates through gateways will find Cowork's surface natural. It slots into the categories they already manage.

The trade-off is direct. SaaS chat products require less configuration and offer less control. Cowork requires more configuration and offers more control. The right answer is whichever side of that trade-off the organisation has the operational maturity to absorb.

Where systemprompt.io fits

systemprompt.io is a self-hosted /v1/messages gateway designed for the Cowork third-party deployment surface. It runs in the customer's VPC, supports Bedrock, Vertex, Azure Foundry, and Anthropic direct as upstream providers, and adds:

- A four-stage governance pipeline on every Cowork inference call and every MCP tool call: scope checks, secret scanning across more than thirty patterns, blocklists, and rate limits. This is the same pipeline that runs for any other AI traffic the enterprise routes through it.

- An audit spine that links every request to the user identity, the model, the token counts, the cost, and the MCP tool-call trace under a single trace_id. Audit rows survive provider switches; the trace_id is stable across Bedrock, Vertex, Azure, and Anthropic direct.

- Multi-tenant isolation so multiple business units share infrastructure without sharing data, with per-tenant policy overrides that take precedence over the corresponding sections of the MDM policy at the gateway boundary.

- A cost-attribution layer that maps usage back to user, team, and project codes from the corporate ERP, not just the cloud provider's tagging schema.
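As a rough picture of what a four-stage pipeline of this kind does, the sketch below runs scope, secret, blocklist, and rate-limit checks in order and stops at the first denial. It is an illustration written for this guide, not systemprompt.io's implementation: the role-to-scope table, the two secret patterns, the blocklist term, and the limit of three calls are all made-up examples.

```python
import re
from collections import Counter

# Illustrative configuration only; every value here is an assumption.
ALLOWED_SCOPES = {"analyst": {"general"}, "counsel": {"general", "regulated"}}
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                      # AWS access key ID shape
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),    # PEM private key header
]
BLOCKLIST = {"project-nightingale"}  # hypothetical restricted term
RATE_LIMIT = 3                       # calls per user per counting window


def check(user: str, role: str, scope: str, text: str, calls: Counter) -> str:
    """Run the four stages in order; return 'allow' or the first denial."""
    if scope not in ALLOWED_SCOPES.get(role, set()):
        return "deny:scope"
    if any(pattern.search(text) for pattern in SECRET_PATTERNS):
        return "deny:secret"
    lowered = text.lower()
    if any(term in lowered for term in BLOCKLIST):
        return "deny:blocklist"
    calls[user] += 1  # only requests that pass stages 1-3 consume quota
    if calls[user] > RATE_LIMIT:
        return "deny:rate"
    return "allow"
```

The caller owns the `calls` counter and resets it per window, which keeps the function free of hidden state. The same function can be applied to an inference prompt or to the arguments of an MCP tool call.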

The decision criterion is the multi-provider, multi-tenant, multi-policy case described above. If the enterprise has already standardised on one cloud and wants one more capability inside it, a direct provider integration is the simpler answer. If procurement asks for one audit pane across providers, or if compliance asks for fleet-wide revocation of an extension in under an hour, the self-hosted gateway path is the one that survives the question.

The deep walkthrough (fourteen-question RFP bank, SOC 2 / ISO 27001 / HIPAA / EU AI Act mapping, ninety-day rollout with drill scripts, audit-table SQL, signed-manifest workflow) lives at /guides/claude-cowork-plugins-enterprise. This page is the orientation; that page is the playbook. Governance posture references like the EU AI Act and the NIST AI Risk Management Framework anchor the compliance mappings in the deep guide.

Common questions buyers raise in week one

A short selection of the questions that come up in the first week of every evaluation, with the short answer.

"Do we have to migrate everything at once?" No. Phase 1 is a pilot ring. The fleet rollout in phase 3 happens after the deployment has been measured in production for sixty days.

"What happens if the gateway goes down?" Cowork fails closed for inference; the desktop runtime stays up but cannot complete a model call until the gateway returns. The drill in phase 3 verifies this. A self-hosted gateway should run as an HA service with at least two replicas and explicit recovery objectives.

"Can we change provider mid-rollout?" Yes, and this is one of the reasons a gateway exists. Direct provider integrations make the change harder. The MDM policy file with a single gateway URL plus the gateway's route table makes the change a config edit.

"How do we know the MCP allowlist is enforced?" The drill in phase 2. Try to load an MCP server that is not in the allowlist; confirm the runtime refuses, the user sees a clear error, and the attempt is logged.

"Where does the cost show up?" Every gateway path produces a billing record under that gateway's billing system. A self-hosted gateway can normalise these into one cost record per user per project; a single-provider integration produces one billing record under the chosen cloud. The cost dashboard in phase 2 builds on whichever shape applies.

Conclusion

If you are deploying Claude Cowork at any meaningful scale, the gateway is the decision; the MCP allowlist, org-plugins folder, and MDM policy are the consequences. Pick the path that fits the org's existing operational shape, then plan a defensible 90-day rollout that ends with a deployment a compliance auditor can review on demand.

Read /guides/claude-cowork-plugins-enterprise when the orientation is clear and the work moves from "which path" to "how exactly". Read /guides/claude-code-mcp-servers-extensions for the MCP protocol reference that informs the allowlist work. Read /guides/self-hosted-ai-governance for the broader governance posture that frames why the gateway choice matters in the first place.