Prelude

We have rolled out Claude Code across three organisations now. The first time, every mistake in this guide was made firsthand. Fifty developers were given access on the same day, with no training and no guardrails, and the result was predictable. Half of them abandoned it within a week, while the other half used it in ways that made the security team very nervous.

The second time was better. A small team was picked, managed settings were configured, audit logging was set up, and expansion happened slowly. Adoption stuck. Developers actually changed how they worked instead of just trying it for an afternoon and going back to their old habits.

The third time, there was a proper playbook. It took four weeks from pilot to organisation-wide deployment, and six months later, usage is still climbing. That playbook is what this guide shares, covering every step of an enterprise Claude Code rollout.

Rolling out any development tool across an organisation is difficult. Rolling out an AI-powered tool that can execute shell commands, edit files, and interact with your codebase is a different challenge entirely. You need technical controls, yes. But you also need cultural buy-in, training plans, feedback loops, and clear success metrics. Skip any of those and you end up with either a ghost deployment that nobody uses or a shadow deployment that nobody controls.

The Problem

This playbook covers the full Claude Code organisation rollout: from pilot team selection through managed settings configuration, organisation settings in the Claude console, MDM deployment, audit hooks, cost controls, and the phased expansion to 50 or more developers. Every section maps to a real decision you will face, in the order you will face it.

The temptation with Claude Code is to treat it like any other developer tool. Send an email, share a download link, maybe write a wiki page with some tips. Let developers figure it out on their own. After all, they are smart people. They can read documentation.

This approach fails for several reasons. First, Claude Code is not a passive tool like a text editor or a git client. It actively makes decisions, executes commands, and modifies your codebase. Without proper configuration, every developer gets a blank canvas with maximum permissions.

Some will use that responsibly. Others will accidentally run destructive commands, commit generated code without review, or burn through API credits on experiments that go nowhere.

Second, Claude Code's value compounds with shared configuration. When every developer on a team uses the same CLAUDE.md file, the same hook configurations, and the same permission policies, the AI behaves consistently across the entire codebase. When everyone rolls their own setup, you get inconsistent behaviour, duplicated effort, and no way to enforce standards.

Third, the cost model requires attention at scale. A single developer on a Max plan at $200 per month is manageable. Fifty developers without spending awareness can generate costs that trigger uncomfortable conversations with finance. Without model selection policies and usage guidelines, some developers will default to the most expensive model for every task, including ones where a lighter model would work perfectly well.

The solution is not to restrict Claude Code into uselessness. It is to deploy it thoughtfully, with the right controls in the right places, and enough flexibility for developers to actually benefit from it.

The Rollout Playbook

Why You Need a Rollout Plan

The argument against planning comes up frequently. "Just give everyone access and let them experiment." This sounds reasonable until you consider what "experimenting" means with a tool that has shell access to your development environment.

A rollout plan does three things. It limits blast radius while you learn. It creates a feedback loop so you can improve the configuration before scaling. And it builds internal champions who can help onboard the next wave of developers.

Without a plan, you get what we call the "day two problem." Day one is exciting. Everyone installs Claude Code, tries a few prompts, and is impressed. Day two, the novelty wears off. The developers who had a bad first experience (a confusing error, a destructive command, a slow response) quietly uninstall it.

The developers who had a good experience keep using it, but without guidance, they develop habits that do not scale. By day thirty, you have fragmented adoption with no consistency and no way to measure whether the investment is paying off.

A phased rollout avoids this entirely. You start small, learn fast, fix problems early, and expand with confidence.

Picking the Right Pilot Team

Your pilot team is the most important decision in the entire rollout. Pick the wrong team and you will either get false positives (a team so enthusiastic they would adopt anything) or false negatives (a team so resistant they would reject anything).

We recommend a team of three to five developers with a mix of experience levels. You want at least one senior developer who understands the codebase deeply and can evaluate whether Claude Code's suggestions are good. You want at least one mid-level developer who represents your typical team member. And if possible, you want one junior developer, because juniors often get the most dramatic productivity gains from AI assistance and will surface usability issues that seniors work around without noticing.

The pilot team should be working on a real project, not a toy experiment. Claude Code's value shows up in real-world complexity, not in isolated exercises. Ideally, the project involves the typical mix of feature development, bug fixes, code reviews, and documentation that your organisation handles every day.

Give the pilot team a clear mandate. They are not just using Claude Code. They are evaluating it. Ask them to keep notes on what works, what frustrates them, what they wish was different, and what they would tell the next team. This feedback is the raw material for your configuration decisions.

A pilot typically runs for two to three weeks. That is long enough for developers to get past the novelty phase and into real daily usage, but short enough to maintain momentum for the broader rollout.

Setting Up Managed Settings for Central Control

This is where the technical rollout begins. Managed settings give you centralised control over Claude Code's behaviour across every developer machine in your organisation. Instead of hoping each developer configures things correctly, you push a configuration file that sets sensible defaults and enforces critical policies.

The managed settings file is called managed-settings.json. On macOS, you deploy it via MDM (Mobile Device Management) to /Library/Application Support/Claude/managed-settings.json. On Linux, it goes to /etc/claude/managed-settings.json. On Windows, you use Group Policy to place it at %ProgramData%\Claude\managed-settings.json. The full settings hierarchy is documented in Anthropic's managed settings reference.

Here is a starting point for your managed settings.

{
  "permissions": {
    "defaultPermissionMode": "acceptEdits",
    "allowedTools": [
      "Read",
      "Edit",
      "Write",
      "Glob",
      "Grep",
      "Bash"
    ],
    "blockedTools": [
      "WebFetch"
    ]
  },
  "settings": {
    "model": "claude-sonnet-4-20250514"
  }
}

The defaultPermissionMode is your most important setting. There are three options:

Permission Mode Comparison

| Mode | File Reads | File Writes | Command Execution | Recommended for |
|---|---|---|---|---|
| ask | Prompt | Prompt | Prompt | High-security repos, production services |
| acceptEdits | Auto | Prompt | Prompt | Most organisations on initial rollout |
| bypassPermissions | Auto | Auto | Auto | Internal tools repos, experienced teams only |

Data source: Anthropic Claude Code Settings documentation, as of 2026-04. Permalink: systemprompt.io/guides/claude-code-organisation-rollout#permission-mode-comparison.

"ask" requires manual approval for every tool call. Safe but slow, and developers will find it frustrating for daily use. "acceptEdits" auto-approves file reads and searches but prompts for file writes and command execution. This is the sweet spot for most organisations.

"bypassPermissions" auto-approves everything. This is not recommended for an initial rollout, even with experienced teams.

The settings hierarchy matters. Managed settings always take precedence over project settings, which in turn take precedence over user settings. This means developers can still personalise their experience within the boundaries you set. They cannot override your blocked tools or raise their permission level above what you have allowed. The full settings hierarchy and advanced configuration patterns are covered in our guide on enterprise Claude Code with managed settings.

Start with a conservative configuration for the pilot. You can loosen restrictions as you gain confidence. It is much easier to grant more access than to take it away.

Deploying Claude Code via MDM on macOS, Linux, and Windows

Hand-copying managed-settings.json to fifty laptops is not a rollout. It is a support ticket waiting to happen. Use your existing device management channel so the file lands automatically on every developer machine and updates itself when you change it.

macOS with Jamf, Kandji, or Intune. Package the file into a configuration profile that writes to /Library/Application Support/Claude/managed-settings.json. Jamf's "Files and Processes" payload handles this directly. Kandji's "Custom Script" profile can run a short shell script that checks the file hash and replaces it if it drifts. Intune uses a "Shell script" policy with the same approach. Target the profile to the developer scope, not the whole fleet, so finance laptops do not inherit developer permissions.

Linux with Ansible, Chef, or Puppet. Treat the file as a managed resource with a SHA checksum. An Ansible copy task pointed at /etc/claude/managed-settings.json with mode: 0644 and owner: root is three lines. Puppet and Chef users already have a file resource pattern. The important part is that developers cannot write to /etc/claude/ with their regular user, so the file stays authoritative.

Windows with Group Policy or Intune. Use a Group Policy Preference file action to drop the file in %ProgramData%\Claude\managed-settings.json. Intune works the same way through a "Win32 app" package or a PowerShell script deployment. Scope the policy to your developer security group.
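The "check the file hash and replace it if it drifts" step is the same on every platform, so it is worth seeing once in isolation. The following is a minimal sketch, not a vendor-supplied script: the function names and the idea of shipping a reference copy alongside the script are our assumptions, and your MDM tool may already provide an equivalent.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def sync_managed_settings(source: Path, target: Path) -> bool:
    """Copy source over target when the target is missing or has drifted.

    Returns True when the target was (re)written and False when it already
    matched the reference copy. Both paths are supplied by the caller; this
    sketch does not hard-code any platform location.
    """
    if target.exists() and sha256_of(target) == sha256_of(source):
        return False
    target.parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(source, target)
    # Readable by everyone, writable only by the owning (privileged) account.
    target.chmod(0o644)
    return True
```

Run it from the management agent with the reference copy as `source` and the platform path from the paragraphs above as `target`. A return value of True is worth logging, because it means a machine had drifted.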

Version the managed-settings.json file in git. Every change goes through pull request review like any other infrastructure change. This matters because a typo in the permissions block can either break every developer's Claude Code session or silently grant more access than you intended.
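Pull request review catches more when a machine has already flagged the obvious mistakes. Here is a rough sketch of a lint check you could run in CI against the file. It assumes the key names used in the starting-point configuration in this guide (defaultPermissionMode, allowedTools, blockedTools), and the function name is hypothetical.

```python
import json

# Mode names are taken from the permission mode table in this guide.
KNOWN_MODES = {"ask", "acceptEdits", "bypassPermissions"}


def lint_managed_settings(text: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means the file passed."""
    try:
        settings = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]

    permissions = settings.get("permissions") if isinstance(settings, dict) else None
    if not isinstance(permissions, dict):
        return ["missing 'permissions' object"]

    problems = []
    mode = permissions.get("defaultPermissionMode")
    if mode not in KNOWN_MODES:
        # Catches typos such as "acceptEdit" before they reach developer machines.
        problems.append(f"unknown defaultPermissionMode: {mode!r}")
    elif mode == "bypassPermissions":
        problems.append("bypassPermissions must not be an organisation-wide default")

    allowed = set(permissions.get("allowedTools", []))
    blocked = set(permissions.get("blockedTools", []))
    for tool in sorted(allowed & blocked):
        problems.append(f"{tool} is both allowed and blocked (it will be blocked)")
    return problems
```

Fail the CI job when the returned list is not empty. The bypassPermissions rule is a policy choice rather than a syntax rule, so drop it if your organisation has decided otherwise.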

Sending Claude Code Telemetry to Your Observability Stack

If you want hard numbers on adoption without writing your own audit service, Claude Code emits OpenTelemetry data. Claude Code's OpenTelemetry configuration is a managed setting, not a CLI flag, which means you roll it out the same way you roll out permissions.

Set the OTEL_EXPORTER_OTLP_ENDPOINT environment variable (per the OpenTelemetry specification) to your collector in the managed settings env block and Claude Code starts pushing spans for every session, tool call, and model request. Pipe the collector into Datadog, Honeycomb, Grafana Cloud, or a self-hosted Tempo and Prometheus stack. Session duration, tool-call counts, token counts, model selection, and error rates all show up as standard OTEL attributes, so you can build dashboards without parsing custom log formats.

For organisations that already have a SIEM, run the OTEL collector as a tee so the same spans flow to both your observability stack and your compliance store. This keeps the security team and the platform team looking at the same source of truth instead of arguing about which dashboard is right.
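Because the endpoint lives in the managed settings file, adding it is a small, reviewable change to a JSON document. The sketch below shows one way to script that change. It assumes the env block is a top-level "env" object in the settings file, and the collector URL in the usage note is a placeholder, not a real address.

```python
import copy


def with_otel_endpoint(settings: dict, endpoint: str) -> dict:
    """Return a copy of the settings with the OTLP endpoint set in the env block.

    The input dict is left untouched so the caller can diff old against new.
    """
    if not endpoint.startswith(("http://", "https://")):
        raise ValueError(f"endpoint must be an http(s) URL, got {endpoint!r}")
    merged = copy.deepcopy(settings)
    # Assumption: environment variables sit under a top-level "env" key.
    merged.setdefault("env", {})["OTEL_EXPORTER_OTLP_ENDPOINT"] = endpoint
    return merged
```

For example, `with_otel_endpoint(current, "https://otel-collector.example.internal:4318")` returns a new dict that you can serialise with `json.dumps(..., indent=2)` and commit through the usual pull request.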

Configuring the Permissions Model

The permissions model in Claude Code is more nuanced than a simple allow/deny list. Understanding the layers will save you from both over-restricting your developers and leaving security gaps.

The allowedTools list defines which tool categories Claude Code can use. The core tools are Read, Edit, Write, Glob, Grep, and Bash. Beyond these, there are MCP (Model Context Protocol) server tools that extend Claude Code's capabilities. For your initial rollout, we recommend allowing only the core tools and adding MCP tools selectively as teams request them.

The blockedTools list is your deny list. It takes precedence over allowedTools. If a tool appears on both lists, it is blocked. Use this for tools that you know are inappropriate for your environment. For example, if your organisation prohibits external network requests from development machines, block WebFetch.
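The precedence rule is simple enough to state as code, which is a useful reference when someone asks why a tool they added to the allow list still does not work. This is an illustration of the rule as described above, not Claude Code's own implementation, and the function name is ours.

```python
def is_tool_permitted(tool: str, allowed: list[str], blocked: list[str]) -> bool:
    """Apply the deny-wins rule: a blocked tool is never permitted."""
    if tool in blocked:
        return False
    return tool in allowed


# WebFetch appears on both lists, so the block wins.
allowed = ["Read", "Edit", "Bash", "WebFetch"]
blocked = ["WebFetch"]
print(is_tool_permitted("Bash", allowed, blocked))      # True
print(is_tool_permitted("WebFetch", allowed, blocked))  # False
```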

Beyond the tool-level controls, you can use matcher patterns in hooks to create more granular policies. For example, you might allow the Bash tool in general but hook into PreToolUse to block specific commands like curl or wget that could exfiltrate data. This gives you the flexibility of Bash access with specific guardrails around the commands you are concerned about.

Permission modes can also be set per-project using .claude/settings.json in the project root. This lets different projects have different risk profiles. Your internal tools repository might allow bypassPermissions while your production services repository requires ask mode for every command execution.

Setting Up Hooks for Audit Trails

If managed settings are your guardrails, hooks are your visibility layer. In a multi-developer deployment, you need to know what Claude Code is doing across your organisation. Not to spy on developers, but to detect patterns, catch issues early, and demonstrate compliance.

The hooks system (documented in Anthropic's Claude Code hooks reference) supports several event types. For audit purposes, the ones you care about most are PreToolUse, PostToolUse, and PostToolUseFailure.

Here is a PostToolUse hook that logs every tool execution to a central HTTP endpoint.

{
  "hooks": {
    "PostToolUse": [
      {
        "type": "http",
        "url": "https://audit.internal.yourcompany.com/claude-code/events",
        "timeout_ms": 5000,
        "matcher": {
          "tool_name": "*"
        }
      }
    ]
  }
}

This hook fires after every successful tool call and sends the full context, including the tool name, input, output, and session metadata, to your audit endpoint. The timeout_ms setting ensures that a slow or unavailable audit service does not block the developer's workflow. If the endpoint does not respond within five seconds, the hook fails silently and Claude Code continues.
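If you do not yet have an audit service to point the hook at, a very small receiver is enough for a pilot. The sketch below uses only the Python standard library. It assumes the hook delivers its context as a JSON body in an HTTP POST, and the log file name and port are placeholders. It stores whatever arrives without assuming specific field names.

```python
import json
from datetime import datetime, timezone
from http.server import BaseHTTPRequestHandler, HTTPServer

AUDIT_LOG = "claude-code-audit.jsonl"  # hypothetical path; one JSON object per line


def to_log_line(body: bytes, received_at: str) -> str:
    """Wrap a raw request body in one JSON line, keeping unparseable bodies as text."""
    try:
        event = json.loads(body)
    except ValueError:  # covers both invalid JSON and invalid UTF-8
        event = {"unparsed": body.decode("utf-8", errors="replace")}
    return json.dumps({"received_at": received_at, "event": event}, sort_keys=True)


class AuditHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = self.rfile.read(length)
        now = datetime.now(timezone.utc).isoformat()
        with open(AUDIT_LOG, "a", encoding="utf-8") as log:
            log.write(to_log_line(body, now) + "\n")
        # Reply quickly with no body so the hook never waits on us.
        self.send_response(204)
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Run the receiver until interrupted. Put TLS and authentication in front of it."""
    HTTPServer(("0.0.0.0", port), AuditHandler).serve_forever()
```

Call `serve()` behind your usual reverse proxy. An append-only JSON Lines file is deliberately boring: it is easy to ship to a SIEM later and easy to inspect by hand during the pilot.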

For compliance-sensitive environments, you might also want a PreToolUse hook that blocks certain operations entirely.

{
  "hooks": {
    "PreToolUse": [
      {
        "type": "command",
        "command": "/usr/local/bin/claude-policy-check",
        "timeout_ms": 3000,
        "matcher": {
          "tool_name": "Bash"
        }
      }
    ]
  }
}

The claude-policy-check script receives the proposed command as JSON on stdin, evaluates it against your organisation's policy, and returns a JSON response with a permissionDecision field. If the script returns "deny", the command is blocked before execution. This is where you enforce rules like "no database migrations from Claude Code" or "no production SSH from development machines."
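To make the shape of such a script concrete, here is a minimal sketch of what claude-policy-check could look like. Several details are assumptions on our part: that the proposed command arrives under tool_input.command in the stdin JSON, that a flat object with permissionDecision and a reason is an acceptable response, and the deny patterns themselves, which are examples only. Check the hooks reference for the exact input and output schema before relying on it.

```python
import json
import re
import sys

# Hypothetical rules for illustration; replace with your organisation's policy.
DENY_PATTERNS = [
    (re.compile(r"\b(curl|wget)\b"), "network download tools are blocked"),
    (re.compile(r"\bssh\b.*\bprod"), "no production SSH from development machines"),
    (re.compile(r"\bmigrate\b"), "no database migrations from Claude Code"),
]


def decide(payload: dict) -> dict:
    """Return a decision for one proposed Bash command."""
    tool_input = payload.get("tool_input")
    command = str(tool_input.get("command", "")) if isinstance(tool_input, dict) else ""
    for pattern, reason in DENY_PATTERNS:
        if pattern.search(command):
            return {"permissionDecision": "deny", "reason": reason}
    return {"permissionDecision": "allow", "reason": "no policy rule matched"}


def main() -> None:
    """Read the hook payload from stdin and write the decision to stdout."""
    try:
        payload = json.load(sys.stdin)
    except json.JSONDecodeError:
        payload = None
    if isinstance(payload, dict):
        result = decide(payload)
    else:
        # Fail closed: an unreadable payload is treated as a denial.
        result = {"permissionDecision": "deny", "reason": "unreadable hook payload"}
    json.dump(result, sys.stdout)
```

The installed script would call `main()` as its last line. Keeping `decide` separate from the stdin handling means the policy rules can be unit tested without launching Claude Code.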

Deploy these hooks via managed settings so they apply to every developer automatically. Hooks defined in managed settings cannot be overridden by user or project settings.

Phased Rollout from Pilot to Organisation-Wide

With your pilot complete and your configuration refined, it is time to expand. The full timeline from pilot to organisation-wide availability typically runs four to six weeks:

Rollout Phase Reference

| Stage | Duration | Team size | Target KPI | Gate criteria to advance |
|---|---|---|---|---|
| Pilot | Weeks 1-2 | 3-5 devs | 80% of pilot users hit 3+ sessions per week | Managed settings deployed, audit hook delivering 100% of tool calls, pilot retro signed off |
| Expand (Department) | Weeks 3-4 | 10-20 devs | Weekly active >= 70% of provisioned seats | CLAUDE.md template in place, <5% permission-denial rate, cost per active dev within forecast |
| Expand (Cross-dept) | Weeks 5-6 | 30-60 devs | Weekly active >= 60%, satisfaction >= 7/10 | Department-specific CLAUDE.md merged, cost dashboard live, policy-check hook producing zero false positives |
| Embed (Org-wide) | Week 7+ | 50-200+ devs | Weekly active >= 55% sustained for 4 weeks | Self-service onboarding runbook, MDM auto-provision, named owner with named backup |

Data source: phased adoption targets derived from Anthropic Claude Code enterprise documentation and the playbook methodology captured in this guide, as of 2026-04. Permalink: systemprompt.io/guides/claude-code-organisation-rollout#rollout-phase-reference.

We recommend three phases after the pilot.

Phase 1, department rollout. Expand from your pilot team to the rest of their department. This typically means ten to twenty developers. The pilot team members become your internal champions. Pair each new developer with a pilot team member for their first week. This peer support is more effective than any documentation you could write.

During this phase, monitor your audit logs closely. Look for patterns that suggest confusion (repeated permission denials, unusual tool usage, high error rates). These patterns tell you where your configuration or training needs adjustment.

Phase 2, cross-department expansion. Bring on two or three additional departments simultaneously. By now, you should have refined your managed settings, updated your CLAUDE.md templates, and built a small library of useful hooks. Each new department gets the same onboarding package but with department-specific CLAUDE.md files that reflect their tech stack and conventions.

This is also when cost management becomes critical. More on that in a later section.

Phase 3, organisation-wide availability. Make Claude Code available to all developers. By this point, you should have a self-service onboarding process. New developers should be able to install Claude Code, receive managed settings automatically, and find team-specific CLAUDE.md files in their repositories. The system should work without manual intervention from your platform team.

Each phase should last one to two weeks. Rushing phases leads to the same problems as no plan at all. The value of phasing is not just controlling risk. It is building the organisational knowledge and support structures that make adoption sticky. Once your team is comfortable with local Claude Code usage, integrating Claude Code GitHub Actions for automated PR review and CI into your repositories extends the value from individual productivity to team-wide code quality.
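The weekly-active targets in the phase table are easy to check mechanically, which keeps the decision to advance from turning into a debate. A small sketch follows, with the thresholds copied from the table. The stage keys and function name are our own labels.

```python
# Weekly-active targets, in percent of provisioned seats, copied from the phase table.
WEEKLY_ACTIVE_TARGET_PERCENT = {
    "department": 70,
    "cross_department": 60,
    "org_wide": 55,
}


def meets_weekly_active_target(stage: str, active: int, provisioned: int) -> bool:
    """Return True when weekly active developers meet the stage's target share."""
    if provisioned <= 0:
        return False
    target = WEEKLY_ACTIVE_TARGET_PERCENT[stage]
    # Integer arithmetic avoids floating-point surprises right at the threshold.
    return active * 100 >= target * provisioned


print(meets_weekly_active_target("department", 14, 20))  # True: exactly 70%
print(meets_weekly_active_target("department", 13, 20))  # False: 65%
```

This covers only the usage KPI. The other gate criteria in the table, such as the retro sign-off or a named owner, still need a human to confirm them.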

Creating CLAUDE.md Standards for Consistency

When every team writes their own CLAUDE.md from scratch, you get inconsistency. One team writes a twenty-line file with just build commands. Another writes a five-hundred-line file that tries to encode their entire architecture. Neither approach works well at scale.

Create a CLAUDE.md template for your organisation. At minimum, it should include sections for build and test commands, coding standards, project structure overview, and any organisation-specific rules. Here is a template that has worked well in practice.

# Project Name

## Build and Test

- Build: `npm run build`
- Test: `npm test`
- Lint: `npm run lint`

## Coding Standards

- TypeScript strict mode. No `any` types.
- All functions must have JSDoc comments.
- Use named exports, not default exports.

## Project Structure

- `src/` - Application source code
- `tests/` - Test files, mirroring src/ structure
- `docs/` - Documentation

## Organisation Rules

- Never commit directly to main. Always use feature branches.
- All API keys come from environment variables. Never hardcode secrets.
- Use British English in comments and documentation.

Store this template in a shared location, perhaps a developer-experience repository or an internal wiki. Encourage teams to extend it with project-specific details but not to remove the organisation-level sections.
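"Do not remove the organisation-level sections" is easier to uphold with a check than with a reminder. Here is a rough sketch of a check that could run in CI on each repository. It assumes the four section headings from the template above are the required ones, and the function name is hypothetical.

```python
import re

# Section headings taken from the organisation template.
REQUIRED_SECTIONS = [
    "Build and Test",
    "Coding Standards",
    "Project Structure",
    "Organisation Rules",
]


def missing_sections(markdown: str) -> list[str]:
    """Return the required second-level headings that the file does not contain."""
    headings = {
        match.group(1).strip()
        for match in re.finditer(r"^##\s+(.+)$", markdown, re.MULTILINE)
    }
    return [section for section in REQUIRED_SECTIONS if section not in headings]
```

Read the repository's CLAUDE.md, pass its text to `missing_sections`, and fail the job if the result is not empty. Teams remain free to add any extra sections they like.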

For advanced CLAUDE.md strategies across complex repositories, including how to structure them for monorepos and large codebases, see our guide on CLAUDE.md for monorepos.

Measuring Adoption and Success

You cannot improve what you do not measure. For a Claude Code rollout, there are four categories of metrics worth tracking.

Adoption metrics. How many developers have Claude Code installed? How many used it in the last seven days? What is the trend over time? A healthy rollout shows steadily increasing weekly active users with low churn after the first two weeks.

Usage metrics. How many sessions per developer per day? What is the average session length? Which tools are used most frequently? These tell you whether developers are integrating Claude Code into their daily work or just opening it occasionally.

Quality metrics. What is the error rate for tool calls? How often do developers deny permission requests? What is the ratio of accepted to rejected suggestions? High denial rates suggest that your permissions model is too permissive or that developers do not trust the AI's judgement.

Satisfaction metrics. Run a short survey (five questions maximum) at the two-week mark and then monthly. Ask about productivity impact, frustration points, and features they wish existed. Qualitative feedback catches issues that metrics miss.

If you set up the audit hooks described earlier, you already have the raw data for the first three categories. Build a simple dashboard that your platform team can review weekly. Share the high-level numbers with leadership monthly. Concrete metrics make the case for continued investment better than any anecdote.

A dashboard worth keeping has five panels and fits on one screen. The first is weekly active developers, measured as unique user IDs that produced at least one PostToolUse event in the last seven days. The second is session count per active developer, so you can distinguish daily users from occasional users. The third is tool-call distribution as a stacked bar by tool name, which shows whether developers are actually using Edit and Write or mostly reading. The fourth is error rate as a time series broken out by error class, so a spike in permission denials looks different from a spike in API rate-limit errors. The fifth is spend per active developer over time, pulled from the Anthropic billing export and divided by active seats rather than total seats, because cost per active developer is the figure you report to finance.
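The two numbers that matter most, weekly active developers and spend per active developer, take only a few lines to compute from audit events. The sketch below assumes you can reduce each PostToolUse event to a (user ID, timestamp) pair. That tuple shape and the function names are our assumptions, not an export format.

```python
from datetime import datetime, timedelta


def weekly_active_developers(events, now: datetime) -> set:
    """Return the user IDs with at least one event in the seven days up to now.

    events is an iterable of (user_id, timestamp) pairs, one per PostToolUse event.
    """
    cutoff = now - timedelta(days=7)
    return {user for user, timestamp in events if cutoff <= timestamp <= now}


def spend_per_active_developer(total_spend: float, active_count: int) -> float:
    """Divide spend by active developers, not provisioned seats."""
    if active_count == 0:
        return 0.0
    return total_spend / active_count
```

Dividing by active developers makes the figure rise when seats sit idle, which is exactly the signal you want to surface early.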

Treat the dashboard as a product. Name an owner. Review it at the same time every week. If a metric moves and no one can explain why, that is the week's investigation, not a meeting to schedule for next month. The platform teams that lose control of a rollout almost always lose it quietly: a metric drifts for three weeks, nobody notices, and by the time someone asks, the original cause has moved on. A fifteen-minute standing weekly review catches drift before it becomes a story.

Share a simpler version with leadership. Two numbers is enough for a monthly engineering update: weekly active developers and the current cost per active developer. If both are heading the right way, nobody asks follow-up questions. If either one is not, you are the one holding the data, which is a much better position than hearing about it in a budget review.

Common Pitfalls and How to Avoid Them

The same mistakes appear across multiple rollouts. Here are the ones that cause the most damage.

Over-restricting permissions. This is the most common mistake. Platform teams, often under pressure from security, lock Claude Code down so tightly that it cannot do anything useful. If developers have to manually approve every file read, they will abandon the tool within a day. The acceptEdits permission mode exists for a reason. Use it.

Not providing training. Claude Code is not intuitive for everyone. Some developers will figure it out quickly. Others need to see examples of effective prompts, understand the permission model, and learn the keyboard shortcuts. Budget at least one hour for a hands-on onboarding session with each new cohort. Record it so future cohorts can watch asynchronously. Structure the session around three live demonstrations: a debugging workflow where Claude traces through unfamiliar code to find a bug, a code review using git diff main...HEAD piped into Claude, and a CLAUDE.md walkthrough showing how project rules translate into changed AI behaviour. These three scenarios cover the majority of daily use cases and give developers a concrete starting point rather than an abstract overview.

Ignoring feedback from the pilot. The pilot team's feedback is not optional. If they tell you the configuration is frustrating, believe them. If they tell you certain hooks are slowing them down, investigate. The purpose of the pilot is to learn, not to validate a predetermined plan.

No CLAUDE.md standards. When every project has a different (or missing) CLAUDE.md file, Claude Code behaves inconsistently across your codebase. Developers lose trust because the AI works brilliantly in one repository and terribly in another. A shared template solves this.

Skipping the feedback loop. A rollout is not a one-time event. It is an ongoing process. Schedule monthly reviews where you look at usage metrics, read survey responses, and adjust your configuration. The teams that get the most value from Claude Code are the ones that continuously refine how they use it.

Treating the rollout as a one-off project with a finish date. The mistake that hurts the most in retrospect is declaring victory the day you hit organisation-wide availability. Adoption is not a deploy. Usage patterns shift as developers find new workflows, model versions change how tool calls behave, and new hires need onboarding. Assign a permanent owner for Claude Code inside the platform team with maybe ten percent of their time, not a project team that disbands at phase three. Without that owner, the managed settings file goes stale, the CLAUDE.md template rots, and the audit dashboards stop getting looked at. Ninety days after phase three, a good owner is asking whether the defaults still make sense and whether the cost per active developer has moved in the right direction. A bad rollout forgets to ask.

Cost Management at Scale

Cost is the concern that keeps engineering managers up at night during an AI tool rollout. Here is how to approach it effectively.

First, set expectations. The Claude Pro plan, which includes Claude Code, is $20 per month per seat. Max plans are $100 or $200 per month, depending on the tier. Enterprise plans have custom pricing. Know which plan each developer needs before you start.

Not everyone needs the Max plan. Many developers will get excellent results on Pro, especially if you configure the default model to use Claude Sonnet rather than Claude Opus for routine tasks.

Second, use model selection policies in your managed settings. Set the default model to claude-sonnet-4-20250514 for everyday work. Developers who need Opus for complex tasks can override this per-session, but the default should be the most cost-effective model that delivers good results. Sonnet handles the vast majority of coding tasks, including file editing, code generation, and debugging, at a fraction of the Opus cost.

Third, monitor usage patterns. Your audit logs can tell you which developers are generating the most API calls and whether that usage is productive or wasteful. If someone is running Claude Code against the entire codebase repeatedly without clear purpose, a conversation about effective usage patterns is more productive than a hard spending cap.

Fourth, consider team-level budgets. Some organisations allocate a monthly API budget per team and let the team lead manage how it is distributed. This creates natural accountability without micro-managing individual developers.
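A team-level budget only creates accountability if team leads can see where they stand. Here is a minimal sketch of a status check. The 80 percent warning threshold and the team names in the example are illustrative assumptions, and the spend figures would come from whatever billing export you already have.

```python
def budget_status(spend: dict[str, float], budget: dict[str, float],
                  warn_at: float = 0.8) -> dict[str, str]:
    """Classify each budgeted team as "ok", "warning", or "over" for the month."""
    status = {}
    for team, limit in budget.items():
        used = spend.get(team, 0.0)
        if used > limit:
            status[team] = "over"
        elif used >= warn_at * limit:
            status[team] = "warning"
        else:
            status[team] = "ok"
    return status


print(budget_status(
    {"payments": 500.0, "search": 900.0, "infra": 1200.0},
    {"payments": 1000.0, "search": 1000.0, "infra": 1000.0},
))
# {'payments': 'ok', 'search': 'warning', 'infra': 'over'}
```

A "warning" is a prompt for a conversation with the team lead, in keeping with the advice above to prefer guidance over hard caps.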

Fifth, educate developers on cost-aware usage. Simple habits make a big difference. Using Sonnet for exploration and switching to Opus only for complex reasoning tasks. Writing clear, specific prompts instead of vague ones that require multiple rounds. Using CLAUDE.md to provide context that reduces the number of file reads Claude needs to make. For the specific settings and budget tactics, see our guide on Claude Code cost optimisation.

Organisation Management in the Claude Console

Rolling out Claude Code is half a client-side exercise, half an account-management exercise. The client-side half is what the previous sections covered. The account half lives in the Claude console under console.anthropic.com, and it is the half that trips people up when they try to add their fiftieth developer.

To create a Claude organisation, sign in to the console and use the workspace switcher to add a new workspace. Name it after the company or the business unit, not the person creating it, because the workspace name shows up in billing, audit logs, and SSO metadata. A personal name there becomes a problem later.

To switch organisation as an end user, developers click the workspace selector in the top-left corner of the console or pass the relevant workspace ID when they authenticate Claude Code. If a developer belongs to more than one workspace, the first /login inside Claude Code prompts them to pick one. They can switch later by running /logout followed by /login.

To change the primary owner or manage organisation members, open Workspace Settings, then Members. Only workspace owners can promote, demote, or remove other members. Make sure two people hold owner rights before anyone goes on holiday, otherwise a sudden departure means a support ticket to Anthropic to recover access.

Provision your developer seats against this workspace before the rollout starts. Nothing kills day-one momentum faster than a developer who runs claude for the first time, hits an authentication screen, and is told their account has no access.

Troubleshooting a Claude Code Rollout

The same issues come up across every rollout. Keep this list in the onboarding runbook so the first developer who hits each one does not become a blocker for the rest.

"API error: rate limit reached" from Claude Code. This is almost always a shared-tier issue, not a personal one. Check whether multiple developers are hitting the same workspace rate limit from CI jobs at the same time. Move CI traffic to a separate API key with its own budget, set ANTHROPIC_API_KEY per environment, and stagger scheduled jobs. If the errors persist for individuals, the managed settings block needs a lower default max_tokens so one runaway session cannot drain the shared pool.

api_timeout_ms in Claude Code. Increase the timeout via managed settings if developers on slower corporate networks see repeated aborts on long model responses. The default is usually fine; only change it if you can correlate the timeouts with the network path, not with a specific prompt.

"Claude Code always asking for permission." The developer's effective permission mode has fallen back to ask. Check three places in order: the managed settings file (did the MDM push actually land), the user settings at ~/.claude/settings.json, and the project settings at .claude/settings.json or .claude/settings.local.json. Project-level files override user-level files but not managed settings, so a stray "defaultPermissionMode": "ask" at the project level on a forked repo will frustrate a specific developer without affecting their neighbour.

"Claude code configuration file location" confusion. Developers looking for the config file often cannot find it because there are three valid locations: managed (/etc/claude/managed-settings.json or the macOS and Windows equivalents), user (~/.claude/settings.json), and project (.claude/settings.json in the repo root). Document all three in the runbook and explain the override order. Do not leave developers to discover the hierarchy from error messages.
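A short diagnostic that reports which layer actually decides the permission mode saves a lot of back and forth in support channels. The sketch below applies the override order described above: managed first, then project, then user. It assumes the mode lives under permissions.defaultPermissionMode, as in the example configuration in this guide, and the function names are hypothetical.

```python
import json
from pathlib import Path


def load_settings(path: Path) -> dict:
    """Load a settings file, treating a missing or unreadable file as empty."""
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, ValueError):
        return {}
    return data if isinstance(data, dict) else {}


def effective_permission_mode(managed: dict, project: dict, user: dict) -> tuple[str, str]:
    """Return (layer, mode) for the first layer that sets a permission mode."""
    for name, layer in (("managed", managed), ("project", project), ("user", user)):
        permissions = layer.get("permissions")
        if isinstance(permissions, dict) and "defaultPermissionMode" in permissions:
            return name, permissions["defaultPermissionMode"]
    # Nothing set anywhere: the fallback described in the troubleshooting entry above.
    return "fallback", "ask"
```

Load each of the three files with `load_settings` and pass the results in. If the answer is ("project", "ask") on one developer's machine and ("managed", "acceptEdits") on their neighbour's, the first machine most likely never received the managed file.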

"Claude allow all permissions" requests from developers. Someone will ask for bypassPermissions mode on day three. The honest answer is that it is available per-project and per-session, not as a global default, and that acceptEdits mode gets them most of what they want with far less risk. If a developer has a legitimate reason, allow it at the project level inside an internal tools repo, never in a production services repo.

"Claude code cheap" or "cheapest way to use Claude code" conversations. These searches usually come from developers who have just had their first bill. The answer is a combination of Sonnet as the default model, shorter CLAUDE.md files to trim context, and clearer prompts so the model does not burn tokens exploring. The Claude Code cost optimisation section earlier in this guide expands on the specific settings.
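One way to keep CLAUDE.md files short is to flag the ones that have grown past a budget. This is a rough sketch only: it assumes about four characters per token, which is a crude heuristic and not a real tokenizer, and the 2,000-token budget and function names are hypothetical values chosen for illustration.

```python
from pathlib import Path

# Assumption: roughly 4 characters per token. Real token counts will differ.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of text, rounding up."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def oversized_claude_md(
    repo_root: Path, token_budget: int = 2000
) -> list[tuple[Path, int]]:
    """Return (path, estimated_tokens) for every CLAUDE.md under repo_root
    whose estimate exceeds token_budget, sorted by path."""
    flagged = []
    for path in sorted(repo_root.rglob("CLAUDE.md")):
        text = path.read_text(encoding="utf-8", errors="replace")
        tokens = estimate_tokens(text)
        if tokens > token_budget:
            flagged.append((path, tokens))
    return flagged


if __name__ == "__main__":
    for path, tokens in oversized_claude_md(Path(".")):
        print(f"{path}: ~{tokens} tokens (estimate)")
```

Run from a repository root, this prints only the files over budget, which makes it easy to wire into a pre-commit check or a periodic report. Treat the numbers as a trend indicator, not as a billing figure.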

Keep a single shared document that captures each new issue the first time it appears, the symptom, the root cause, and the fix. By phase two of the rollout, this document is worth more than any vendor documentation, because it is written in the exact vocabulary your developers use.

The Lesson

The most important lesson from rolling out Claude Code across an organisation is that it is not a technology deployment. It is a behaviour change initiative. The technology is the easy part. Getting developers to change how they work, trust an AI assistant with their codebase, and build new habits around prompt-driven development is the hard part.

Phased rollouts work because they give people time to adapt. Managed settings work because they remove the burden of configuration from individual developers. Audit hooks work because they give the organisation visibility without surveilling individuals. CLAUDE.md standards work because they create consistency that builds trust.

But none of these technical measures matter if you skip the human elements. Training, feedback loops, internal champions, and visible leadership support are what turn a tool deployment into a genuine shift in how your organisation develops software.

Start small, listen carefully, iterate constantly, and expand when you are confident. That is the playbook.

Conclusion

Rolling out Claude Code across an organisation is a journey that takes weeks, not days. You start with a pilot team of three to five developers, configure managed settings for central control, set up hooks for audit visibility, and expand in phases from department to organisation-wide.

The technical configuration, including permissions models, audit hooks, and CLAUDE.md standards, is essential but not sufficient. You also need training, feedback loops, cost management strategies, and a willingness to adjust your approach based on what you learn.

If you are starting this journey, begin with your managed settings configuration. The guide on enterprise Claude Code with managed settings covers the full settings hierarchy and advanced patterns. For the hooks that power your audit trails, the Claude Code hooks and workflows guide walks through every event type and hook configuration in detail.

The organisations that get the most from Claude Code are not the ones that deploy it fastest. They are the ones that deploy it most thoughtfully. Take the time to do it right, and the results will speak for themselves.

Further Reading

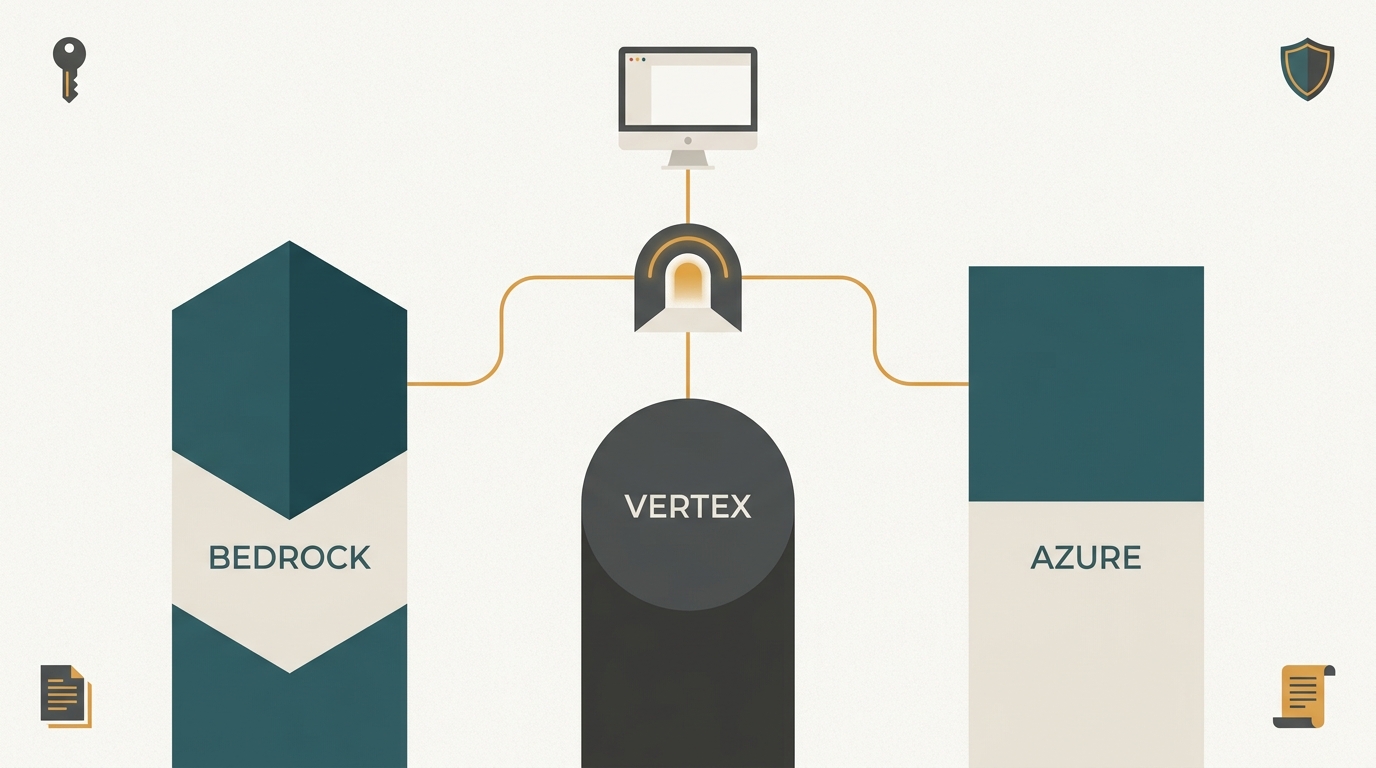

- Run Claude Cowork on Your Own AI - The Cowork sibling of this playbook. Covers the five MDM policy keys Cowork reads, the signed-manifest model for preinstalling plugins and skills, and how to point Cowork at Bedrock, Azure, Vertex, or a self-hosted inference cluster. Deploy both together and the desktop client and the CLI run under the same governance boundary.

- Enterprise Claude Code Managed Settings - Deeper treatment of the managed-settings.json file, MDM deployment, and the permissions hierarchy that underpins this playbook.

- Awesome AI Agent Governance - A curated list of tools, frameworks, and standards for governing AI agents in production.