Own how your organisation uses AI.

One self-hosted binary governs inference, auditing, evals, and every tool call across your AI fleet. Native integration with Claude Cowork. Works with any agent, any model, any provider.

Govern. Every tool call.

Every tool call passes four sub-millisecond Rust checks at the handler boundary: permission, secret scan, blocklist, rate limit. Role, department, and per-entity rules decide what passes. A denied call never reaches the model.

- Handler-boundary RBAC. Every tool call checked before the subprocess spawns.

- User, session, and trace headers propagated on every request.

- Secret scanning on inbound prompts and outbound responses.

- Per-endpoint rate limits. Inference, tool calls, and MCP all configured independently.

- Tool schemas validated at registry load. A mismatched schema fails startup, not a customer call.
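The four checks can be pictured as a short chain that stops at the first denial. The sketch below is illustrative only: `ToolCall`, `Policy`, the secret regex, and the sliding-window rate limiter are assumptions made for the example, not the binary's actual Rust implementation.

```python
from __future__ import annotations

import re
import time
from dataclasses import dataclass, field

# Hypothetical secret pattern: AWS-style access key IDs and "sk-" style API keys.
SECRET_PATTERN = re.compile(r"(AKIA[0-9A-Z]{16}|sk-[A-Za-z0-9]{20,})")


@dataclass
class ToolCall:
    user: str
    role: str
    tool: str
    arguments: str


@dataclass
class Policy:
    allowed_tools: dict[str, set[str]]  # role -> tools that role may call
    blocklist: set[str]                 # tools denied for everyone
    max_calls_per_minute: int
    _recent: dict[str, list[float]] = field(default_factory=dict)

    def check(self, call: ToolCall, now: float | None = None) -> tuple[bool, str]:
        """Run permission, secret scan, blocklist, rate limit; stop at the first denial."""
        now = time.monotonic() if now is None else now
        if call.tool not in self.allowed_tools.get(call.role, set()):
            return False, "permission"
        if SECRET_PATTERN.search(call.arguments):
            return False, "secret_scan"
        if call.tool in self.blocklist:
            return False, "blocklist"
        # Sliding 60-second window of this user's allowed calls.
        window = [t for t in self._recent.get(call.user, []) if now - t < 60.0]
        if len(window) >= self.max_calls_per_minute:
            self._recent[call.user] = window
            return False, "rate_limit"
        window.append(now)
        self._recent[call.user] = window
        return True, "allowed"
```

Only a call that returns `(True, "allowed")` would go on to spawn the tool subprocess; every other outcome carries the name of the check that denied it.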

Prove. Every decision.

When the auditor asks what AI did and who authorised it, you run one query against audit_events. Full lineage from AI request to tool call to MCP execution to cost, linked by trace_id to a real authenticated identity. Structured evidence, not policy documents.

- One audit_events row per AI request, tool call, and MCP execution. Written before the response returns to the caller.

- Structured JSON events for Splunk, ELK, Datadog, and Sumo Logic. Forward them or query them directly.

- Cost tracking by model, agent, department, and user.

- Every action linked by trace_id to a JWT-verified identity.

- CLI audit queries. Search, filter, export CSV. No ETL, no external log collector.
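A lineage lookup of this kind might look like the sketch below. Only the `audit_events` table and `trace_id` are named on this page; every other column is an assumption, and SQLite's in-memory database stands in for PostgreSQL so the example runs anywhere.

```python
import sqlite3

# Hypothetical schema: only audit_events and trace_id come from the page.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE audit_events (
        trace_id   TEXT,
        user_id    TEXT,
        event_type TEXT,  -- 'ai_request' | 'tool_call' | 'mcp_execution'
        detail     TEXT,
        created_at TEXT
    )
    """
)
conn.executemany(
    "INSERT INTO audit_events VALUES (?, ?, ?, ?, ?)",
    [
        ("t-1", "ana", "ai_request", "summarise Q1 ledger", "2026-04-01T09:00:00Z"),
        ("t-1", "ana", "tool_call", "ledger.read", "2026-04-01T09:00:01Z"),
        ("t-1", "ana", "mcp_execution", "finance-mcp", "2026-04-01T09:00:02Z"),
        ("t-2", "ben", "ai_request", "draft release notes", "2026-04-01T09:05:00Z"),
    ],
)


def lineage(trace_id: str) -> list:
    """Return every event for one trace, oldest first."""
    rows = conn.execute(
        "SELECT user_id, event_type, detail FROM audit_events "
        "WHERE trace_id = ? ORDER BY created_at",
        (trace_id,),
    )
    return rows.fetchall()
```

Calling `lineage("t-1")` returns the request, the tool call, and the MCP execution for that trace in time order, each tied to the same user.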

Plug in. To Claude Cowork.

Point your Claude Cowork fleet at a /v1/messages endpoint you operate. One self-hosted binary runs the gateway, the signed MCP allowlist, and the role-scoped org-plugins mount. On your network, in your database, under your audit trail.

- /v1/messages gateway pointed at any upstream you register in config.yaml. Your own inference cluster, a self-hosted Llama or Qwen, or a commodity provider (Bedrock, Vertex, Azure, Anthropic, OpenAI, Gemini, Groq).

- Signed MCP allowlist. Central, per-principal, revocable, MDM-distributable.

- org-plugins mount with role-scoped distribution and signature verification.

- Every tool call written to audit_events and linked by trace_id before the response returns.

- MDM rollout without Developer Mode. Ship the gateway URL and the binary, done.
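To make the routing idea concrete, here is a minimal sketch of resolving an upstream per department. The real `config.yaml` schema is not shown on this page, so the dictionary below is a hypothetical stand-in for a parsed config, and the upstream names and URLs are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Upstream:
    name: str
    base_url: str


# Hypothetical stand-in for what a parsed config.yaml might contain.
CONFIG = {
    "upstreams": {
        "onprem-llama": {"base_url": "http://llama.internal:8000"},
        "bedrock": {"base_url": "https://bedrock.example.invalid/"},
    },
    "routes": {"finance": "onprem-llama", "engineering": "bedrock"},
    "default_upstream": "bedrock",
}


def resolve_upstream(department: str, config: dict = CONFIG) -> Upstream:
    """Pick the upstream registered for a department, else the default."""
    name = config["routes"].get(department, config["default_upstream"])
    entry = config["upstreams"][name]
    return Upstream(name=name, base_url=entry["base_url"])


def messages_url(department: str) -> str:
    """Build the /v1/messages URL the gateway would forward to."""
    return resolve_upstream(department).base_url.rstrip("/") + "/v1/messages"
```

Under these assumptions, changing provider for a department is a one-line change to `routes`; the client keeps pointing at the same gateway URL.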

Every Cowork capability. On infrastructure you own.

One self-hosted binary delivers the full Claude Cowork feature set and three capabilities only a binary you run can unlock: any inference provider at any cost, data that never leaves your network, and a compliance boundary you control end-to-end.

| Feature | Claude Enterprise | Claude Custom | Claude Custom + systemprompt.io (self-hosted binary) |
|---|---|---|---|
| Core Cowork features | | | |
| Chat, file uploads, tool use | Available | Available | Available |
| Local filesystem access | Available | Available | Available |
| Local and remote MCP | Available | Available | Governed, signed, audited |
| Skills and plugins | Available | Local or admin-pushed to user machines | Signed, per-user, central registry |
| Memory | Available | Available (local) | Available (local) |
| Web search | Available | Available on Vertex and Azure | Available on any configured provider |
| VDI support | Available | Available | Available |
| Inference & cost | | | |
| Inference provider | Anthropic only | Bedrock, Vertex AI, or Azure Foundry (Claude only) | Any /v1/messages endpoint. Claude, OpenAI, Gemini, Groq, or self-hosted Llama / Qwen |
| Model choice | Claude models only | Claude models only (via cloud provider) | Any model. Route per department, task, or cost rule |
| Inference cost floor | Anthropic list pricing | Cloud provider markup on Claude | Your negotiated rate, including zero-marginal-cost on-prem inference |
| Per-user rate limits | Available | Blanket limits; per-user via gateway | Per-user, per-team, per-model at the gateway |
| Data provenance & ownership | | | |
| Data residency | Anthropic US infrastructure | Cloud provider region (Bedrock / Vertex / Azure) | Your datacenter, your jurisdiction. Air-gap capable |
| Prompt & completion storage | Stored on Anthropic infrastructure | Stored in cloud provider + OTel export | PostgreSQL you own. Nothing leaves your network |
| Vendor exit | Lose everything when you leave | Lose everything when you leave | Keep the binary, the database, and the data |
| Compliance & sovereignty | | | |
| SOC 2 / ISO 27001 / HIPAA | Anthropic's certifications cover the vendor, not your data | Cloud provider's certifications | Architecture supports your own SOC 2, ISO 27001, and HIPAA programs |
| EU / UK data sovereignty | US-based | Cloud-region dependent | Full EU / UK residency. You choose the region |
| Air-gapped deployment | Not available | Not available | Single binary, zero outbound calls |
| Identity-bound audit trail | Available (Anthropic-held) | OpenTelemetry only | Prompt → tool → MCP → cost, linked by trace_id, in your DB |
| Admin & governance | | | |
| Account management UI | Available | Not available | Available. Admin dashboard and CLI |
| Projects and org sharing | Available | Not available | Department-scoped skills and plugins |
| Compliance and analytics APIs | Available | Not available | audit_events, cost ledger, CSV export |
| Skills and plugin marketplace | Available | Not available | Internal marketplace with review and fork |
| OpenTelemetry export | Available | Available | Available. Plus structured JSON to your SIEM |

Baseline rows from Anthropic: Use Claude Cowork with third-party platforms

Governance you can prove

When the auditor asks what AI did and who authorised it, you query the answer. Every call traced, every secret isolated, every action logged as structured evidence.

Air-Gapped Deployment

A single Rust binary that is its own token issuer and validator. No external calls, no data leaving your network. Plug your existing IdP in and the binary handles the rest.

Single binary, zero outbound connections

Secrets Never Touch Inference

Secrets flow through MCP services, not inference endpoints. The agent calls the tool, the MCP service injects the credential server-side. No secrets in context windows, no secrets in logs.

Server-side credential injection via MCP

Identity-Bound Audit Trails

Every tool call is tied to the authenticated user, timestamped, and stored as structured JSON. Full lineage from request to tool call to MCP execution, linked by trace ID.

User-bound structured audit log per tool call

Event Hook Infrastructure

Lifecycle event hooks track every stage of tool execution, from session open to subagent completion. Hook data flows to your SIEM, logging pipeline, or custom handlers for monitoring and alerting.

Event hooks across the tool lifecycle

Data-Domain Scoping

Skills, plugins, and MCP servers scoped by role and department. Finance sees finance tools. Engineering sees engineering tools. Down to which MCP servers are even visible.

Role + department scoped tool surfaces

Full Data-Plane Ownership

Your infrastructure, your database, your compliance boundary. Auditable Rust source code under BSL-1.1. No data leaves your network unless you configure it to.

Self-hosted with auditable source

Architecture supports SOC 2, ISO 27001, and HIPAA compliance programs. Informed by OWASP Top 10 for Agentic Applications.

The complete AI governance stack

Everything you need to standardise, govern, and observe AI usage across your organisation. One library, one binary, one control plane.

Governance Pipeline

Four checks on every tool call before the subprocess spawns. Scope, secret scan, blocklist, rate limit.

MCP Governance

Per-server OAuth2. Central MCP registry with health monitoring. Every tool call audited before the subprocess spawns.

Secrets Management

Encrypted at rest, per-user key hierarchy. Secrets never touch inference.

Analytics & Observability

Audit trails, cost attribution, SIEM integration. Structured JSON for Splunk, ELK, Datadog, and Sumo Logic.

No Vendor Lock-In

Open formats, Git portability, lossless export. Your skills, your data, your choice.

Compliance

Architecture supports SOC 2, ISO 27001, and HIPAA compliance programs. Informed by OWASP Top 10 for Agentic Applications.

Claude Cowork Integration

The governance plane for Cowork on third-party inference. /v1/messages gateway, OAuth helper binary, signed MCP allowlist, org-plugins supply chain, full audit trail. One self-hosted binary.

Cowork Plugin (OAuth2)

One-click OAuth2 install for hosted Claude Desktop. Your governed skills, synced automatically.

Skill Marketplace

Curated library of your organisation's AI knowledge. Browse, install, create, and fork skills in 30 seconds.

Self-Hosted Binary

Single 50MB Rust binary. Air-gapped, stateless, PostgreSQL only. Your infrastructure, your data.

Deep-Dive Technical Guides

Enterprise AI governance and Claude Code resources backed by real production experience.

Run Claude Cowork on Your Own AI

Run Claude Cowork on any AI you operate. Bedrock, Azure, or a self-hosted Llama, with signed plugins, MDM rollout, and per-user JWT audit on infrastructure you own.

Read guide

AI Governance Platform Guide

What makes a real AI governance platform. Deployment models, compliance coverage, and the Gartner category mapped to implementation.

Read guide

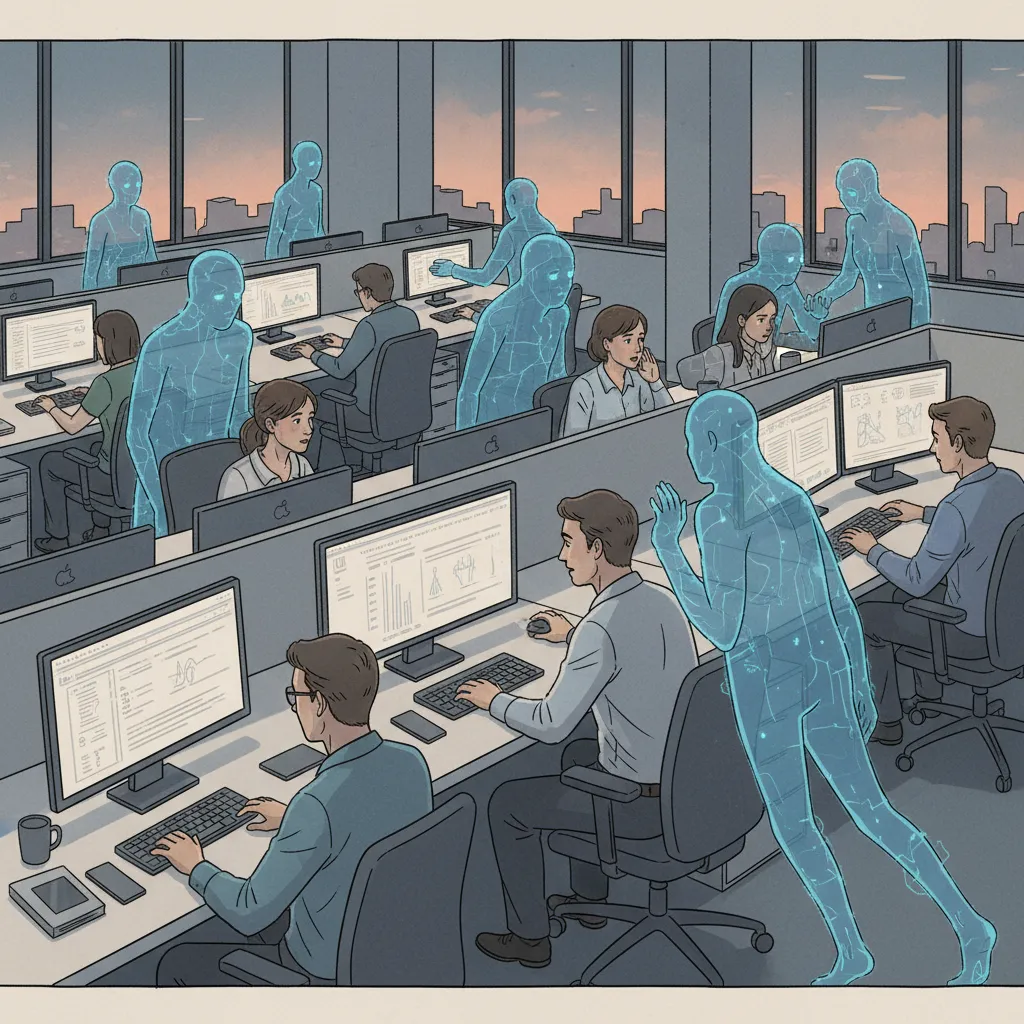

Shadow AI: The Hidden Risk

68% of employees use AI without IT approval. How to detect, govern, and manage unsanctioned AI usage across your organisation.

Read guide

What CTOs ask us

"Can we run Cowork on our own inference?"

Yes. Every Cowork chat turn routes through one gateway you operate. Pick any upstream: Bedrock, Azure OpenAI, Vertex AI, a self-hosted Llama or Qwen, or your own internal model. Prompt caching, extended thinking, and tool use survive the routing, even when the upstream speaks a different wire format.

Cowork never knows it isn't talking to Anthropic. You pick the provider, you keep the audit trail.

"How do we preinstall plugins and skills across the whole team?"

Publish a signed manifest at the gateway. Every enrolled device picks up the same plugin set, skill catalogue, and approved MCP server list on its next sync. End users never run an install command. Finance sees a finance catalogue, engineering sees an engineering one, and nothing crosses.

Ship a plugin to the whole fleet by publishing one file. Revoke it the same way.
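The verify-before-sync step can be sketched as follows. The page does not say which signature scheme the manifest uses, so this example swaps in HMAC-SHA256 from the Python standard library purely to show the flow; a production scheme would more likely use asymmetric signatures so devices hold only a public key. The manifest fields and function names are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(manifest: dict) -> bytes:
    """Serialise deterministically so signer and verifier hash the same bytes."""
    return json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_manifest(manifest: dict, key: bytes) -> str:
    # Stand-in scheme: HMAC-SHA256 over the canonical JSON.
    return hmac.new(key, canonical_bytes(manifest), hashlib.sha256).hexdigest()


def verify_manifest(manifest: dict, signature: str, key: bytes) -> bool:
    # compare_digest avoids leaking match position through timing.
    return hmac.compare_digest(sign_manifest(manifest, key), signature)


def load_manifest(manifest: dict, signature: str, key: bytes) -> dict:
    """Accept a manifest only if its signature checks out."""
    if not verify_manifest(manifest, signature, key):
        raise ValueError("manifest signature mismatch; refusing to sync")
    return manifest
```

A device that receives a manifest with an added or altered plugin entry fails verification and keeps its previous plugin set.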

"Do we get full visibility into AI agent activity?"

Every Cowork chat turn and every tool call it triggers lands in one audit table with the user, the trace, the model, the tokens, and the cost. Structured JSON also streams to Splunk, ELK, Datadog, or Sumo Logic on the same topic.

Prompt, tool call, MCP execution, and cost joined in one query. Per user, per model, per upstream.

"What about data sovereignty and air-gapped environments?"

Single Rust binary, self-hosted, air-gap capable, no external dependencies beyond PostgreSQL. Nothing calls anthropic.com. Pre-seeded sync supports genuinely offline fleets; signed manifests and keys stay inside the security boundary.

Zero outbound connections. Your infrastructure, your data, your jurisdiction.

"How do we enforce consistent AI policies across teams?"

The governance pipeline runs scope check, secret scan, blocklist, and rate limit on every tool call at the handler boundary, before the subprocess spawns. Role and department rules decide what passes. A denied call never reaches the model.

One policy layer across every team, every agent, every upstream provider.

"Can we actually track AI costs across providers?"

Every audit_events row carries provider, model, token counts, and cost in microdollars. Group by user, department, model, or upstream and compare providers in one aggregation. Your negotiated Bedrock rate versus list-price Anthropic, side by side.
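As a rough illustration of that aggregation, the sketch below sums cost per provider for one month. The row shape and field names are assumptions; only the idea of storing cost in microdollars comes from this page. Keeping the running totals as integers avoids floating-point drift, with a single conversion to dollars at the end.

```python
from collections import defaultdict

# Hypothetical rows; field names are assumptions for illustration.
EVENTS = [
    {"provider": "bedrock", "month": "2026-04", "cost_microdollars": 1_250_000},
    {"provider": "bedrock", "month": "2026-04", "cost_microdollars": 750_000},
    {"provider": "anthropic", "month": "2026-04", "cost_microdollars": 3_500_000},
    {"provider": "anthropic", "month": "2026-03", "cost_microdollars": 9_000_000},
]


def spend_by_provider(rows: list, month: str) -> dict:
    """Total spend in dollars per provider for one month."""
    totals = defaultdict(int)
    for row in rows:
        if row["month"] == month:
            totals[row["provider"]] += row["cost_microdollars"]  # integer sum
    return {provider: micro / 1_000_000 for provider, micro in totals.items()}
```

With the sample rows, `spend_by_provider(EVENTS, "2026-04")` reports 2.0 dollars for Bedrock and 3.5 dollars for Anthropic.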

"What did we spend on Bedrock vs direct Anthropic in April 2026?" is a one-line query.

"We have developers. Why not build it ourselves?"

The Cowork extension points are moving targets. Anthropic reshapes the credential-helper contract, the manifest signing model, and the MCP allowlist format on every release. An in-house governance layer means a permanent team tracking that surface. The binary absorbs the churn so your team does not have to.

You own the binary and the deployment. You do not own the maintenance burden.

Frequently asked questions

Where does our data go?

Nowhere you don't control. systemprompt.io runs on your infrastructure as a self-hosted binary. Data flows through your servers to your configured AI providers. Source code is auditable under BSL-1.1. Nothing leaves your network unless you configure it to.

How does this fit into our existing security stack?

Every governance decision, tool call, and session event is emitted as structured JSON. Forward it to your existing SIEM, logging pipeline, or alerting system. The binary also stores everything in PostgreSQL for direct querying from the dashboard and CLI.
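For a sense of what a SIEM would receive, here is a sketch of one governance decision serialised as a JSON line. The field names are hypothetical; the actual event schema is not documented on this page.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def governance_event(
    trace_id: str,
    user: str,
    tool: str,
    decision: str,
    reason: str,
    at: Optional[datetime] = None,
) -> str:
    """Serialise one decision as a single JSON line (hypothetical field names)."""
    at = at or datetime.now(timezone.utc)
    return json.dumps(
        {
            "timestamp": at.isoformat(),
            "trace_id": trace_id,
            "user": user,
            "tool": tool,
            "decision": decision,  # "allow" or "deny"
            "reason": reason,      # which check produced the decision
        },
        sort_keys=True,
    )
```

One object per line keeps the stream easy to tail, ship with a log forwarder, or load into a query tool without custom parsing.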

What happens when AI providers ship breaking changes?

systemprompt.io absorbs that complexity. Provider APIs, plugin architectures, and protocol specs change constantly. The governance layer adapts so your policies, audit trails, and access controls remain stable regardless of what changes underneath.

Can we enforce different policies for different teams?

Yes. Role-based access control with department scoping. Skills, plugins, and MCP servers are scoped per role and per department. Engineering sees engineering tools. Finance sees finance tools. Policies are defined centrally and enforced consistently.

Does this lock us into a single AI provider?

No. systemprompt.io is provider-agnostic. It supports multiple model providers through a unified governance layer. Switching providers requires configuration changes, not rewrites. Your governance policies, access controls, and audit trails remain intact.

What does deployment actually look like?

A single 50MB compiled Rust binary with PostgreSQL. No containers required, no microservices, no external dependencies. Air-gap capable. Deploy to your own servers, connect to your database, and the system is running. Branded sandbox to production in days, not months.

How does licensing work?

The underlying library is licensed under the Business Source License (BSL-1.1). You can evaluate it for free with no time limit. Production use requires a commercial licence, which is fully negotiable. Contact us to discuss terms that fit your organisation.

Who owns the code we build on top of it?

You do. All implementation code, extensions, skills, configurations, and customisations are your intellectual property. You only need a licence for the underlying systemprompt.io library itself. Everything you build on top of it belongs to your company.

Let's talk about your implementation

Discuss technical implementation, enterprise licensing, or custom integrations with the founder. For teams that have evaluated the template and are ready to move forward.

- Technical implementation: Deployment architecture, IdP integration, SIEM pipelines, and custom extensions

- Enterprise licensing: Volume licensing, SLA guarantees, and perpetual licence terms under BSL-1.1

- Custom integrations: Rust extensions, custom governance rules, and provider-specific configurations
