Prelude

Agent teams use approximately 7x the tokens of a single-session approach. Anthropic's own C compiler project ran 16 Claude agents in parallel, consumed roughly 2 billion input tokens, and cost an estimated $20,000 in API usage.

That number is not a warning label. It is the price of genuine parallelism. The question is not whether agent teams are expensive. They are. The question is whether the work you are doing justifies the multiplier, and whether you know enough about the failure modes to avoid the common ways developers burn through their budget with nothing to show for it.

This guide covers the patterns that justify the cost, the failure modes that do not appear in the documentation, and the specific configurations that practitioners have figured out through expensive trial and error.

The Problem

The single-conversation approach works well until it does not. The breaking point is not speed. It is context quality.

LLM reasoning quality degrades as the context window fills. Practitioners report that effective performance starts declining at 50-60% context utilisation, well before the theoretical limit. A conversation at 200K tokens is not just expensive. It produces worse output than a fresh conversation at 20K tokens, because the model is spreading attention across irrelevant history from three tasks ago.

The standard advice is to use /clear between tasks and /compact when context grows. This works for sequential workflows. But when you need three modules refactored, four test files written, and a code review run, sequential execution means each task waits for the previous one. The accumulated context from early tasks degrades the quality of later ones, even after compaction.

Agent teams solve this by giving each task a fresh context window. The trade-off is real: you pay more tokens for better reasoning per task. The skill is knowing when that trade-off is worth it, and the specific failure modes that can turn a 7x multiplier into a 70x one.

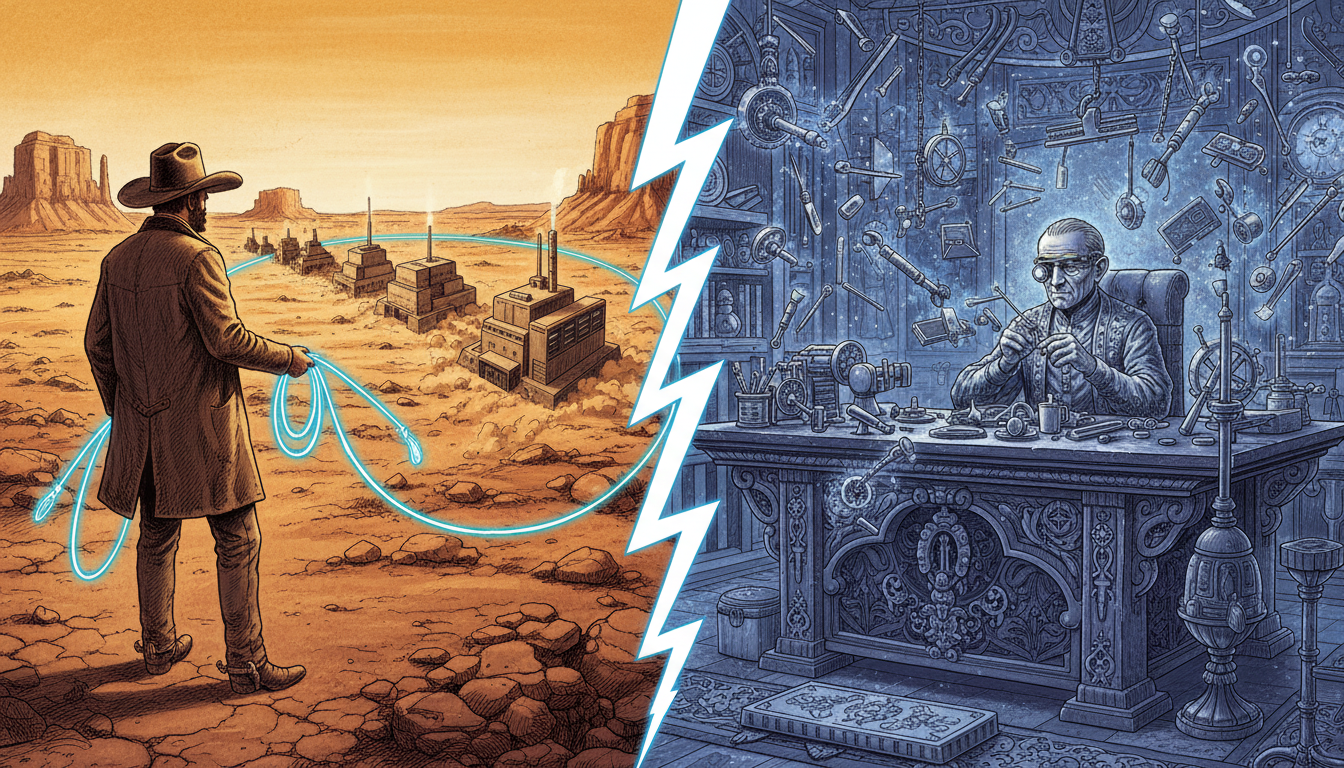

The Journey

The Cost Reality

Before committing to agent teams, understand what you are signing up for.

The token multiplier. Each agent spawns with its own context window and loads the full system prompt. Three parallel agents mean 3x the base context cost before any work begins. Agent teams (the experimental multi-session feature) use roughly 7x the tokens of a single session. Subagents (spawned within a single session) use 3-4x.

The rate limit trap. Claude enforces both monthly spending limits and per-minute throughput limits (requests per minute, input tokens per minute, output tokens per minute) independently. Low quota usage does not protect you from throughput limits. Five parallel Opus agents launching simultaneously can trigger per-minute throttling even if your monthly spend is at 6%. The usage dashboard shows quota, not throughput, so you get throttled without warning. The fix: stagger agent launches by 30-60 seconds instead of spawning them simultaneously.
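The stagger can be scripted. Below is a minimal Python sketch, assuming a hypothetical `run_agent` coroutine that runs one agent to completion (the real launch mechanism depends on whether you use the Agent tool, an SDK, or the CLI). Start times are spaced `interval_s` apart, while the agents themselves still run concurrently.

```python
import asyncio
from typing import Awaitable, Callable


async def run_staggered(
    agent_ids: list[str],
    run_agent: Callable[[str], Awaitable[str]],
    interval_s: float = 45.0,
) -> list[str]:
    """Start agents interval_s seconds apart, then wait for all of them.

    run_agent is a hypothetical coroutine that runs one agent to completion.
    Each agent becomes a task as soon as it starts, so execution overlaps;
    only the start times (and their burst of prompt tokens) are spread out.
    """
    tasks = []
    for i, agent_id in enumerate(agent_ids):
        if i > 0:
            await asyncio.sleep(interval_s)  # spread launches out over time
        tasks.append(asyncio.create_task(run_agent(agent_id)))
    return list(await asyncio.gather(*tasks))  # results in launch order


async def demo() -> list[str]:
    async def fake_agent(agent_id: str) -> str:
        await asyncio.sleep(0)  # stand-in for real agent work
        return f"{agent_id}: done"

    # A tiny interval keeps the demo instant; use 30-60 s in practice.
    return await run_staggered(["security", "performance", "tests"], fake_agent, interval_s=0.01)


if __name__ == "__main__":
    print(asyncio.run(demo()))
```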

The plan minimum. On the Pro plan ($20/month), 5 parallel subagents can exhaust the usage limit in 15 minutes. For any serious use of agent teams, treat the Max plan as the minimum. API users need to set explicit spending limits to avoid surprise bills.

The worst case. One documented case involved 49 parallel subagents consuming 887,000 tokens per minute over 2.5 hours. The estimated cost was $8,000-15,000 for a single session. This happened because the developer did not constrain spawning and the model kept creating subagents for every small batch of files.

None of this means agent teams are too expensive. It means they require budget discipline. Set a turn limit (max_turns, written maxTurns in JSON configuration) to cap tool-use turns per agent. Keep teams to 3-5 agents. Clean up idle agents immediately. Treat agent teams as a constrained resource, not unlimited parallelism.

```json
{
  "maxTurns": 20,
  "model": "claude-opus-4-5"
}
```
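The same limits can also be enforced on the orchestration side. Below is a minimal sketch of a hypothetical `SpawnBudget` guard (not a Claude Code or SDK class) that refuses to spawn past a team-size cap, stops an agent at its turn limit, and frees a slot when an idle agent is cleaned up. The defaults mirror the 3-5 agent guidance and the `maxTurns` value above.

```python
from dataclasses import dataclass, field


@dataclass
class SpawnBudget:
    """Hypothetical orchestrator-side guard; not part of Claude Code or any SDK."""

    max_agents: int = 5  # upper end of the 3-5 agent guidance
    max_turns: int = 20  # matches the maxTurns example above
    turns: dict[str, int] = field(default_factory=dict)  # live agent -> turns used

    def spawn(self, agent_id: str) -> None:
        # Refusing here is what stops a model from creating a new
        # subagent for every small batch of files.
        if len(self.turns) >= self.max_agents:
            raise RuntimeError(f"team cap of {self.max_agents} reached; refusing to spawn {agent_id}")
        self.turns[agent_id] = 0

    def record_turn(self, agent_id: str) -> bool:
        """Count one tool-use turn; return False once the agent has hit its limit."""
        self.turns[agent_id] += 1
        return self.turns[agent_id] < self.max_turns

    def release(self, agent_id: str) -> None:
        """Remove a finished or idle agent so its slot can be reused."""
        self.turns.pop(agent_id, None)
```

With a cap in place, an unbounded fan-out becomes a visible error instead of a silent bill.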

For a complete breakdown of Claude Code pricing and cost management, see the cost optimisation guide.

When Agent Teams Actually Help

Agent teams justify their cost in specific situations. The pattern is consistent: they work when the work is genuinely parallel, file-isolated, and benefits from independent reasoning.

Parallel code review. Launch three agents to review the same PR from different angles: security, performance, and test coverage. Each agent brings a fresh perspective without being anchored by the others' findings. This is strictly better than sequential review because the second reviewer is not biased by the first reviewer's observations.

Multi-module features. When a feature touches frontend, backend, and database layers with clear file boundaries, each agent can own one layer. The key constraint is file isolation: if two agents need to edit the same file, use worktrees or sequential coordination.

Debugging with competing hypotheses. The debate pattern. Launch multiple agents, each investigating a different theory about the root cause. This counteracts confirmation bias, which is the most expensive failure mode in debugging. Once a single agent explores one theory, that theory anchors all subsequent investigation. Multiple independent investigators find root causes faster because they are not influenced by each other's paths.

Cross-codebase research. Explore agents reading different parts of a large codebase in parallel. Each returns a summary to the parent conversation. The parent context receives five concise summaries instead of 50 file contents.

The deciding question. Before spawning agents, ask: are the subtasks genuinely independent, and do they touch different files? If yes to both, agents help. If either answer is no, a single conversation with /clear between tasks is cheaper and often faster.
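The two-question test can be written down as a small helper. A sketch, assuming each planned subtask is described by a hypothetical `Subtask` record listing the files it will edit and the other subtasks it depends on:

```python
from dataclasses import dataclass, field
from itertools import combinations


@dataclass
class Subtask:
    """Hypothetical description of one planned unit of agent work."""

    name: str
    files: set[str]  # paths this subtask will edit
    depends_on: set[str] = field(default_factory=set)  # names of other subtasks it needs


def agents_help(subtasks: list[Subtask]) -> bool:
    """True only if the subtasks are independent AND touch disjoint files."""
    names = {t.name for t in subtasks}
    independent = all(not (t.depends_on & names) for t in subtasks)
    disjoint = all(not (a.files & b.files) for a, b in combinations(subtasks, 2))
    return independent and disjoint
```

If it returns False, fall back to one conversation with /clear between tasks.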

The Failure Modes Nobody Warns You About

These are the problems that practitioners discover after their first expensive agent session. Each one has cost real developers real money.

The amnesia problem. In long-running Agent Teams sessions, when the lead agent's context fills up and auto-compaction triggers, it can lose awareness of its teammates. Unlike CLAUDE.md content, which persists across compaction, team configuration and state are not re-injected into the lead's context after summarisation. The lead stops sending messages, cannot reassign work, and behaves as if the team does not exist. No error message appears. This is an open issue (GitHub #23620) specific to the experimental Agent Teams feature, not subagents. The mitigation: create explicit handoff documents before context reaches high utilisation, and keep individual team sessions focused enough to complete before the lead's context fills up.

Opus over-spawning. Opus has a documented tendency to delegate tasks that should be done directly. It will spawn a subagent to fix three files when doing it inline would be faster and cheaper. Each unnecessary subagent inherits the full system prompt (roughly 120K tokens of base overhead). If you notice Claude spawning agents for trivial changes, tell it explicitly: "do not use subagents for this, handle it directly." Anthropic's own prompt engineering guidance flags this behaviour.

The worktree discovery gap. Agents running in worktree isolation do not discover custom agent definitions from .claude/agents/. The worktree's .claude/ directory only receives settings.local.json; skills, agents, docs, and rules are missing. If your workflow depends on custom agents, worktree-isolated agents will not have access to them.

Missing dependencies. Worktrees are fresh git checkouts. node_modules, Python virtual environments, compiled artifacts, and any untracked dependencies do not carry over. An agent that spawns in a worktree and tries to build will fail until it runs the project's setup commands. Include explicit setup instructions in the agent's prompt if worktree isolation is needed.
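One way to write those instructions is to derive them from the files present in the checkout. The marker-to-command table below is an assumption to adapt per project (the `.venv/bin/pip` path is POSIX-only), and `setup_prompt` is a hypothetical helper, not a Claude Code feature.

```python
from pathlib import Path

# Assumed rules: per ecosystem, the first marker file found decides the command.
SETUP_RULES: dict[str, list[tuple[str, str]]] = {
    "node": [("package-lock.json", "npm ci"), ("package.json", "npm install")],
    "python": [("requirements.txt", "python -m venv .venv && .venv/bin/pip install -r requirements.txt")],
}


def setup_prompt(files_present: set[str]) -> str:
    """Build a setup instruction to prepend to a worktree agent's prompt."""
    commands = []
    for rules in SETUP_RULES.values():
        for marker, command in rules:
            if marker in files_present:
                commands.append(command)
                break  # one command per ecosystem
    if not commands:
        return ""
    return "Before making changes, run: " + " && ".join(commands)


def setup_prompt_for(worktree: Path) -> str:
    """Same as setup_prompt, reading top-level file names from a checkout."""
    return setup_prompt({p.name for p in worktree.iterdir()})
```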

Infinite spawning loops. Agents spawning subagents can create cascading chains that consume memory and tokens until the process runs out of RAM. This is documented in GitHub issue #4850. One safeguard is enforced: subagents cannot spawn other subagents. Teams of agents, however, can still fall into coordination patterns that effectively loop.

Silent file corruption. When two agents edit the same file without worktree isolation, they overwrite each other's changes. This is not a merge conflict. It is data loss. The second agent's write replaces the first agent's work entirely, and neither agent is aware of the conflict. Strict file ownership (each agent touches different files) is not optional. It is required for correctness.
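A simplified model shows why no error surfaces. The sketch assumes each agent reads the file, applies its edit to that private copy, and writes the whole file back; real edit tools differ in detail, but any write based on a stale read behaves this way. The file name and edits are placeholders.

```python
import tempfile
from pathlib import Path


def agent_write(path: Path, snapshot: str, old: str, new: str) -> None:
    """An 'agent' applies its edit to the copy it read earlier, then writes the whole file."""
    path.write_text(snapshot.replace(old, new))


def simulate_overlap() -> str:
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "users.rs"
        path.write_text("fn create() {}\nfn delete() {}\n")

        # Both agents read the file before either one writes.
        snapshot_a = path.read_text()
        snapshot_b = path.read_text()

        agent_write(path, snapshot_a, "fn create() {}", "fn create() -> Result<(), AppError> { Ok(()) }")
        agent_write(path, snapshot_b, "fn delete() {}", "fn delete() -> Result<(), AppError> { Ok(()) }")

        # Agent B's write replaced agent A's: no exception, no conflict marker.
        return path.read_text()


if __name__ == "__main__":
    print(simulate_overlap())
```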

The 80/20 Pattern

The practitioners who report the best results from agent teams spend 80% of the workflow on planning and 20% on execution. This ratio sounds wrong until you consider the alternative: executing a bad plan in parallel multiplies the cost of the mistake by the number of agents.

Phase 1: Research with Explore agents. Launch 1-3 Explore agents to understand the codebase. Explore agents are read-only (they cannot edit files), safe (they cannot break anything), and cheap (run them on Sonnet or Haiku). Each agent focuses on a different aspect: one maps the module structure, another finds relevant test patterns, a third checks for existing implementations of what you are about to build. The parent conversation receives summaries, not raw file contents.

Phase 2: Plan with a Plan agent. Feed the research summaries into a Plan agent. The Plan agent designs the implementation: which files to change, what order, what the changes look like. Because it is working from summaries rather than raw files, its context is compact and its reasoning quality is high. Review the plan before proceeding.

Phase 3: Execute with implementation agents. Only after the plan is validated do you commit tokens to implementation. Each implementation agent receives a specific, well-scoped prompt derived from the plan. "Refactor error handling in src/api/users.rs to use AppError, following the pattern in the plan" is a specific prompt that produces first-attempt results. "Improve error handling in the API" is a vague prompt that produces exploratory work at Opus prices.

The handoff discipline. At each phase transition, create an explicit handoff document: a structured summary of what was learned, decided, and planned. Do not rely on auto-compaction to preserve the right information. Write it down in a .md file in .claude/ so any agent can read it.

```markdown
# .claude/handoff-2026-04-16.md

## Research findings

- Module X uses pattern Y for error handling
- Three existing tests cover the auth flow

## Plan

- Agent A: refactor src/api/users.rs error handling
- Agent B: update tests in tests/api/

## Constraints

- Do not modify shared types in src/types.rs until both agents sync
```
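If phase transitions are scripted, the handoff can be generated from structured notes rather than written by hand. A minimal sketch with hypothetical helper names, producing the same section layout as the handoff example:

```python
from datetime import date
from pathlib import Path


def render_handoff(findings: list[str], plan: list[str], constraints: list[str], day: date) -> str:
    """Render a handoff document with the three sections used in this guide."""
    lines = [f"# .claude/handoff-{day.isoformat()}.md", ""]
    for title, items in (("Research findings", findings), ("Plan", plan), ("Constraints", constraints)):
        lines.append(f"## {title}")
        lines.extend(f"- {item}" for item in items)
        lines.append("")
    return "\n".join(lines)


def write_handoff(repo_root: Path, text: str, day: date) -> Path:
    """Save the handoff under .claude/ so any agent can read it."""
    path = repo_root / ".claude" / f"handoff-{day.isoformat()}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(text, encoding="utf-8")
    return path
```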

For a complete look at how these phases fit into daily Claude Code usage, the daily workflows guide covers the foundational habits.

Context Management That Actually Works

Context management is the difference between agent teams that justify their cost and agent teams that waste money.

The 60% rule. Effective reasoning degrades well before the context window fills. Treat 60% utilisation as the practical limit for complex work. Beyond that, quality drops and the model starts losing track of earlier instructions. For agent teams, this means keeping each agent's task small enough that it completes within 60% of its context window.
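As arithmetic, the rule is a threshold check. A sketch assuming a 200K-token window (confirm your model's actual limit) and that your tooling reports an agent's current token count; integer math avoids floating-point edge cases at the boundary.

```python
CONTEXT_WINDOW = 200_000  # assumption: a 200K-token window
HANDOFF_PERCENT = 60  # the practical limit from this section


def needs_handoff(tokens_used: int, window: int = CONTEXT_WINDOW) -> bool:
    """True once an agent has used 60% or more of its window."""
    return tokens_used * 100 >= HANDOFF_PERCENT * window


def task_fits(estimated_task_tokens: int, tokens_used: int = 0, window: int = CONTEXT_WINDOW) -> bool:
    """True if a task should finish before the agent crosses the 60% line."""
    return (tokens_used + estimated_task_tokens) * 100 <= HANDOFF_PERCENT * window
```

With a 200K window, the line sits at 120K tokens: that is the point to write a handoff and start fresh.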

Handoff documents over auto-compaction. When a long-running agent approaches context limits, do not wait for auto-compaction. Create an explicit summary of progress, decisions made, and remaining work. Save it to a file. Start a fresh agent with the summary as input. Auto-compaction preserves tokens but loses nuance. Explicit handoffs preserve exactly what matters.

CLAUDE.md as an index. Keep your CLAUDE.md under 50 lines. Use it as an index that points to detailed reference files in an agent_docs/ directory. Every line of CLAUDE.md is loaded into every agent's context. A 500-line CLAUDE.md across 5 agents means 2,500 lines of base context overhead. An index with 50 lines and on-demand detail loading is 10x more efficient. Some teams run under 30 lines. At systemprompt.io, the standard pattern for managing CLAUDE.md at scale is an index of under 50 lines pointing to skill-specific reference files.
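The overhead arithmetic, plus a hypothetical check you could run in CI or a pre-commit hook to keep the index under 50 lines:

```python
from pathlib import Path

MAX_INDEX_LINES = 50


def team_overhead_lines(claude_md_lines: int, agents: int) -> int:
    """Every agent loads all of CLAUDE.md, so overhead scales with team size."""
    return claude_md_lines * agents


def check_claude_md(path: Path = Path("CLAUDE.md")) -> str:
    """Report whether CLAUDE.md is short enough to serve as an index."""
    n = len(path.read_text(encoding="utf-8").splitlines())
    if n > MAX_INDEX_LINES:
        return f"{path}: {n} lines; move detail into agent_docs/ and keep the index to {MAX_INDEX_LINES} or fewer"
    return f"{path}: {n} lines, OK"


if __name__ == "__main__":
    print(team_overhead_lines(500, 5))  # 2500
    print(team_overhead_lines(50, 5))  # 250
```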

Terse summaries from subagents. Each subagent result is appended to the parent conversation's context. If five subagents each return 2,000-token detailed reports, the parent gains 10,000 tokens of context from results alone. Structure subagent prompts to return concise summaries: "return a 3-sentence summary of your findings, not the full analysis."
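Prompting for brevity is the primary control. If you run your own orchestrator, a truncation backstop can catch subagents that ignore the instruction. A sketch; `cap_summary` is hypothetical, and its regex sentence split is naive (abbreviations such as "e.g." will cut early).

```python
import re


def cap_summary(report: str, max_sentences: int = 3) -> str:
    """Keep only the first max_sentences sentences of a subagent's report.

    Sentences are split on '.', '!' or '?' followed by whitespace, a naive
    rule that also splits after abbreviations such as 'e.g.'.
    """
    sentences = re.split(r"(?<=[.!?])\s+", report.strip())
    return " ".join(sentences[:max_sentences])
```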

Model Routing for Cost Control

Not every agent needs the most expensive model. Model routing across agents is one of the most effective cost levers.

Explore agents on Haiku. File reading, grep searches, and codebase navigation work well on Haiku ($0.80/M input tokens). These tasks do not require deep reasoning. An Explore agent that reads 20 files and returns a summary does not benefit from Opus-level intelligence.

Implementation agents on Sonnet. Routine code generation, test writing, and mechanical refactoring run well on Sonnet ($3/M input tokens). Sonnet handles 80% of daily development work at one-fifth the cost of Opus.

Lead agent and complex reasoning on Opus. Reserve Opus ($15/M input tokens) for the lead agent's coordination decisions, complex architectural planning, and subtle debugging where getting it right the first time matters more than saving tokens.

| Model | Input $/MTok | Output $/MTok | Cost vs Opus (input) | Recommended agent role |
|---|---|---|---|---|
| Claude Haiku | $0.80 | $4 | 5.3% | Explore, grep, codebase navigation |
| Claude Sonnet | $3 | $15 | 20% | Implementation, test writing, refactors |
| Claude Opus | $15 | $75 | 100% | Lead coordination, architectural planning |

Data source: Anthropic pricing, as of 2026-04.
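The table translates directly into a per-agent cost estimate. A sketch that hard-codes the table's list prices and the role routing above; it ignores prompt caching and batch discounts, which change real bills.

```python
# USD per million tokens (input, output), copied from the pricing table.
PRICES: dict[str, tuple[float, float]] = {
    "haiku": (0.80, 4.00),
    "sonnet": (3.00, 15.00),
    "opus": (15.00, 75.00),
}

# Role-to-model routing recommended in this section.
ROUTING: dict[str, str] = {"explore": "haiku", "implement": "sonnet", "lead": "opus"}


def cost_on(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost in USD; ignores prompt caching and batch discounts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def agent_cost(role: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one agent after routing its role to a model."""
    return cost_on(ROUTING[role], input_tokens, output_tokens)
```

At these prices, an Explore agent that reads 1M input tokens costs about $0.80 on Haiku versus $15 on Opus.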

The stagger pattern. Do not launch all agents simultaneously. Stagger by 30-60 seconds to smooth the throughput curve and avoid rate limit walls. This is especially important with Opus, where each request consumes significant throughput capacity.

Agent Teams require Opus. The experimental Agent Teams feature (enabled via CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS) currently requires Opus for all teammates. Subagents within a single session can use any model.

Worktree Isolation: The Real Story

Worktrees provide genuine file isolation by creating a separate git checkout for each agent. Two agents can modify the same file without overwriting each other because they are working on different copies. When an agent finishes, its changes exist on a separate branch; if two branches touched the same lines, you resolve that as an ordinary merge conflict.

This is genuinely useful for speculative work. "Try three different approaches to this refactoring in parallel" becomes practical when each approach runs in its own worktree. You review all three branches and pick the best one.

But worktrees have real limitations that the documentation does not emphasise.

Custom agents are invisible. Agent definitions in .claude/agents/ are not copied to worktree checkouts. If your workflow relies on custom agents, worktree-isolated agents will not find them.
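One workaround, assuming you launch worktree agents yourself, is to copy the missing directories in as a setup step. A sketch; `sync_claude_dirs` is a hypothetical helper that copies only `.claude/agents/` and `.claude/skills/` from the main working tree.

```python
import shutil
from pathlib import Path

# The directories reported as missing from worktree checkouts.
DIRS_TO_COPY = ("agents", "skills")


def sync_claude_dirs(main_tree: Path, worktree: Path) -> list[Path]:
    """Copy .claude/agents and .claude/skills from the main tree into a worktree.

    Returns the destination directories that were written. Files in the
    worktree with the same names are overwritten.
    """
    copied = []
    for name in DIRS_TO_COPY:
        src = main_tree / ".claude" / name
        if src.is_dir():
            dst = worktree / ".claude" / name
            shutil.copytree(src, dst, dirs_exist_ok=True)  # creates parent dirs as needed
            copied.append(dst)
    return copied
```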

Dependencies do not follow. node_modules, Python virtual environments, compiled binaries, and any untracked files are not present in the worktree. Include setup commands in the agent prompt: "run npm install before making changes."

Cleanup risks. Worktrees with no committed changes are cleaned up automatically. This is usually correct, but if an agent did work and forgot to commit, the work is lost. Agents that encounter errors mid-task may leave uncommitted changes that get silently discarded.
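Before any cleanup, it is worth listing which worktrees still hold uncommitted work. A sketch built on `git worktree list --porcelain` and `git status --porcelain`; the helper names are hypothetical and git must be on PATH.

```python
import subprocess


def parse_worktrees(porcelain: str) -> list[dict[str, str]]:
    """Parse `git worktree list --porcelain` output into one dict per worktree."""
    worktrees: list[dict[str, str]] = []
    current: dict[str, str] = {}
    for line in porcelain.splitlines():
        if not line:  # a blank line ends one worktree record
            if current:
                worktrees.append(current)
                current = {}
            continue
        key, _, value = line.partition(" ")
        current[key] = value  # flag lines such as "detached" get an empty value
    if current:
        worktrees.append(current)
    return worktrees


def dirty_worktrees(repo: str = ".") -> list[str]:
    """Return paths of worktrees that still have uncommitted or untracked changes."""
    listing = subprocess.run(
        ["git", "-C", repo, "worktree", "list", "--porcelain"],
        capture_output=True, text=True, check=True,
    ).stdout
    dirty = []
    for wt in parse_worktrees(listing):
        if "bare" in wt or "prunable" in wt:
            continue  # no working files to inspect
        status = subprocess.run(
            ["git", "-C", wt["worktree"], "status", "--porcelain"],
            capture_output=True, text=True, check=True,
        ).stdout
        if status.strip():
            dirty.append(wt["worktree"])
    return dirty
```

Commit or copy out anything `dirty_worktrees()` reports before removing a worktree.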

Gitignore the worktree directory. Add .claude/worktrees/ to your .gitignore to keep worktree artifacts out of your repository status.

Subagents vs Agent Teams: Choosing the Right Tool

Claude Code offers two distinct multi-agent mechanisms. Using the wrong one wastes tokens.

Subagents are individual agents spawned within your conversation via the Agent tool. They run in your session, report results back to you, and have no awareness of each other. Use subagents for focused tasks (research a module, write tests for one file, review a diff), cost-sensitive work (3-4x token overhead), and situations where you do not need agents to communicate with each other. For understanding how subagents compare to other Claude Code extensibility patterns, the skills vs agents vs MCP guide covers the decision framework.

Agent Teams are an experimental feature that coordinates 2-16 separate Claude Code sessions with shared task lists and peer-to-peer messaging. Enable them by setting the environment variable in your shell:

```bash
export CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1
```

Or set the same variable in settings.json through the env key:

```json
{
  "env": {
    "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
  }
}
```

Teams currently run all teammates on Opus. Because teams are still experimental, expect rough edges: session resumption does not work with in-process teammates, task status can lag, shutdown can be slow, and context compaction can cause the lead to lose team awareness (GitHub #23620). Use teams for work that genuinely requires inter-agent discussion (debugging with competing hypotheses, design reviews), cross-layer coordination where agents need to signal completion to each other, and sustained parallel work where the coordination infrastructure justifies its overhead.

The 5-6 task rule. Whether using subagents or teams, keep each agent to 5-6 tasks maximum. Beyond that, the agent's own context fills up and quality degrades. Better to finish one set, summarise, and start a fresh agent.
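Splitting a long task list into fresh-agent batches is straightforward. A Python sketch using the lower bound of the rule (five tasks per agent); `batch_tasks` is a hypothetical helper.

```python
from typing import Iterator


def batch_tasks(tasks: list[str], max_per_agent: int = 5) -> Iterator[list[str]]:
    """Yield consecutive batches of at most max_per_agent tasks.

    Each batch is meant for a fresh agent: finish the batch, write a
    handoff summary, then start the next agent from that summary.
    """
    for start in range(0, len(tasks), max_per_agent):
        yield tasks[start:start + max_per_agent]
```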

Troubleshooting Common Failures

These are the error patterns practitioners hit first. Each has a specific cause and a specific fix.

"Rate limit exceeded" within minutes of launching. The per-minute throughput limit (tokens-per-minute, requests-per-minute) is separate from your monthly quota. Five Opus agents launching simultaneously can hit the TPM wall even at 5% monthly usage. Fix: stagger launches by 30-60 seconds, or drop exploration and implementation agents to Sonnet. The dashboard shows monthly quota, not TPM, so you will not see this coming in the billing view.

"Lead agent stopped responding to the team." Context compaction on the lead has silently dropped team awareness (GitHub #23620). The team processes keep running but the lead no longer sends messages or updates the task list. Fix: write an explicit handoff document to .claude/handoff-YYYY-MM-DD.md before the lead reaches 60% context, kill the lead, and start a fresh lead with the handoff as input. Do not rely on auto-compaction to preserve coordination state.

"Agent cannot find my custom skill or sub-agent definition." Worktree checkouts only inherit settings.local.json from .claude/. Custom agent files, skill manifests, and rules do not carry over. Fix: either run the agent in the main working tree, or copy .claude/agents/ and .claude/skills/ into the worktree as part of the agent's setup script.

"Build fails immediately in a worktree agent." The worktree is a fresh git checkout with no node_modules, no Python venv, and no compiled artifacts. Fix: include the project setup command in the agent prompt ("run npm install && npm run build before making changes"). For Python, activate or recreate the venv. For Rust, the target/ directory is fine to skip because cargo rebuilds from scratch.

"My worktree changes disappeared." Worktrees with no committed changes can be cleaned up automatically. If an agent errored mid-task and left uncommitted work, that work is gone. Fix: instruct agents to stage and commit intermediate work (git add -A && git commit -m wip) before any risky operation. Review worktree branches before running cleanup.

"Two agents corrupted the same file." No worktree isolation plus overlapping file ownership. The second write replaces the first; no conflict marker appears because git is not involved when two agents write to the same working-tree file. Fix: enforce strict file ownership in the initial plan. Each agent touches a disjoint set of paths. If overlap is unavoidable, move the overlapping work to worktrees and merge the branches after.

"Opus keeps spawning subagents I did not ask for." Opus has a documented over-delegation bias. Fix: add an explicit instruction to the agent's prompt: "Do not use subagents or the Agent tool for this task. Edit files directly." This reliably suppresses the behaviour.

Subagents vs Agent Teams at a Glance

| Dimension | Subagents | Agent Teams |
|---|---|---|
| Activation | Default, no flag needed | CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS flag |
| Session model | One session, spawned children | 2-16 separate sessions |
| Inter-agent messaging | None (children report to parent) | Peer-to-peer messaging, shared task list |
| Model constraint | Any model per subagent | All teammates on Opus |
| Token overhead vs single session | 3-4x | Roughly 7x |
| Plan minimum for serious use | Pro can work, Max recommended | Max plan minimum |
| Good for | Focused research, test writing, parallel review of one diff | Cross-layer feature work, competing-hypothesis debugging, design reviews |
| Known failure modes | Over-spawning, context pollution from verbose returns | Lead amnesia on compaction, session resume quirks, slow shutdown |
| Recovery if things go wrong | Kill parent conversation, start over | Kill lead, preserve teammate branches, start new lead from handoff doc |

Data source: Claude Code sub-agents docs and agent teams docs, as of 2026-04.

Use this as the first-pass decision. If a row in the Agent Teams column does not apply to your work, you probably want subagents.

When Not to Use Agent Teams

Agent teams are not universally better than single-conversation work. Several situations favour keeping everything in one context.

Sequential dependencies. If step B requires the output of step A, you gain nothing from parallelism. The second agent waits for the first, and you pay coordination overhead for zero speed benefit.

Same-file edits without worktrees. Two agents editing the same file without worktree isolation will silently corrupt each other's work. This is not a merge conflict that can be resolved. It is data loss.

Cost-sensitive work. A single session with /clear between tasks is 3-7x cheaper than subagents or agent teams for the same work. If budget matters more than speed, stay sequential. As Addy Osmani noted: "Single sessions remain more cost-effective for routine tasks."

Small tasks. Spawning an agent has fixed overhead: system prompt loading, context initialisation, and tool setup. For tasks that take 30 seconds in a single conversation, the spawn overhead makes agents slower, not faster.

Active debugging. Tracking a bug through a chain of function calls requires following one thread of logic. The accumulated context of a long debugging session is a feature, not a bug. You want the model to remember what it found ten questions ago. The hooks guide covers how to add automation within a single conversation for this kind of sequential work.

The Lesson

Agent teams are a power tool with a visible price tag. The 7x token multiplier is the honest cost of genuine parallelism, where each agent gets a fresh context window and independent reasoning capacity.

The practitioners who get value from agent teams share three habits. First, they spend 80% of the workflow on planning: Explore agents for research, Plan agents for design, explicit handoff documents between phases. The planning investment prevents the most expensive mistake, which is executing a bad approach in parallel across multiple agents. Second, they enforce strict file ownership. Each agent touches different files. No exceptions. Two agents on the same file without worktree isolation means data loss. Third, they monitor context at 60%, not 95%. They create handoff documents before auto-compaction triggers, because auto-compaction preserves tokens but loses coordination state.

The developers who waste money on agent teams also share patterns. They spawn too many agents for tasks that could be done directly. They let context fill to capacity and wonder why the lead agent forgot about its teammates. They skip the planning phase and jump straight to parallel execution, multiplying the cost of a bad approach by the number of agents.

The deciding question remains simple. Are the subtasks genuinely independent, file-isolated, and complex enough that fresh context improves the output? If yes, agent teams earn their cost. If not, a single conversation with /clear between tasks is cheaper, simpler, and often faster.

Conclusion

Agent teams are the most powerful and most expensive feature in Claude Code. Used correctly, they turn hours of sequential work into minutes of parallel execution. Used without discipline, they turn a $20 task into a $200 one.

Start with Explore agents for research. They are cheap, safe, and demonstrate the value of context isolation without the complexity of full agent coordination. Graduate to parallel implementation agents when you consistently find yourself waiting for one independent task to finish before starting the next. Use worktree isolation when you want to experiment without consequences, but test your setup first to confirm dependencies and custom agents are available.

If you are building standalone agents outside of Claude Code, the build a custom agent guide covers that path. For the foundational daily workflow habits that make agent teams productive, start with the daily workflows guide. For managing the cost of all of this, the cost optimisation guide covers the full picture including thinking token caps, context indexing, and model selection. The systemprompt.io skills system provides reusable agent definitions that work across single-session and team configurations without the worktree discovery gap.

The goal is not to use agents everywhere. It is to use them where fresh context and parallel execution produce better results at a justified cost.